Feedforward Networks

Feedforward Network

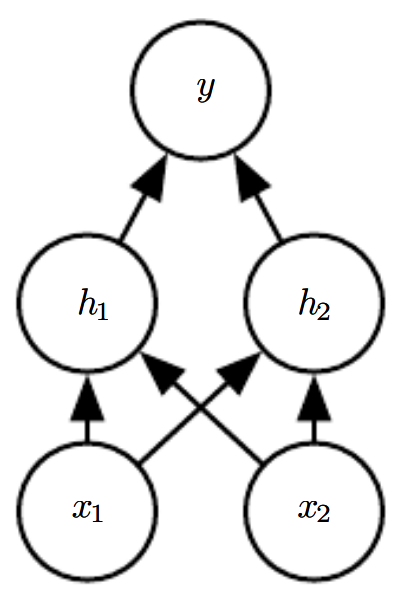

A Feedforward Network, or Multilayer Perceptron (MLP), is a neural network composed solely of densely connected layers. It is the classic neural network architecture in the literature. Inputs $x$ are passed through one or more layers of hidden units $h$ to predict a target $y$. The activation functions are generally chosen to be non-linear, because a stack of purely linear layers collapses into a single linear map; the non-linearity is what allows flexible function approximation.

Image Source: Deep Learning, Goodfellow et al.
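
The description above can be made concrete with a minimal sketch of a forward pass, written in plain Python. The layer sizes, the `tanh` activation, the uniform weight initialization, and the helper names (`dense`, `init_layer`, `mlp_forward`) are all assumptions chosen for illustration; they are not prescribed by the source.

```python
import math
import random


def dense(x, weights, biases):
    """One densely connected layer: every output unit sees every input."""
    return [
        sum(w * xi for w, xi in zip(row, x)) + b
        for row, b in zip(weights, biases)
    ]


def tanh_layer(values):
    """Element-wise non-linear activation (tanh is an illustrative choice)."""
    return [math.tanh(v) for v in values]


def init_layer(n_in, n_out, rng):
    """Random weights and zero biases (a simple, hypothetical init scheme)."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [
        [rng.uniform(-scale, scale) for _ in range(n_in)]
        for _ in range(n_out)
    ]
    biases = [0.0] * n_out
    return weights, biases


def mlp_forward(x, layers):
    """Pass x through hidden layers h, then a linear output layer giving y."""
    h = x
    for weights, biases in layers[:-1]:
        h = tanh_layer(dense(h, weights, biases))
    weights, biases = layers[-1]
    return dense(h, weights, biases)


rng = random.Random(0)
sizes = [3, 4, 4, 1]  # 3 inputs, two hidden layers of 4 units, 1 output
layers = [init_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
y_hat = mlp_forward([0.5, -1.0, 2.0], layers)
print(y_hat)
```

The output layer is left linear here, which suits a regression target; a classification setup would typically add a sigmoid or softmax on top.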

Tasks

| Task | Papers | Share |
|---|---|---|
| Object Detection | 69 | 8.86% |
| Self-Supervised Learning | 62 | 7.96% |
| Image Generation | 39 | 5.01% |
| Decoder | 35 | 4.49% |
| Semantic Segmentation | 27 | 3.47% |
| Image Classification | 21 | 2.70% |
| Disentanglement | 15 | 1.93% |
| Face Swapping | 13 | 1.67% |
| Language Modelling | 11 | 1.41% |

Dense Connections