Attention

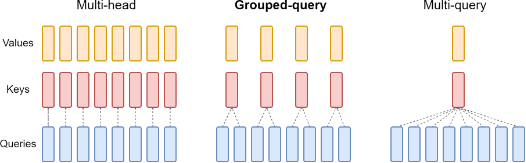

Grouped-query attention

Introduced by Ainslie et al. in GQA: Training Generalized Multi-Query Transformer Models from Multi-Head Checkpoints

Grouped-query attention is an interpolation of multi-query and multi-head attention: query heads are divided into groups, and each group shares a single key head and value head. It achieves quality close to multi-head attention at speed comparable to multi-query attention.
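To make the interpolation concrete, here is a minimal pure-Python sketch of how query heads can share key/value heads. It is an illustration under stated assumptions, not the paper's implementation: the function names `softmax`, `attend`, and `grouped_query_attention` are hypothetical, inputs are nested lists of floats shaped (heads, sequence, dimension), consecutive query heads are assumed to form a group, and masking, batching, and the learned projections are omitted.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(q, k, v):
    """Scaled dot-product attention for one head.

    q, k, v are lists of vectors (sequence length x dimension).
    """
    d = len(q[0])
    out = []
    for qi in q:
        # Similarity of this query position to every key position.
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        out.append([sum(w * vj[c] for w, vj in zip(weights, v))
                    for c in range(len(v[0]))])
    return out


def grouped_query_attention(q_heads, k_heads, v_heads):
    """Assumed grouping: consecutive query heads share one key/value head."""
    num_q, num_kv = len(q_heads), len(k_heads)
    if num_kv == 0 or len(v_heads) != num_kv or num_q % num_kv != 0:
        raise ValueError("query heads must divide evenly among key/value heads")
    group_size = num_q // num_kv
    return [
        attend(q, k_heads[h // group_size], v_heads[h // group_size])
        for h, q in enumerate(q_heads)
    ]
```

With one key/value head this sketch reduces to multi-query attention, and with as many key/value heads as query heads it reduces to multi-head attention. Intermediate settings trade key/value memory against quality.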

Source: GQA: Training Generalized Multi-Query Transformer Models from Multi-Head Checkpoints

Tasks

| Task | Papers | Share |
|---|---|---|
| Language Modelling | 4 | 15.38% |
| Question Answering | 3 | 11.54% |
| Arithmetic Reasoning | 2 | 7.69% |
| Code Generation | 2 | 7.69% |
| Math Word Problem Solving | 2 | 7.69% |
| Multi-task Language Understanding | 2 | 7.69% |
| Large Language Model | 1 | 3.85% |
| Text Classification | 1 | 3.85% |
| Text Generation | 1 | 3.85% |

Components

| Component | Type |
|---|---|
| Feedforward Network | Feedforward Networks |
| Scaled Dot-Product Attention | Attention Mechanisms |
| Softmax | Output Functions |