Search Results for author: Anton Osokin

Found 20 papers, 9 papers with code

Searching for Better Database Queries in the Outputs of Semantic Parsers

no code implementations • 13 Oct 2022 • Anton Osokin, Irina Saparina, Ramil Yarullin

The task of generating a database query from a question in natural language suffers from ambiguity and insufficiently precise description of the goal.

SPARQLing Database Queries from Intermediate Question Decompositions

1 code implementation • EMNLP 2021 • Irina Saparina, Anton Osokin

Our pipeline consists of two parts: a neural semantic parser that converts natural language questions into the intermediate representations and a non-trainable transpiler to the SPARQL query language (a standard language for accessing knowledge graphs and semantic web).

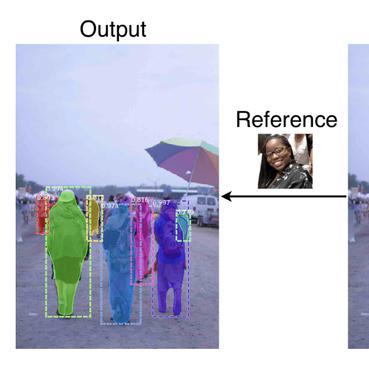

OS2D: One-Stage One-Shot Object Detection by Matching Anchor Features

1 code implementation • ECCV 2020 • Anton Osokin, Denis Sumin, Vasily Lomakin

In this paper, we consider the task of one-shot object detection, which consists in detecting objects defined by a single demonstration.

Cost-Sensitive Training for Autoregressive Models

no code implementations • 8 Dec 2019 • Irina Saparina, Anton Osokin

Training autoregressive models to better predict under the test metric, instead of maximizing the likelihood, has been reported to be beneficial in several use cases but brings additional complications, which prevent wider adoption.

Scaling Matters in Deep Structured-Prediction Models

no code implementations • 28 Feb 2019 • Aleksandr Shevchenko, Anton Osokin

In this paper, we hypothesize that one reason for joint training of deep energy-based models to fail is the incorrect relative normalization of different components in the energy function.

Tube-CNN: Modeling temporal evolution of appearance for object detection in video

no code implementations • 6 Dec 2018 • Tuan-Hung Vu, Anton Osokin, Ivan Laptev

Our goal in this paper is to learn discriminative models for the temporal evolution of object appearance and to use such models for object detection.

Marginal Weighted Maximum Log-likelihood for Efficient Learning of Perturb-and-Map models

no code implementations • 21 Nov 2018 • Tatiana Shpakova, Francis Bach, Anton Osokin

We consider the structured-output prediction problem through probabilistic approaches and generalize the "perturb-and-MAP" framework to more challenging weighted Hamming losses, which are crucial in applications.

Quantifying Learning Guarantees for Convex but Inconsistent Surrogates

no code implementations • NeurIPS 2018 • Kirill Struminsky, Simon Lacoste-Julien, Anton Osokin

We study consistency properties of machine learning methods based on minimizing convex surrogates.

Modeling Spatio-Temporal Human Track Structure for Action Localization

no code implementations • 28 Jun 2018 • Guilhem Chéron, Anton Osokin, Ivan Laptev, Cordelia Schmid

In order to localize actions in time, we propose a recurrent localization network (RecLNet) designed to model the temporal structure of actions on the level of person tracks.

GANs for Biological Image Synthesis

1 code implementation • ICCV 2017 • Anton Osokin, Anatole Chessel, Rafael E. Carazo Salas, Federico Vaggi

In this paper, we propose a novel application of Generative Adversarial Networks (GAN) to the synthesis of cells imaged by fluorescence microscopy.

SEARNN: Training RNNs with Global-Local Losses

1 code implementation • ICLR 2018 • Rémi Leblond, Jean-Baptiste Alayrac, Anton Osokin, Simon Lacoste-Julien

We propose SEARNN, a novel training algorithm for recurrent neural networks (RNNs) inspired by the "learning to search" (L2S) approach to structured prediction.

On Structured Prediction Theory with Calibrated Convex Surrogate Losses

1 code implementation • NeurIPS 2017 • Anton Osokin, Francis Bach, Simon Lacoste-Julien

We provide novel theoretical insights on structured prediction in the context of efficient convex surrogate loss minimization with consistency guarantees.

Minding the Gaps for Block Frank-Wolfe Optimization of Structured SVMs

no code implementations • 30 May 2016 • Anton Osokin, Jean-Baptiste Alayrac, Isabella Lukasewitz, Puneet K. Dokania, Simon Lacoste-Julien

In this paper, we propose several improvements on the block-coordinate Frank-Wolfe (BCFW) algorithm from Lacoste-Julien et al. (2013) recently used to optimize the structured support vector machine (SSVM) objective in the context of structured prediction, though it has wider applications.

Context-aware CNNs for person head detection

1 code implementation • ICCV 2015 • Tuan-Hung Vu, Anton Osokin, Ivan Laptev

First, we leverage person-scene relations and propose a Global CNN model trained to predict positions and scales of heads directly from the full image.

Tensorizing Neural Networks

4 code implementations • NeurIPS 2015 • Alexander Novikov, Dmitry Podoprikhin, Anton Osokin, Dmitry Vetrov

Deep neural networks currently demonstrate state-of-the-art performance in several domains.

Ranked #73 on

Image Classification

on MNIST

Ranked #73 on

Image Classification

on MNIST

Breaking Sticks and Ambiguities with Adaptive Skip-gram

3 code implementations • 25 Feb 2015 • Sergey Bartunov, Dmitry Kondrashkin, Anton Osokin, Dmitry Vetrov

Recently proposed Skip-gram model is a powerful method for learning high-dimensional word representations that capture rich semantic relationships between words.

Submodular relaxation for inference in Markov random fields

1 code implementation • 15 Jan 2015 • Anton Osokin, Dmitry Vetrov

In this paper we address the problem of finding the most probable state of a discrete Markov random field (MRF), also known as the MRF energy minimization problem.

Multi-utility Learning: Structured-output Learning with Multiple Annotation-specific Loss Functions

no code implementations • 23 Jun 2014 • Roman Shapovalov, Dmitry Vetrov, Anton Osokin, Pushmeet Kohli

Structured-output learning is a challenging problem; particularly so because of the difficulty in obtaining large datasets of fully labelled instances for training.

A Principled Deep Random Field Model for Image Segmentation

no code implementations • CVPR 2013 • Pushmeet Kohli, Anton Osokin, Stefanie Jegelka

We discuss a model for image segmentation that is able to overcome the short-boundary bias observed in standard pairwise random field based approaches.

Minimizing Sparse High-Order Energies by Submodular Vertex-Cover

no code implementations • NeurIPS 2012 • Andrew Delong, Olga Veksler, Anton Osokin, Yuri Boykov

Inference on high-order graphical models has become increasingly important in recent years.