Search Results for author: Daniel R. Jiang

Found 9 papers, 5 papers with code

Faster Approximate Dynamic Programming by Freezing Slow States

no code implementations • 3 Jan 2023 • Yijia Wang, Daniel R. Jiang

We consider infinite horizon Markov decision processes (MDPs) with fast-slow structure, meaning that certain parts of the state space move "fast" (and in a sense, are more influential) while other parts transition more "slowly."

Multi-Step Budgeted Bayesian Optimization with Unknown Evaluation Costs

1 code implementation • NeurIPS 2021 • Raul Astudillo, Daniel R. Jiang, Maximilian Balandat, Eytan Bakshy, Peter I. Frazier

To overcome the shortcomings of existing approaches, we propose the budgeted multi-step expected improvement, a non-myopic acquisition function that generalizes classical expected improvement to the setting of heterogeneous and unknown evaluation costs.
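The snippet above says the method generalizes classical expected improvement (EI). For reference, here is a minimal sketch of that classical, single-step EI for maximization under a Gaussian posterior N(mu, sigma^2). It is not the paper's budgeted multi-step acquisition function. The function name `expected_improvement` and its arguments are illustrative only.

```python
import math


def expected_improvement(mu: float, sigma: float, best: float) -> float:
    """Classical closed-form EI for maximization (illustrative sketch).

    mu, sigma: posterior mean and standard deviation at a candidate point.
    best: best objective value observed so far.
    """
    if sigma <= 0.0:
        # Degenerate posterior: the improvement is deterministic.
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))          # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)   # standard normal PDF
    return (mu - best) * cdf + sigma * pdf
```

This score is myopic and ignores evaluation cost. Handling heterogeneous, unknown costs and looking several steps ahead is the part the paper addresses.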

Efficient Nonmyopic Bayesian Optimization via One-Shot Multi-Step Trees

1 code implementation • NeurIPS 2020 • Shali Jiang, Daniel R. Jiang, Maximilian Balandat, Brian Karrer, Jacob R. Gardner, Roman Garnett

In this paper, we provide the first efficient implementation of general multi-step lookahead Bayesian optimization, formulated as a sequence of nested optimization problems within a multi-step scenario tree.

Lookahead-Bounded Q-Learning

1 code implementation • ICML 2020 • Ibrahim El Shar, Daniel R. Jiang

We introduce the lookahead-bounded Q-learning (LBQL) algorithm, a new, provably convergent variant of Q-learning that seeks to improve the performance of standard Q-learning in stochastic environments through the use of "lookahead" upper and lower bounds.
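Here is a rough sketch of how upper and lower bounds can constrain a tabular Q-learning update: take a standard Q-learning step, then project the result into [lower, upper]. This sketch assumes the bound tables `lower` and `upper` are already given. The abstract calls them "lookahead" bounds, and their construction is not reproduced here. The name `bounded_q_update` and its parameters are hypothetical.

```python
from typing import Dict, Hashable, Sequence, Tuple

Key = Tuple[Hashable, Hashable]  # (state, action)


def bounded_q_update(
    q: Dict[Key, float],
    lower: Dict[Key, float],   # hypothetical, precomputed lower bounds
    upper: Dict[Key, float],   # hypothetical, precomputed upper bounds
    s: Hashable, a: Hashable, r: float, s_next: Hashable,
    actions: Sequence[Hashable],
    alpha: float = 0.1, gamma: float = 0.95,
) -> float:
    # Standard Q-learning target: r + gamma * max_b Q(s', b); missing entries default to 0.
    target = r + gamma * max(q.get((s_next, b), 0.0) for b in actions)
    current = q.get((s, a), 0.0)
    updated = current + alpha * (target - current)
    # Project the updated estimate onto [lower, upper]; missing bounds are treated as infinite.
    lo = lower.get((s, a), float("-inf"))
    hi = upper.get((s, a), float("inf"))
    q[(s, a)] = min(max(updated, lo), hi)
    return q[(s, a)]
```

The projection step is where the bounds help: an estimate that is still noisy cannot drift outside the interval.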

Dynamic Subgoal-based Exploration via Bayesian Optimization

1 code implementation • 21 Oct 2019 • Yijia Wang, Matthias Poloczek, Daniel R. Jiang

Reinforcement learning in sparse-reward navigation environments with expensive and limited interactions is challenging and poses a need for effective exploration.

BoTorch: A Framework for Efficient Monte-Carlo Bayesian Optimization

2 code implementations • NeurIPS 2020 • Maximilian Balandat, Brian Karrer, Daniel R. Jiang, Samuel Daulton, Benjamin Letham, Andrew Gordon Wilson, Eytan Bakshy

Bayesian optimization provides sample-efficient global optimization for a broad range of applications, including automatic machine learning, engineering, physics, and experimental design.

Feedback-Based Tree Search for Reinforcement Learning

no code implementations • ICML 2018 • Daniel R. Jiang, Emmanuel Ekwedike, Han Liu

Inspired by recent successes of Monte-Carlo tree search (MCTS) in a number of artificial intelligence (AI) application domains, we propose a model-based reinforcement learning (RL) technique that iteratively applies MCTS on batches of small, finite-horizon versions of the original infinite-horizon Markov decision process.

Model-based Reinforcement Learning

Model-based Reinforcement Learning

reinforcement-learning

+1

reinforcement-learning

+1

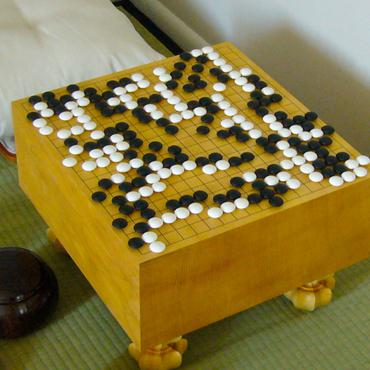

Monte Carlo Tree Search with Sampled Information Relaxation Dual Bounds

no code implementations • 20 Apr 2017 • Daniel R. Jiang, Lina Al-Kanj, Warren B. Powell

Monte Carlo Tree Search (MCTS), most famously used in game-play artificial intelligence (e.g., the game of Go), is a well-known strategy for constructing approximate solutions to sequential decision problems.
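To make the MCTS reference concrete, here is a minimal sketch of the standard UCT (UCB1) child-selection rule used by many MCTS variants. It shows only generic tree-policy selection. The paper's sampled information relaxation dual bounds are not modeled. The `Child` record and `uct_select` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Child:
    action: str
    visits: int = 0
    total_value: float = 0.0  # sum of returns backed up through this child


def uct_select(children: List[Child], c: float = math.sqrt(2.0)) -> Child:
    # Try every action once before scoring with UCB1.
    for child in children:
        if child.visits == 0:
            return child
    parent_visits = sum(ch.visits for ch in children)

    def score(ch: Child) -> float:
        # Mean return plus an exploration bonus that shrinks as the child is visited more.
        return ch.total_value / ch.visits + c * math.sqrt(math.log(parent_visits) / ch.visits)

    return max(children, key=score)
```

Larger `c` favors rarely visited actions. With `c = 0`, selection is purely greedy on the mean return.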

Risk-Averse Approximate Dynamic Programming with Quantile-Based Risk Measures

no code implementations • 7 Sep 2015 • Daniel R. Jiang, Warren B. Powell

In this paper, we consider a finite-horizon Markov decision process (MDP) for which the objective at each stage is to minimize a quantile-based risk measure (QBRM) of the sequence of future costs; we call the overall objective a dynamic quantile-based risk measure (DQBRM).
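As a rough illustration of what a quantile-based risk measure computes, here is a sketch of two common examples evaluated on a sample of costs: the empirical alpha-quantile (value-at-risk) and conditional value-at-risk. These are standard examples chosen for illustration, not necessarily the exact QBRM definition used in the paper. The dynamic (DQBRM) objective would apply such a measure stage by stage to future costs, which this sketch does not attempt. The function names are hypothetical.

```python
import math
from typing import Sequence


def empirical_var(costs: Sequence[float], alpha: float) -> float:
    """Empirical alpha-quantile (value-at-risk) of a sample of costs."""
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must be in (0, 1)")
    ordered = sorted(costs)
    k = math.ceil(alpha * len(ordered))  # 1-indexed order statistic
    return ordered[max(k, 1) - 1]


def empirical_cvar(costs: Sequence[float], alpha: float) -> float:
    """Empirical CVaR via the Rockafellar-Uryasev form: VaR + E[(X - VaR)^+] / (1 - alpha)."""
    var = empirical_var(costs, alpha)
    excess = sum(max(c - var, 0.0) for c in costs) / len(costs)
    return var + excess / (1.0 - alpha)
```

For costs 1 through 10 and alpha = 0.75, VaR is the 8th-smallest cost, 8. CVaR averages the worst 25% of outcomes, (10 + 9 + 0.5 * 8) / 2.5 = 9.2.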