Search Results for author: Jaesik Park

Found 55 papers, 24 papers with code

Learning SO(3)-Invariant Semantic Correspondence via Local Shape Transform

no code implementations • 17 Apr 2024 • Chunghyun Park, SeungWook Kim, Jaesik Park, Minsu Cho

Establishing accurate 3D correspondences between shapes stands as a pivotal challenge with profound implications for computer vision and robotics.

3Doodle: Compact Abstraction of Objects with 3D Strokes

no code implementations • 6 Feb 2024 • Changwoon Choi, Jaeah Lee, Jaesik Park, Young Min Kim

We propose 3Doodle, which generates descriptive and view-consistent sketch images given multi-view images of the target object.

Stable and Consistent Prediction of 3D Characteristic Orientation via Invariant Residual Learning

no code implementations • 20 Jun 2023 • SeungWook Kim, Chunghyun Park, Yoonwoo Jeong, Jaesik Park, Minsu Cho

Learning to predict reliable characteristic orientations of 3D point clouds is an important yet challenging problem, as different point clouds of the same class may have largely varying appearances.

Extending CLIP's Image-Text Alignment to Referring Image Segmentation

1 code implementation • 14 Jun 2023 • Seoyeon Kim, Minguk Kang, Dongwon Kim, Jaesik Park, Suha Kwak

Referring Image Segmentation (RIS) is a cross-modal task that aims to segment an instance described by a natural language expression.

Ranked #3 on Referring Expression Segmentation on RefCOCO testA (using extra training data)

Binary Radiance Fields

no code implementations • NeurIPS 2023 • Seungjoo Shin, Jaesik Park

In this paper, we propose binary radiance fields (BiRF), a storage-efficient radiance field representation employing binary feature encoding, which encodes local features using binary encoding parameters that take a value of either +1 or -1.
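
Why a ±1 encoding saves storage can be shown with a minimal sketch. It assumes plain sign binarization of a flat list of parameters, and the helper names `binarize`, `pack_bits`, and `unpack_bits` are hypothetical. This is not BiRF's training or rendering code. Each ±1 value needs one bit, whereas a float32 parameter needs 32.

```python
import struct

def binarize(params):
    """Map real-valued parameters to +1/-1 by their sign (0 maps to +1)."""
    return [1 if p >= 0 else -1 for p in params]

def pack_bits(signs):
    """Pack +1/-1 values into bytes, one bit per value (+1 -> 1, -1 -> 0)."""
    out = bytearray((len(signs) + 7) // 8)
    for i, s in enumerate(signs):
        if s > 0:
            out[i // 8] |= 1 << (i % 8)
    return bytes(out)

def unpack_bits(data, n):
    """Recover n values of +1/-1 from packed bytes."""
    return [1 if (data[i // 8] >> (i % 8)) & 1 else -1 for i in range(n)]

# Hypothetical real-valued parameters (e.g. before binarization).
params = [0.73, -0.12, 0.0, -2.5, 1.1, -0.4, 0.05, -0.9, 0.3]
signs = binarize(params)
packed = pack_bits(signs)
# Size of the same parameters stored as little-endian float32 values.
float32_bytes = len(struct.pack(f"<{len(params)}f", *params))
print(signs)
print(len(packed), "bytes packed vs", float32_bytes, "bytes as float32")
```

Nine parameters fit in 2 bytes instead of 36. How the real-valued parameters are trained before binarization is outside the scope of this sketch.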

Fill-Up: Balancing Long-Tailed Data with Generative Models

no code implementations • 12 Jun 2023 • Joonghyuk Shin, Minguk Kang, Jaesik Park

Modern text-to-image synthesis models have achieved an exceptional level of photorealism, generating high-quality images from arbitrary text descriptions.

Instant Domain Augmentation for LiDAR Semantic Segmentation

no code implementations • CVPR 2023 • Kwonyoung Ryu, Soonmin Hwang, Jaesik Park

Despite the increasing popularity of LiDAR sensors, perception algorithms using 3D LiDAR data struggle with the 'sensor-bias problem'.

Scaling up GANs for Text-to-Image Synthesis

1 code implementation • CVPR 2023 • Minguk Kang, Jun-Yan Zhu, Richard Zhang, Jaesik Park, Eli Shechtman, Sylvain Paris, Taesung Park

From a technical standpoint, it also marked a drastic change in the favored architecture to design generative image models.

Ranked #18 on Image Generation on ImageNet 256x256

Spacetime Surface Regularization for Neural Dynamic Scene Reconstruction

no code implementations • ICCV 2023 • Jaesung Choe, Christopher Choy, Jaesik Park, In So Kweon, Anima Anandkumar

We propose an algorithm, 4DRegSDF, for the spacetime surface regularization to improve the fidelity of neural rendering and reconstruction in dynamic scenes.

Instance-Aware Image Completion

no code implementations • 22 Oct 2022 • Jinoh Cho, Minguk Kang, Vibhav Vineet, Jaesik Park

However, existing image completion methods tend to fill in the missing region with the surrounding texture instead of hallucinating a visual instance that suits the context of the scene.

Substructure-Atom Cross Attention for Molecular Representation Learning

no code implementations • 15 Oct 2022 • Jiye Kim, Seungbeom Lee, Dongwoo Kim, Sungsoo Ahn, Jaesik Park

Designing a neural network architecture for molecular representation is crucial for AI-driven drug discovery and molecule design.

Sequential Brick Assembly with Efficient Constraint Satisfaction

no code implementations • 3 Oct 2022 • Seokjun Ahn, Jungtaek Kim, Minsu Cho, Jaesik Park

The assembly problem is challenging since the number of possible structures increases exponentially with the number of available bricks, complicating the physical constraints to satisfy across bricks.

Style-Agnostic Reinforcement Learning

1 code implementation • 31 Aug 2022 • Juyong Lee, Seokjun Ahn, Jaesik Park

We present a novel method of learning style-agnostic representation using both style transfer and adversarial learning in the reinforcement learning framework.

PeRFception: Perception using Radiance Fields

1 code implementation • 24 Aug 2022 • Yoonwoo Jeong, Seungjoo Shin, Junha Lee, Christopher Choy, Animashree Anandkumar, Minsu Cho, Jaesik Park

The recent progress in implicit 3D representation, i.e., Neural Radiance Fields (NeRFs), has made accurate and photorealistic 3D reconstruction possible in a differentiable manner.

Learning to Register Unbalanced Point Pairs

no code implementations • 9 Jul 2022 • Kanghee Lee, Junha Lee, Jaesik Park

The proposed method first predicts subregions within the target point cloud that are likely to overlap with the query.

Learning Debiased Classifier with Biased Committee

1 code implementation • 22 Jun 2022 • Nayeong Kim, Sehyun Hwang, Sungsoo Ahn, Jaesik Park, Suha Kwak

We propose a new method for training debiased classifiers with no spurious attribute label.

StudioGAN: A Taxonomy and Benchmark of GANs for Image Synthesis

2 code implementations • 19 Jun 2022 • Minguk Kang, Joonghyuk Shin, Jaesik Park

Generative Adversarial Network (GAN) is one of the state-of-the-art generative models for realistic image synthesis.

A Rotated Hyperbolic Wrapped Normal Distribution for Hierarchical Representation Learning

1 code implementation • 25 May 2022 • Seunghyuk Cho, Juyong Lee, Jaesik Park, Dongwoo Kim

We present a rotated hyperbolic wrapped normal distribution (RoWN), a simple yet effective alteration of a hyperbolic wrapped normal distribution (HWN).

Learning to Assemble Geometric Shapes

1 code implementation • 24 May 2022 • Jinhwi Lee, Jungtaek Kim, Hyunsoo Chung, Jaesik Park, Minsu Cho

Assembling parts into an object is a combinatorial problem that arises in a variety of contexts in the real world and involves numerous applications in science and engineering.

3D Scene Painting via Semantic Image Synthesis

no code implementations • CVPR 2022 • Jaebong Jeong, Janghun Jo, Sunghyun Cho, Jaesik Park

Our approach takes a 3D scene with semantic class labels as input and trains a 3D scene painting network that synthesizes color values for the input 3D scene.

Fast Point Transformer

1 code implementation • CVPR 2022 • Chunghyun Park, Yoonwoo Jeong, Minsu Cho, Jaesik Park

The recent success of neural networks enables a better interpretation of 3D point clouds, but processing a large-scale 3D scene remains a challenging problem.

Ranked #24 on Semantic Segmentation on S3DIS

Revisiting LiDAR Registration and Reconstruction: A Range Image Perspective

no code implementations • 6 Dec 2021 • Wei Dong, Kwonyoung Ryu, Michael Kaess, Jaesik Park

We further collect a dataset of indoor and outdoor LiDAR scenes in the posed range image format.

Putting 3D Spatially Sparse Networks on a Diet

no code implementations • 2 Dec 2021 • Junha Lee, Christopher Choy, Jaesik Park

3D neural networks have become prevalent for many 3D vision tasks including object detection, segmentation, registration, and various perception tasks for 3D inputs.

Instance-wise Occlusion and Depth Orders in Natural Scenes

1 code implementation • CVPR 2022 • Hyunmin Lee, Jaesik Park

The dataset consists of 2.9M annotations of geometric orderings for class-labeled instances in 101K natural scenes.

Deep Point Cloud Reconstruction

no code implementations • ICLR 2022 • Jaesung Choe, Byeongin Joung, Francois Rameau, Jaesik Park, In So Kweon

In particular, we further improve the performance of the transformer with a newly proposed module called amplified positional encoding.

PointMixer: MLP-Mixer for Point Cloud Understanding

2 code implementations • 22 Nov 2021 • Jaesung Choe, Chunghyun Park, Francois Rameau, Jaesik Park, In So Kweon

MLP-Mixer has recently emerged as a challenger to CNNs and transformers.

Ranked #19 on Semantic Segmentation on S3DIS Area5

Self-Supervised Real-time Video Stabilization

no code implementations • 10 Nov 2021 • Jinsoo Choi, Jaesik Park, In So Kweon

Videos are a popular media form, and online video streaming has recently gained much popularity.

Rebooting ACGAN: Auxiliary Classifier GANs with Stable Training

1 code implementation • NeurIPS 2021 • Minguk Kang, Woohyeon Shim, Minsu Cho, Jaesik Park

On this foundation, we propose the Rebooted Auxiliary Classifier Generative Adversarial Network (ReACGAN).

Ranked #1 on Image Generation on CIFAR-10 (NFE metric)

Brick-by-Brick: Combinatorial Construction with Deep Reinforcement Learning

no code implementations • NeurIPS 2021 • Hyunsoo Chung, Jungtaek Kim, Boris Knyazev, Jinhwi Lee, Graham W. Taylor, Jaesik Park, Minsu Cho

Discovering a solution in a combinatorial space is prevalent in many real-world problems but it is also challenging due to diverse complex constraints and the vast number of possible combinations.

HUMBI: A Large Multiview Dataset of Human Body Expressions and Benchmark Challenge

no code implementations • 30 Sep 2021 • Jae Shin Yoon, Zhixuan Yu, Jaesik Park, Hyun Soo Park

We demonstrate that HUMBI is highly effective in learning and reconstructing a complete human model and is complementary to the existing datasets of human body expressions with limited views and subjects such as MPII-Gaze, Multi-PIE, Human3.6M, and Panoptic Studio datasets.

Efficient Point Transformer for Large-scale 3D Scene Understanding

no code implementations • 29 Sep 2021 • Chunghyun Park, Yoonwoo Jeong, Minsu Cho, Jaesik Park

Although sparse convolution is efficient and scalable for large 3D scenes, the quantization artifacts impair geometric details and degrade prediction accuracy.
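
A toy example shows how voxel quantization discards geometric detail. This is a minimal sketch that assumes each occupied voxel keeps only the centroid of its points. The functions `voxelize` and `quantize` are hypothetical and unrelated to the paper's code. At a coarse voxel size, three distinct nearby points collapse into one voxel, so their individual positions are lost.

```python
import math
from collections import defaultdict

def voxelize(points, voxel_size):
    """Group 3D points by integer voxel index floor(coord / voxel_size)."""
    voxels = defaultdict(list)
    for p in points:
        key = tuple(math.floor(c / voxel_size) for c in p)
        voxels[key].append(p)
    return voxels

def quantize(points, voxel_size):
    """Replace each occupied voxel by the centroid of the points inside it,
    i.e. keep a single representative per voxel."""
    out = []
    for pts in voxelize(points, voxel_size).values():
        n = len(pts)
        out.append(tuple(sum(p[i] for p in pts) / n for i in range(3)))
    return out

points = [(0.012, 0.023, 0.0), (0.047, 0.015, 0.0), (0.034, 0.068, 0.0),  # three nearby points
          (0.523, 0.105, 0.0)]                                           # one distant point
coarse = quantize(points, 0.1)   # nearby points merge into one voxel
fine = quantize(points, 0.01)    # every point keeps its own voxel
print(len(coarse), len(fine))
```

A smaller voxel size preserves more detail but produces more occupied voxels, which is the efficiency trade-off the paper targets.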

Deep Hough Voting for Robust Global Registration

no code implementations • ICCV 2021 • Junha Lee, SeungWook Kim, Minsu Cho, Jaesik Park

We then construct a set of triplets of correspondences to cast votes on the 6D Hough space, representing the transformation parameters in sparse tensors.
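
The voting step can be sketched as a sparse accumulator. This sketch assumes each correspondence triplet has already been converted into a candidate rigid transform, written as six numbers (three rotation parameters and three translation components). The function `hough_vote`, this parameterization, and the bin sizes are hypothetical choices for illustration, not the paper's pipeline. Only occupied bins are stored, which is what keeps a 6D Hough space tractable.

```python
from collections import Counter

def hough_vote(candidates, bin_sizes):
    """Accumulate votes for 6D transform candidates in a sparse grid.

    Each candidate (rx, ry, rz, tx, ty, tz) is quantized to one integer bin
    index per dimension. A Counter keyed by the index tuple stores only the
    occupied bins. Returns (most-voted bin index, its vote count).
    """
    votes = Counter()
    for cand in candidates:
        key = tuple(int(v // s) for v, s in zip(cand, bin_sizes))
        votes[key] += 1
    return votes.most_common(1)[0]

bin_sizes = (0.05, 0.05, 0.05, 0.1, 0.1, 0.1)  # radians for rotation, metres for translation
candidates = [
    (0.11, 0.01, 0.02, 1.02, 2.03, 0.51),    # three consistent (inlier) triplets
    (0.12, 0.02, 0.01, 1.04, 2.01, 0.53),
    (0.13, 0.03, 0.04, 1.01, 2.06, 0.55),
    (0.93, -0.41, 0.32, -3.0, 0.7, 4.2),     # outlier triplets vote elsewhere
    (-0.62, 0.21, 0.12, 2.5, -1.1, 0.0),
]
best = hough_vote(candidates, bin_sizes)
print(best)
```

The consistent candidates reinforce a single bin, while outliers scatter their votes across separate bins.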

Self-Calibrating Neural Radiance Fields

1 code implementation • ICCV 2021 • Yoonwoo Jeong, Seokjun Ahn, Christopher Choy, Animashree Anandkumar, Minsu Cho, Jaesik Park

We also propose a new geometric loss function, viz., projected ray distance loss, to incorporate geometric consistency for complex non-linear camera models.

Realistic Image Synthesis with Configurable 3D Scene Layouts

no code implementations • 23 Aug 2021 • Jaebong Jeong, Janghun Jo, Jingdong Wang, Sunghyun Cho, Jaesik Park

Our approach takes a 3D scene with semantic class labels as input and trains a 3D scene painting network that synthesizes color values for the input 3D scene.

Spatiotemporal Texture Reconstruction for Dynamic Objects Using a Single RGB-D Camera

no code implementations • 20 Aug 2021 • Hyomin Kim, Jungeon Kim, Hyeonseo Nam, Jaesik Park, Seungyong Lee

This paper presents an effective method for generating a spatiotemporal (time-varying) texture map for a dynamic object using a single RGB-D camera.

Deep Virtual Markers for Articulated 3D Shapes

1 code implementation • ICCV 2021 • Hyomin Kim, Jungeon Kim, Jaewon Kam, Jaesik Park, Seungyong Lee

We propose deep virtual markers, a framework for estimating dense and accurate positional information for various types of 3D data.

Fragment Relation Networks for Geometric Shape Assembly

no code implementations • NeurIPS Workshop LMCA 2020 • Jinhwi Lee, Jungtaek Kim, Hyunsoo Chung, Jaesik Park, Minsu Cho

Our model processes the candidate fragments in a permutation-equivariant manner and can generalize to cases with an arbitrary number of fragments and even with a different target object.

ContraGAN: Contrastive Learning for Conditional Image Generation

1 code implementation • NeurIPS 2020 • Minguk Kang, Jaesik Park

The discriminator of ContraGAN judges the authenticity of given samples and minimizes a contrastive objective to learn the relations between training images.

Ranked #11 on Conditional Image Generation on CIFAR-10

High-dimensional Convolutional Networks for Geometric Pattern Recognition

3 code implementations • CVPR 2020 • Christopher Choy, Junha Lee, Rene Ranftl, Jaesik Park, Vladlen Koltun

Many problems in science and engineering can be formulated in terms of geometric patterns in high-dimensional spaces.

Combinatorial 3D Shape Generation via Sequential Assembly

3 code implementations • 16 Apr 2020 • Jungtaek Kim, Hyunsoo Chung, Jinhwi Lee, Minsu Cho, Jaesik Park

To alleviate this consequence induced by a huge number of feasible combinations, we propose a combinatorial 3D shape generation framework.

Future Video Synthesis with Object Motion Prediction

1 code implementation • CVPR 2020 • Yue Wu, Rongrong Gao, Jaesik Park, Qifeng Chen

We present an approach to predict future video frames given a sequence of continuous video frames in the past.

Ranked #2 on Video Prediction on Cityscapes (using extra training data)

Ranked #2 on

Video Prediction

on Cityscapes

(using extra training data)

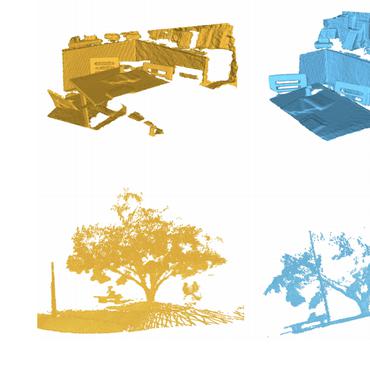

Fully Convolutional Geometric Features

1 code implementation • ICCV 2019 • Christopher Choy, Jaesik Park, Vladlen Koltun

Extracting geometric features from 3D scans or point clouds is the first step in applications such as registration, reconstruction, and tracking.

Ranked #1 on 3D Feature Matching on 3DMatch Benchmark

HUMBI: A Large Multiview Dataset of Human Body Expressions

1 code implementation • CVPR 2020 • Zhixuan Yu, Jae Shin Yoon, In Kyu Lee, Prashanth Venkatesh, Jaesik Park, Jihun Yu, Hyun Soo Park

This paper presents a new large multiview dataset called HUMBI for human body expressions with natural clothing.

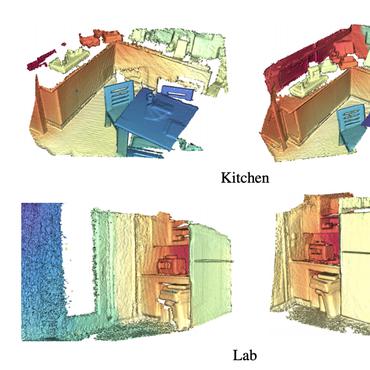

Tangent Convolutions for Dense Prediction in 3D

1 code implementation • CVPR 2018 • Maxim Tatarchenko, Jaesik Park, Vladlen Koltun, Qian-Yi Zhou

Our approach is based on tangent convolutions - a new construction for convolutional networks on 3D data.

Ranked #2 on Semantic Segmentation on S3DIS Area5 (Number of params metric)

Open3D: A Modern Library for 3D Data Processing

14 code implementations • 30 Jan 2018 • Qian-Yi Zhou, Jaesik Park, Vladlen Koltun

The Open3D frontend exposes a set of carefully selected data structures and algorithms in both C++ and Python.

Colored Point Cloud Registration Revisited

no code implementations • ICCV 2017 • Jaesik Park, Qian-Yi Zhou, Vladlen Koltun

We present an algorithm for tightly aligning two colored point clouds.

Fast Global Registration

1 code implementation • ECCV 2016 • Qian-Yi Zhou, Jaesik Park, Vladlen Koltun

Extensive experiments demonstrate that the presented approach matches or exceeds the accuracy of state-of-the-art global registration pipelines, while being at least an order of magnitude faster.

Refining Geometry from Depth Sensors using IR Shading Images

no code implementations • 18 Aug 2016 • Gyeongmin Choe, Jaesik Park, Yu-Wing Tai, In So Kweon

To resolve the ambiguity in our model between the normals and distances, we utilize an initial 3D mesh from the Kinect fusion and multi-view information to reliably estimate surface details that were not captured and reconstructed by the Kinect fusion.

High-Quality Depth From Uncalibrated Small Motion Clip

1 code implementation • CVPR 2016 • Hyowon Ha, Sunghoon Im, Jaesik Park, Hae-Gon Jeon, In So Kweon

We propose a novel approach that generates a high-quality depth map from a set of images captured with a small viewpoint variation, namely small motion clip.

Efficient and Robust Color Consistency for Community Photo Collections

no code implementations • CVPR 2016 • Jaesik Park, Yu-Wing Tai, Sudipta N. Sinha, In So Kweon

We present a robust low-rank matrix factorization method to estimate the unknown parameters of this model.

Vision System and Depth Processing for DRC-HUBO+

no code implementations • 21 Sep 2015 • Inwook Shim, Seunghak Shin, Yunsu Bok, Kyungdon Joo, Dong-Geol Choi, Joon-Young Lee, Jaesik Park, Jun-Ho Oh, In So Kweon

This paper presents a vision system and a depth processing algorithm for DRC-HUBO+, the winner of the 2015 DRC Finals.

Accurate Depth Map Estimation From a Lenslet Light Field Camera

no code implementations • CVPR 2015 • Hae-Gon Jeon, Jaesik Park, Gyeongmin Choe, Jinsun Park, Yunsu Bok, Yu-Wing Tai, In So Kweon

This paper introduces an algorithm that accurately estimates depth maps using a lenslet light field camera.

Multispectral Pedestrian Detection: Benchmark Dataset and Baseline

no code implementations • CVPR 2015 • Soonmin Hwang, Jaesik Park, Namil Kim, Yukyung Choi, In So Kweon

With this dataset, we introduce multispectral ACF, which is an extension of aggregated channel features (ACF) to simultaneously handle color-thermal image pairs.
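
The channel-aggregation idea behind ACF carries over directly to stacked color and thermal channels. The minimal sketch below assumes that the multispectral variant simply appends thermal channels to the color channels before sum-pooling. The functions `aggregate` and `multispectral_features` and the block size are hypothetical. The sketch also omits channel computation (gradient magnitude, orientation histograms), smoothing, and the boosted detector.

```python
def aggregate(channel, block):
    """Sum-pool a 2D channel (list of rows) over non-overlapping
    block x block cells, dropping incomplete cells at the border."""
    h, w = len(channel), len(channel[0])
    return [[sum(channel[y][x]
                 for y in range(by * block, by * block + block)
                 for x in range(bx * block, bx * block + block))
             for bx in range(w // block)]
            for by in range(h // block)]

def multispectral_features(color_channels, thermal_channels, block=2):
    """Stack color and thermal channels, aggregate each one, and
    flatten everything into a single feature vector."""
    feats = []
    for ch in list(color_channels) + list(thermal_channels):
        for row in aggregate(ch, block):
            feats.extend(row)
    return feats

# Toy 4x4 channels: a constant "color" channel and a ramp "thermal" channel.
color = [[1] * 4 for _ in range(4)]
thermal = [[4 * y + x for x in range(4)] for y in range(4)]
feats = multispectral_features([color], [thermal], block=2)
print(feats)
```

Because every channel is pooled on the same grid, each feature index refers to the same image region in both modalities. A downstream classifier can therefore combine color and thermal evidence at that location.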

Exploiting Shading Cues in Kinect IR Images for Geometry Refinement

no code implementations • CVPR 2014 • Gyeongmin Choe, Jaesik Park, Yu-Wing Tai, In So Kweon

To resolve ambiguity in our model between normals and distance, we utilize an initial 3D mesh from the Kinect fusion and multi-view information to reliably estimate surface details that were not reconstructed by the Kinect fusion.

Calibrating a Non-isotropic Near Point Light Source using a Plane

no code implementations • CVPR 2014 • Jaesik Park, Sudipta N. Sinha, Yasuyuki Matsushita, Yu-Wing Tai, In So Kweon

We show that a non-isotropic near point light source rigidly attached to a camera can be calibrated using multiple images of a weakly textured planar scene.