Search Results for author: Wenqing Chen

Found 20 papers, 1 paper with code

Exploring Logically Dependent Multi-task Learning with Causal Inference

no code implementations • EMNLP 2020 • Wenqing Chen, Jidong Tian, Liqiang Xiao, Hao He, Yaohui Jin

In the field of causal inference, GS in our model is essentially a counterfactual reasoning process, trying to estimate the causal effect between tasks and utilize it to improve MTL.

Diagnosing the First-Order Logical Reasoning Ability Through LogicNLI

no code implementations • EMNLP 2021 • Jidong Tian, Yitian Li, Wenqing Chen, Liqiang Xiao, Hao He, Yaohui Jin

Recently, language models (LMs) have achieved significant performance on many NLU tasks, which has spurred widespread interest for their possible applications in the scientific and social area.

To What Extent Do Natural Language Understanding Datasets Correlate to Logical Reasoning? A Method for Diagnosing Logical Reasoning.

no code implementations • COLING 2022 • Yitian Li, Jidong Tian, Wenqing Chen, Caoyun Fan, Hao He, Yaohui Jin

In this paper, we propose a systematic method to diagnose the correlations between an NLU dataset and a specific skill, and then take a fundamental reasoning skill, logical reasoning, as an example for analysis.

LLM-Guided Multi-View Hypergraph Learning for Human-Centric Explainable Recommendation

no code implementations • 16 Jan 2024 • Zhixuan Chu, Yan Wang, Qing Cui, Longfei Li, Wenqing Chen, Zhan Qin, Kui Ren

As personalized recommendation systems become vital in the age of information overload, traditional methods relying solely on historical user interactions often fail to fully capture the multifaceted nature of human interests.

Chain-of-Thought Tuning: Masked Language Models can also Think Step By Step in Natural Language Understanding

no code implementations • 18 Oct 2023 • Caoyun Fan, Jidong Tian, Yitian Li, Wenqing Chen, Hao He, Yaohui Jin

From the perspective of CoT, CoTT's two-step framework enables MLMs to implement task decomposition; CoTT's prompt tuning allows intermediate steps to be used in natural language form.

Accurate Use of Label Dependency in Multi-Label Text Classification Through the Lens of Causality

no code implementations • 11 Oct 2023 • Caoyun Fan, Wenqing Chen, Jidong Tian, Yitian Li, Hao He, Yaohui Jin

In this study, we attribute the bias to the model's misuse of label dependency, i.e., the model tends to utilize the correlation shortcut in label dependency rather than fusing text information and label dependency for prediction.

Unlock the Potential of Counterfactually-Augmented Data in Out-Of-Distribution Generalization

no code implementations • 10 Oct 2023 • Caoyun Fan, Wenqing Chen, Jidong Tian, Yitian Li, Hao He, Yaohui Jin

Counterfactually-Augmented Data (CAD) -- minimal editing of sentences to flip the corresponding labels -- has the potential to improve the Out-Of-Distribution (OOD) generalization capability of language models, as CAD induces language models to exploit domain-independent causal features and exclude spurious correlations.

Improving the Out-Of-Distribution Generalization Capability of Language Models: Counterfactually-Augmented Data is not Enough

no code implementations • 18 Feb 2023 • Caoyun Fan, Wenqing Chen, Jidong Tian, Yitian Li, Hao He, Yaohui Jin

Counterfactually-Augmented Data (CAD) has the potential to improve language models' Out-Of-Distribution (OOD) generalization capability, as CAD induces language models to exploit causal features and exclude spurious correlations.

MaxGNR: A Dynamic Weight Strategy via Maximizing Gradient-to-Noise Ratio for Multi-Task Learning

no code implementations • 18 Feb 2023 • Caoyun Fan, Wenqing Chen, Jidong Tian, Yitian Li, Hao He, Yaohui Jin

A series of studies point out that too much gradient noise would lead to performance degradation in STL; however, in the MTL scenario, Inter-Task Gradient Noise (ITGN) is an additional source of gradient noise for each task, which can also affect the optimization process.

De-Confounded Variational Encoder-Decoder for Logical Table-to-Text Generation

no code implementations • ACL 2021 • Wenqing Chen, Jidong Tian, Yitian Li, Hao He, Yaohui Jin

The task remains challenging, as deep learning models often generate linguistically fluent but logically inconsistent text.

Dependent Multi-Task Learning with Causal Intervention for Image Captioning

no code implementations • 18 May 2021 • Wenqing Chen, Jidong Tian, Caoyun Fan, Hao He, Yaohui Jin

The intermediate task would help the model better understand the visual features and thus alleviate the content inconsistency problem.

Evidence of topological nodal lines and surface states in the centrosymmetric superconductor SnTaS2

no code implementations • 7 Dec 2020 • Wenqing Chen, Lulu Liu, Wentao Yang, Dong Chen, Zhengtai Liu, Yaobo Huang, Tong Zhang, Haijun Zhang, Zhonghao Liu, D. W. Shen

Utilizing angle-resolved photoemission spectroscopy and first-principles calculations, here, we demonstrate the existence of topological nodal-line states and drumheadlike surface states in centrosymmetric superconductor SnTaS2, which is a type-II superconductor with a critical transition temperature of about 3 K. The valence bands from Ta 5d orbitals and the conduction bands from Sn 5p orbitals cross each other, forming two nodal lines in the vicinity of the Fermi energy without the inclusion of spin-orbit coupling (SOC), protected by the spatial-inversion symmetry and time-reversal symmetry.

Superconductivity

A Semantically Consistent and Syntactically Variational Encoder-Decoder Framework for Paraphrase Generation

no code implementations • COLING 2020 • Wenqing Chen, Jidong Tian, Liqiang Xiao, Hao He, Yaohui Jin

In this paper, we propose a semantically consistent and syntactically variational encoder-decoder framework, which uses adversarial learning to ensure the syntactic latent variable be semantic-free.

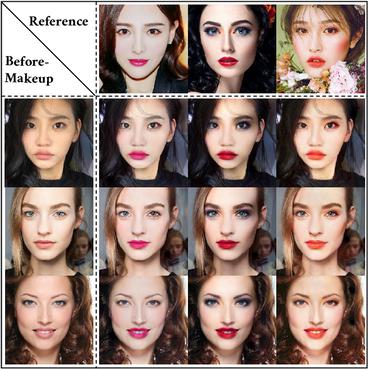

Disentangled Makeup Transfer with Generative Adversarial Network

1 code implementation • 2 Jul 2019 • Honglun Zhang, Wenqing Chen, Hao He, Yaohui Jin

Facial makeup transfer is a widely-used technology that aims to transfer the makeup style from a reference face image to a non-makeup face.

DS-VIO: Robust and Efficient Stereo Visual Inertial Odometry based on Dual Stage EKF

no code implementations • 2 May 2019 • Xiaogang Xiong, Wenqing Chen, Zhichao Liu, Qiang Shen

This paper presents a dual stage EKF (Extended Kalman Filter)-based algorithm for the real-time and robust stereo VIO (visual inertial odometry).

Show, Attend and Translate: Unpaired Multi-Domain Image-to-Image Translation with Visual Attention

no code implementations • 19 Nov 2018 • Honglun Zhang, Wenqing Chen, Jidong Tian, Yongkun Wang, Yaohui Jin

Recently, unpaired multi-domain image-to-image translation has attracted great interest and made remarkable progress, where a label vector is utilized to indicate multi-domain information.

MCapsNet: Capsule Network for Text with Multi-Task Learning

no code implementations • EMNLP 2018 • Liqiang Xiao, Honglun Zhang, Wenqing Chen, Yongkun Wang, Yaohui Jin

Multi-task learning has an ability to share the knowledge among related tasks and implicitly increase the training data.

Learning What to Share: Leaky Multi-Task Network for Text Classification

no code implementations • COLING 2018 • Liqiang Xiao, Honglun Zhang, Wenqing Chen, Yongkun Wang, Yaohui Jin

Neural network based multi-task learning has achieved great success on many NLP problems, which focuses on sharing knowledge among tasks by linking some layers to enhance the performance.

Gated Multi-Task Network for Text Classification

no code implementations • NAACL 2018 • Liqiang Xiao, Honglun Zhang, Wenqing Chen

This success can be largely attributed to the feature sharing by fusing some layers among tasks.

Multi-Task Label Embedding for Text Classification

no code implementations • EMNLP 2018 • Honglun Zhang, Liqiang Xiao, Wenqing Chen, Yongkun Wang, Yaohui Jin

Multi-task learning in text classification leverages implicit correlations among related tasks to extract common features and yield performance gains.