Content4All

Introduced by Camgoz et al. in Content4All Open Research Sign Language Translation Datasets.

Content4All is a collection of six open research datasets aimed at automatic sign language translation research.

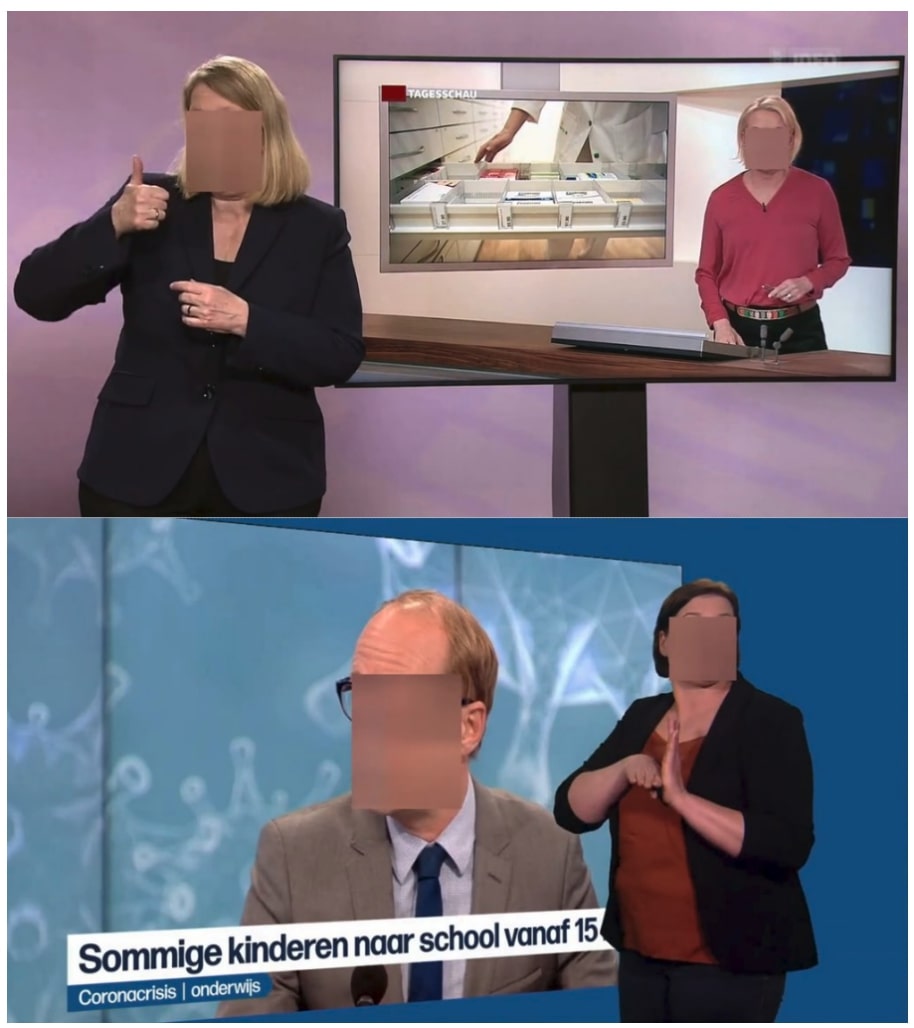

Sign language interpretation footage was captured by the broadcast partners SWISSTXT and VRT. Raw footage was anonymized and processed to extract 2D and 3D human body pose information. From the roughly 190 hours of processed data, three base (RAW) datasets were released, namely 1) SWISSTXT-RAW-NEWS, 2) SWISSTXT-RAW-WEATHER and 3) VRT-RAW. Each dataset contains sign language interpretations, corresponding spoken language subtitles, and extracted 2D/3D human pose information.

A subset of each base dataset was selected and manually annotated to align spoken language subtitles with sign language interpretations. The subsets were chosen to resemble the benchmark Phoenix 2014T dataset. Our aim is for these three new annotated public datasets, namely 4) SWISSTXT-NEWS, 5) SWISSTXT-WEATHER and 6) VRT-NEWS, to become benchmarks and underpin future research as the field moves closer to translation and production on larger domains of discourse.
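The relationship between the three RAW datasets and their annotated subsets can be sketched as a small lookup table. This is a minimal illustration assuming only what the description states (two broadcasters, one annotated subset per RAW dataset); the class `Content4AllDataset`, its fields, and the helper `annotated_subset` are hypothetical and do not reflect the actual release format or any official loader.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical record describing one of the six datasets.
# Field names are illustrative, not part of the official release.
@dataclass(frozen=True)
class Content4AllDataset:
    name: str
    broadcaster: str             # "SWISSTXT" or "VRT"
    annotated: bool              # True for the manually aligned subsets
    base: Optional[str] = None   # RAW dataset an annotated subset was drawn from


DATASETS = [
    # Base (RAW) datasets: interpretations, subtitles, 2D/3D pose.
    Content4AllDataset("SWISSTXT-RAW-NEWS", "SWISSTXT", False),
    Content4AllDataset("SWISSTXT-RAW-WEATHER", "SWISSTXT", False),
    Content4AllDataset("VRT-RAW", "VRT", False),
    # Annotated subsets, one per base dataset.
    Content4AllDataset("SWISSTXT-NEWS", "SWISSTXT", True, "SWISSTXT-RAW-NEWS"),
    Content4AllDataset("SWISSTXT-WEATHER", "SWISSTXT", True, "SWISSTXT-RAW-WEATHER"),
    Content4AllDataset("VRT-NEWS", "VRT", True, "VRT-RAW"),
]


def annotated_subset(base_name: str) -> Content4AllDataset:
    """Return the manually annotated subset drawn from the given RAW dataset."""
    for d in DATASETS:
        if d.annotated and d.base == base_name:
            return d
    raise KeyError(base_name)


if __name__ == "__main__":
    for d in DATASETS:
        if not d.annotated:
            print(d.name, "->", annotated_subset(d.name).name)
```

Keeping the base name on each annotated entry makes the one-to-one RAW-to-subset pairing explicit, so a lookup such as `annotated_subset("VRT-RAW")` yields the `VRT-NEWS` entry.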
