WikiHop

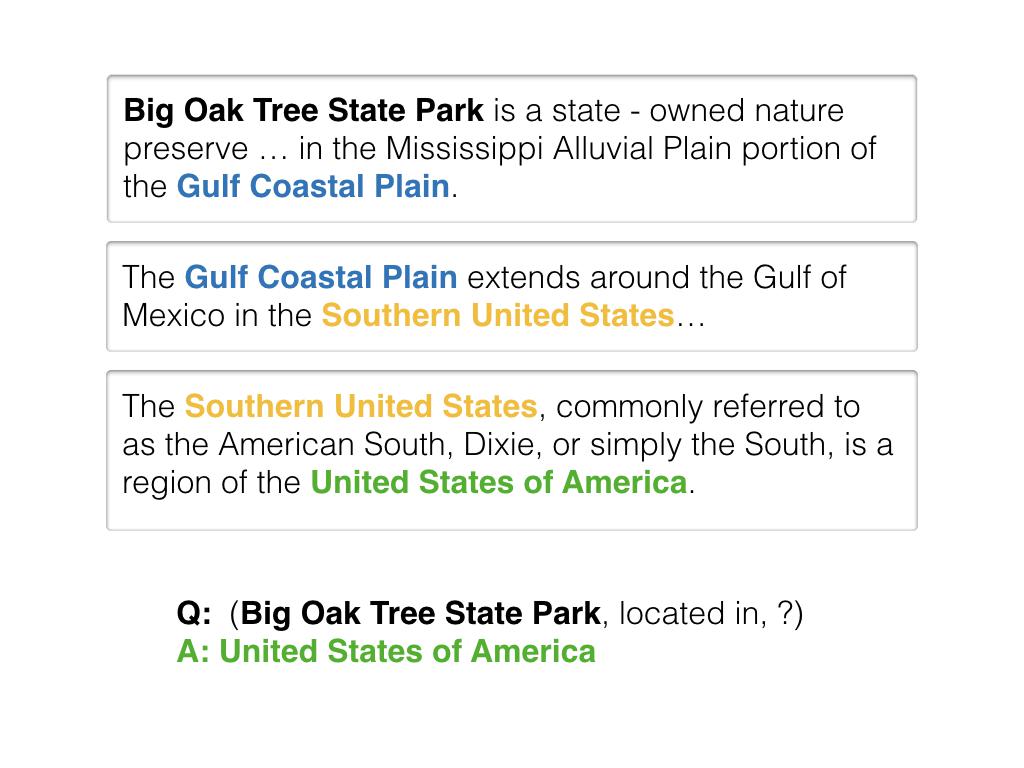

Introduced by Welbl et al. in Constructing Datasets for Multi-hop Reading Comprehension Across Documents.

WikiHop is a multi-hop question-answering dataset. Its queries are built from entities and relations in WikiData, while its supporting documents come from WikiReading. To construct each sample, a bipartite graph connecting entities and documents is first built, and the answer to each query is located by traversing this graph. The candidate set consists of entities that are type-consistent with the answer and share the same query relation as the answer. WikiHop is therefore a multiple-choice reading comprehension dataset. The training set contains about 43K samples, the development set about 5K, and the test set about 2.5K; the test set is not publicly released. The task is to predict the correct answer from the candidates, given a query and multiple supporting documents.
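The graph-based construction described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the function name `build_sample` and its inputs (`doc_entities`, a mapping from document id to the entity names it mentions, and `entity_type`, a mapping from entity to a type label) are hypothetical. The sketch keeps only the type-consistency filter for candidates and omits the WikiData relation check.

```python
from collections import deque


def build_sample(subject, answer, doc_entities, entity_type, max_hops=3):
    """Sketch of WikiHop-style sample construction (hypothetical helper).

    subject      -- query subject entity where the traversal starts
    answer       -- gold answer entity; the sample is kept only if reachable
    doc_entities -- assumed input: dict of document id -> set of entity names
    entity_type  -- assumed input: dict of entity name -> type label
    max_hops     -- maximum number of entity -> document -> entity hops
    """
    # Invert the bipartite graph: entity -> documents that mention it.
    entity_docs = {}
    for doc, ents in doc_entities.items():
        for ent in ents:
            entity_docs.setdefault(ent, set()).add(doc)

    # Breadth-first traversal alternating entity -> document -> entity.
    seen_docs, seen_ents = set(), {subject}
    frontier = deque([(subject, 0)])
    while frontier:
        ent, hops = frontier.popleft()
        if hops == max_hops:
            continue
        for doc in entity_docs.get(ent, ()):
            if doc in seen_docs:
                continue
            seen_docs.add(doc)
            for nxt in doc_entities[doc]:
                if nxt not in seen_ents:
                    seen_ents.add(nxt)
                    frontier.append((nxt, hops + 1))

    if answer not in seen_ents:
        return None  # answer unreachable: discard the sample

    # Candidates: reachable entities with the same type as the answer
    # (simplified; the relation-sharing check is omitted here).
    answer_type = entity_type.get(answer)
    candidates = sorted(e for e in seen_ents if entity_type.get(e) == answer_type)
    return {"supports": sorted(seen_docs), "candidates": candidates, "answer": answer}
```

In this sketch, every reachable entity of the answer's type other than the answer itself becomes a distractor, and the documents visited on the way form the support set. If the answer cannot be reached within `max_hops`, the sample is dropped.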

The dataset includes a masked variant, where all candidates and their mentions in the supporting documents are replaced by random but consistent placeholder tokens.
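The masking step can be illustrated with a minimal sketch. The function `mask_sample` and the `___MASK{n}___` token format are assumptions made for illustration. The sketch draws a random placeholder for each candidate and replaces every mention of that candidate in the supporting documents with the same placeholder. Matching is case-insensitive, respects word boundaries, and tries longer names first.

```python
import random
import re


def mask_sample(candidates, answer, supports, seed=None):
    """Replace candidates and their mentions with consistent random placeholders.

    Hypothetical helper; the "___MASK{n}___" token format is an assumption.
    Candidate names are looked up in lowercase because matching ignores case.
    """
    rng = random.Random(seed)
    # Distinct random ids, so the placeholder number leaks nothing about the entity.
    ids = rng.sample(range(max(100, len(candidates))), len(candidates))
    token = {c.lower(): f"___MASK{i}___" for c, i in zip(candidates, ids)}

    # Try longer names first so "new york city" is not split by "new york".
    names = sorted(token, key=len, reverse=True)
    pattern = re.compile(
        "|".join(r"\b" + re.escape(n) + r"\b" for n in names), re.IGNORECASE
    )

    # Every mention of the same candidate maps to the same placeholder.
    masked_supports = [
        pattern.sub(lambda m: token[m.group(0).lower()], doc) for doc in supports
    ]
    masked_candidates = [token[c.lower()] for c in candidates]
    return masked_candidates, token[answer.lower()], masked_supports
```

Because the placeholder ids are sampled at random for each sample, a model cannot rely on the surface form of an entity name and has to locate the answer from the context around its mentions.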

Source: Multi-hop Reading Comprehension across Multiple Documents by Reasoning over Heterogeneous Graphs
