Feature Pyramid Blocks

Balanced Feature Pyramid

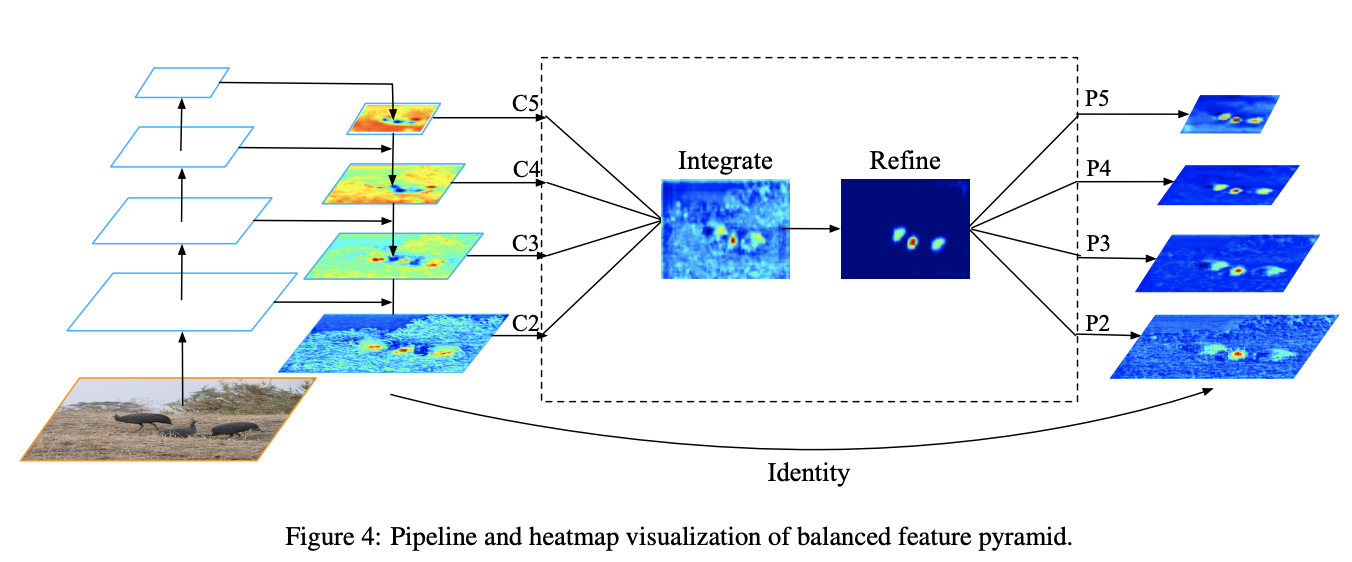

Introduced by Pang et al. in Libra R-CNN: Towards Balanced Learning for Object Detection

Balanced Feature Pyramid (BFP) is a feature pyramid module. Unlike approaches such as FPN, which integrate multi-level features through lateral connections, BFP strengthens the multi-level features using the same deeply integrated, balanced semantic features. The pipeline is shown in the figure above. It consists of four steps: rescaling, integrating, refining, and strengthening.

Features at resolution level $l$ are denoted $C_{l}$, the number of multi-level features is denoted $L$, and the indices of the lowest and highest levels involved are denoted $l_{min}$ and $l_{max}$. In the figure, $C_{2}$ has the highest resolution. To integrate multi-level features while preserving their semantic hierarchy, the multi-level features {$C_{2}, C_{3}, C_{4}, C_{5}$} are first resized to an intermediate size, namely that of $C_{4}$, using interpolation for the lower-resolution levels and max-pooling for the higher-resolution levels. Once the features are rescaled, the balanced semantic features are obtained by simple averaging:

$$ C = \frac{1}{L}\sum^{l_{max}}_{l=l_{min}}C_{l} $$
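The rescaling and integrating steps can be sketched in NumPy as follows. This is a minimal illustration rather than the authors' implementation: the helper names (`resize_nearest`, `max_pool_to`, `integrate`) are hypothetical, nearest-neighbour upsampling stands in for whichever interpolation is used, and feature maps are assumed to be `(channels, height, width)` arrays whose sizes are integer multiples of one another.

```python
import numpy as np

def resize_nearest(feat, out_h, out_w):
    """Upsample a (C, H, W) map by nearest-neighbour indexing (assumed interpolation)."""
    _, h, w = feat.shape
    rows = np.arange(out_h) * h // out_h
    cols = np.arange(out_w) * w // out_w
    return feat[:, rows][:, :, cols]

def max_pool_to(feat, out_h, out_w):
    """Downsample a (C, H, W) map with non-overlapping max-pooling.

    Assumes H and W are integer multiples of out_h and out_w.
    """
    c, h, w = feat.shape
    return feat.reshape(c, out_h, h // out_h, out_w, w // out_w).max(axis=(2, 4))

def integrate(features, ref_index):
    """Rescale every level to the reference level's size and average them."""
    ref_h, ref_w = features[ref_index].shape[1:]
    rescaled = []
    for f in features:
        if f.shape[1] > ref_h:      # higher resolution: max-pool down
            rescaled.append(max_pool_to(f, ref_h, ref_w))
        elif f.shape[1] < ref_h:    # lower resolution: interpolate up
            rescaled.append(resize_nearest(f, ref_h, ref_w))
        else:
            rescaled.append(f)
    # C = (1 / L) * sum over levels of the rescaled C_l
    return sum(rescaled) / len(rescaled)

# Toy C2..C5 with constant values 1..4 and halving resolution.
levels = [np.full((2, s, s), v) for s, v in [(32, 1.0), (16, 2.0), (8, 3.0), (4, 4.0)]]
balanced = integrate(levels, ref_index=2)  # index 2 corresponds to C4
```

With constant toy inputs, every entry of `balanced` is the mean of the four level values, which makes it easy to check that each level contributes equally.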

The obtained features are then rescaled using the same procedure in reverse to strengthen the original features. Each resolution receives equal information from the others in this procedure, which contains no learnable parameters. The authors observe an improvement with this nonparametric method, which they take as evidence that the information flow is effective.
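A minimal sketch of the strengthening step is given below. It assumes that the rescaled balanced features are combined with each original level by element-wise addition; the text above only says that they strengthen the original features, so the addition is an assumption of this sketch. The helper names `rescale` and `strengthen` are hypothetical, and nearest-neighbour upsampling again stands in for the interpolation.

```python
import numpy as np

def rescale(feat, out_h, out_w):
    """Rescale a (C, H, W) map: max-pool when shrinking, nearest-neighbour when growing.

    Assumes the sizes are integer multiples of each other.
    """
    c, h, w = feat.shape
    if h > out_h:
        return feat.reshape(c, out_h, h // out_h, out_w, w // out_w).max(axis=(2, 4))
    if h < out_h:
        rows = np.arange(out_h) * h // out_h
        cols = np.arange(out_w) * w // out_w
        return feat[:, rows][:, :, cols]
    return feat

def strengthen(features, balanced):
    """Send the balanced map back to every level's size and add it to that level.

    The element-wise addition is an assumption of this sketch.
    """
    return [f + rescale(balanced, f.shape[1], f.shape[2]) for f in features]

# Toy C2..C5 with constant values 1..4, and a constant balanced map at C4's size.
levels = [np.full((2, s, s), v) for s, v in [(32, 1.0), (16, 2.0), (8, 3.0), (4, 4.0)]]
balanced = np.full((2, 8, 8), 2.5)
outputs = strengthen(levels, balanced)  # plays the role of P2..P5
```

Each output keeps the shape of its input level, and no step involves learnable weights, which mirrors the parameter-free nature of the procedure.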

The balanced semantic features can be further refined to be more discriminative. The authors found that refining directly with convolutions and refining with a non-local module both work well, but that the non-local module is more stable. Embedded Gaussian non-local attention is therefore used by default. The refining step enhances the integrated features and further improves the results.
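To make the refining step concrete, here is a rough NumPy sketch of an embedded Gaussian non-local block applied to a single balanced feature map. It is illustrative only: the weight matrices `w_theta`, `w_phi`, `w_g`, and `w_out` are hypothetical stand-ins for learned 1x1 convolutions, the reduced channel width is arbitrary, and normalisation layers are omitted.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def non_local_embedded_gaussian(feat, w_theta, w_phi, w_g, w_out):
    """Refine a (C, H, W) map with embedded Gaussian non-local attention.

    The pairwise affinity exp(theta_i . phi_j), normalised over j,
    is equivalent to a softmax over all positions.
    """
    c, h, w = feat.shape
    x = feat.reshape(c, h * w).T           # (N, C), one row per position
    theta = x @ w_theta                    # (N, C') query embedding
    phi = x @ w_phi                        # (N, C') key embedding
    g = x @ w_g                            # (N, C') value embedding
    attn = softmax(theta @ phi.T, axis=1)  # (N, N) affinities, rows sum to 1
    y = attn @ g                           # aggregate values from all positions
    z = y @ w_out + x                      # project back and add the residual
    return z.T.reshape(c, h, w)

rng = np.random.default_rng(0)
balanced = rng.standard_normal((4, 8, 8))
# Hypothetical weights: 4 input channels reduced to 2 inside the block.
w_theta, w_phi, w_g = (rng.standard_normal((4, 2)) * 0.1 for _ in range(3))
w_out = rng.standard_normal((2, 4)) * 0.1
refined = non_local_embedded_gaussian(balanced, w_theta, w_phi, w_g, w_out)
```

Because of the residual connection, setting `w_out` to zeros returns the input unchanged, so the block can start as an identity mapping and learn its refinement gradually.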

With this method, features from low level to high level are aggregated at the same time. The outputs {$P_{2}, P_{3}, P_{4}, P_{5}$} are used for object detection following the same pipeline as in FPN.

Source: Libra R-CNN: Towards Balanced Learning for Object Detection

Tasks

| Task | Papers | Share |
|---|---|---|
| Object Detection | 2 | 28.57% |
| Nutrition | 1 | 14.29% |
| Ensemble Learning | 1 | 14.29% |
| Medical Object Detection | 1 | 14.29% |
| Food recommendation | 1 | 14.29% |
| Object Localization | 1 | 14.29% |

Components

| Component | Type |
|---|---|
| Balanced L1 Loss | Loss Functions |
| Embedded Gaussian Affinity | Affinity Functions |
| Max Pooling | Pooling Operations |
| Non-Local Block | Image Model Blocks |