Initialization

SkipInit

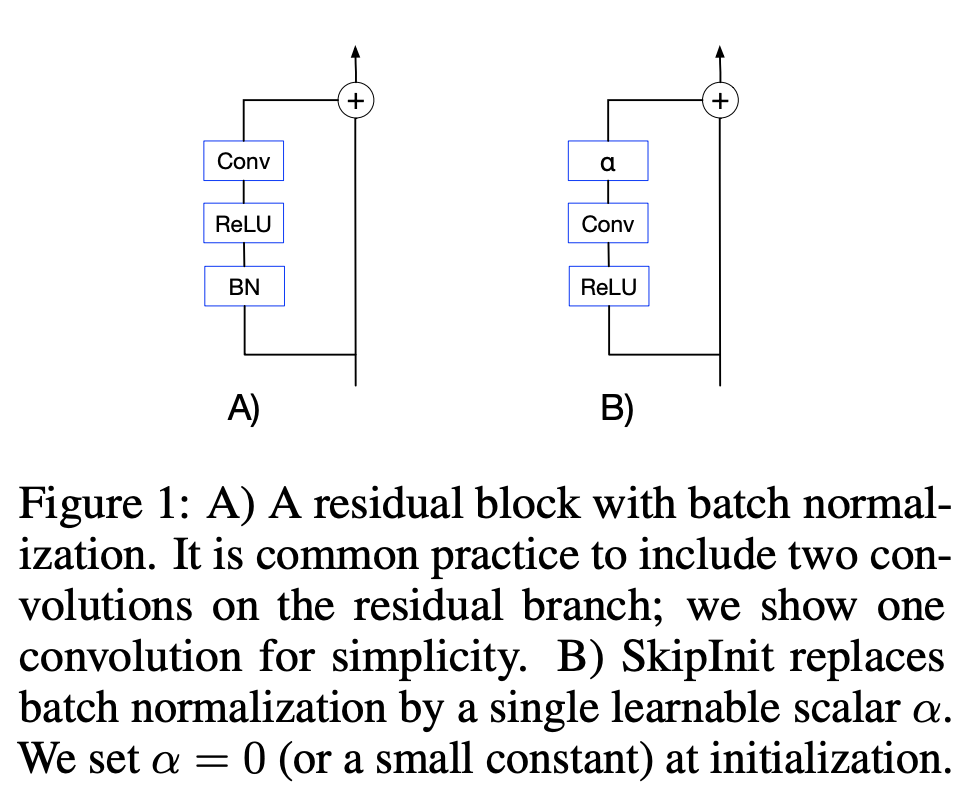

Introduced by De et al. in Batch Normalization Biases Residual Blocks Towards the Identity Function in Deep Networks

SkipInit is a method that aims to allow normalization-free training of neural networks by downscaling residual branches at initialization. This is achieved by including a learnable scalar multiplier at the end of each residual branch, initialized to $\alpha$.
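A minimal sketch of such a block in plain Python may help make the idea concrete. It is not the authors' implementation: the class name `SkipInitBlock`, the single linear + ReLU residual branch, the Gaussian weight scale, and the choice of zero as the initial value are all assumptions made for illustration.

```python
import math
import random


class SkipInitBlock:
    """Residual block computing x + multiplier * f(x) (illustrative sketch)."""

    def __init__(self, width, alpha=0.0, seed=0):
        rng = random.Random(seed)
        # Assumed branch: one linear layer followed by ReLU, with
        # He-style Gaussian weights. Any unnormalized branch could be used.
        std = math.sqrt(2.0 / width)
        self.weight = [[rng.gauss(0.0, std) for _ in range(width)]
                       for _ in range(width)]
        # The scalar at the end of the residual branch, initialized to alpha.
        # In a real network it would be trained together with the weights.
        self.multiplier = alpha

    def branch(self, x):
        # f(x) = ReLU(W x)
        return [max(0.0, sum(w * v for w, v in zip(row, x)))
                for row in self.weight]

    def forward(self, x):
        fx = self.branch(x)
        return [xi + self.multiplier * fi for xi, fi in zip(x, fx)]


x = [1.0, -2.0, 0.5, 3.0]
block = SkipInitBlock(width=4, alpha=0.0)
# With the multiplier at zero the block is exactly the identity map.
assert block.forward(x) == x
```

With the multiplier at zero, every block passes its input through unchanged, so a stack of such blocks starts training as the identity function regardless of depth.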

The method is motivated by theoretical findings that, at initialization, batch normalization downscales the hidden activations on the residual branch by a factor on the order of the square root of the network depth. As the depth of a residual network increases, the residual blocks are therefore increasingly dominated by the skip connection. This drives the functions computed by residual blocks closer to the identity, which preserves signal propagation and ensures well-behaved gradients. SkipInit aims to obtain the same property through an initialization strategy rather than a normalization strategy.
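The square-root scaling can be illustrated with a toy simulation. The sketch below makes several simplifying assumptions that are not from the source: the residual branch is a single random linear map, normalization is applied across the features of one vector as a stand-in for batch normalization, and the function name `simulate_skip_variance` is hypothetical. Under these assumptions each block adds roughly unit variance to the skip path, so the standard deviation that normalization divides out grows roughly like the square root of the block index.

```python
import math
import random


def variance(v):
    mean = sum(v) / len(v)
    return sum((a - mean) ** 2 for a in v) / len(v)


def normalize(v):
    # Zero mean, unit variance across features (stand-in for batch norm).
    mean = sum(v) / len(v)
    std = math.sqrt(variance(v))
    return [(a - mean) / std for a in v]


def simulate_skip_variance(width=256, depth=16, seed=0):
    """Return skip-path variance before each block and after the last one."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(width)]
    history = [variance(x)]
    scale = 1.0 / math.sqrt(width)  # keeps branch output near unit variance
    for _ in range(depth):
        z = normalize(x)  # branch input is rescaled by the skip-path std
        # Fresh random linear branch for every block.
        branch = [sum(rng.gauss(0.0, scale) * zj for zj in z)
                  for _ in range(width)]
        x = [xi + bi for xi, bi in zip(x, branch)]
        history.append(variance(x))
    return history


history = simulate_skip_variance()
for block_index in (0, 4, 16):
    skip_std = math.sqrt(history[block_index])
    print(f"block {block_index:2d}: skip std ~ {skip_std:.2f}, "
          f"sqrt(block + 1) = {math.sqrt(block_index + 1):.2f}")
```

The printed skip-path standard deviation should track `sqrt(block + 1)` only approximately, since the run uses a single random draw at finite width.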

Source: Batch Normalization Biases Residual Blocks Towards the Identity Function in Deep Networks
