EV-Action: Electromyography-Vision Multi-Modal Action Dataset

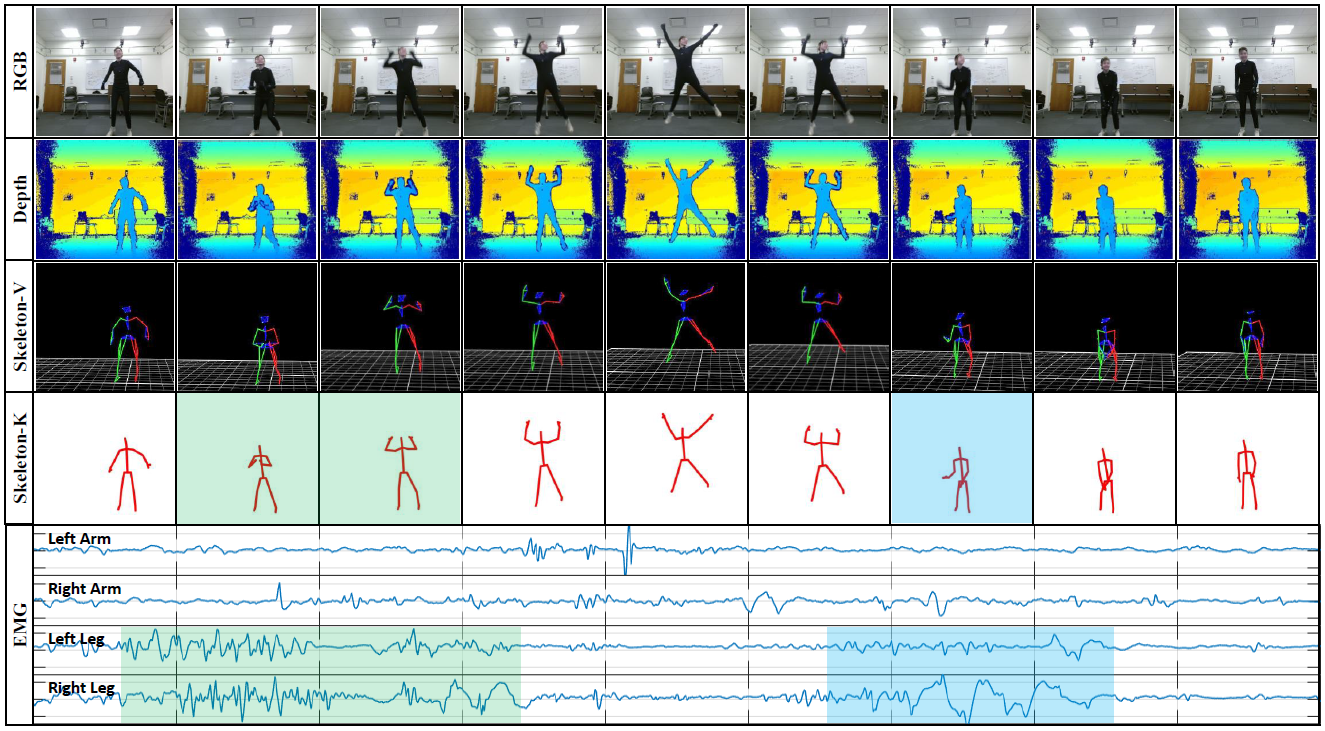

Multi-modal human action analysis is a critical and attractive research topic. However, the majority of the existing datasets only provide visual modalities (i.e., RGB, depth and skeleton). To make up this, we introduce a new, large-scale EV-Action dataset in this work, which consists of RGB, depth, electromyography (EMG), and two skeleton modalities. Compared with the conventional datasets, EV-Action dataset has two major improvements: (1) we deploy a motion capturing system to obtain high quality skeleton modality, which provides more comprehensive motion information including skeleton, trajectory, acceleration with higher accuracy, sampling frequency, and more skeleton markers. (2) we introduce an EMG modality which is usually used as an effective indicator in the biomechanics area, also it has yet to be well explored in motion related research. To the best of our knowledge, this is the first action dataset with EMG modality. The details of EV-Action dataset are clarified, meanwhile, a simple yet effective framework for EMG-based action recognition is proposed. Moreover, state-of-the-art baselines are applied to evaluate the effectiveness of all the modalities. The obtained result clearly shows the validity of EMG modality in human action analysis tasks. We hope this dataset can make significant contributions to human motion analysis, computer vision, machine learning, biomechanics, and other interdisciplinary fields.

PDF Abstract