Neural Replicator Dynamics

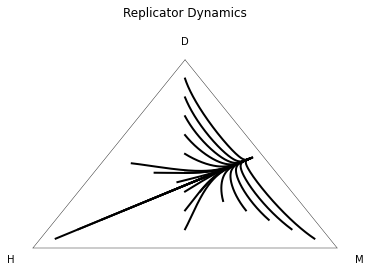

Policy gradient and actor-critic algorithms form the basis of many commonly used training techniques in deep reinforcement learning. Using these algorithms in multiagent environments poses problems such as nonstationarity and instability. In this paper, we first demonstrate that standard softmax-based policy gradient can be prone to poor performance in the presence of even the most benign nonstationarity. By contrast, it is known that the replicator dynamics, a well-studied model from evolutionary game theory, eliminates dominated strategies and exhibits convergence of the time-averaged trajectories to interior Nash equilibria in zero-sum games. Thus, using the replicator dynamics as a foundation, we derive an elegant one-line change to policy gradient methods that simply bypasses the gradient step through the softmax, yielding a new algorithm titled Neural Replicator Dynamics (NeuRD). NeuRD reduces to the exponential weights/Hedge algorithm in the single-state all-actions case. Additionally, NeuRD has formal equivalence to softmax counterfactual regret minimization, which guarantees convergence in the sequential tabular case. Importantly, our algorithm provides a straightforward way of extending the replicator dynamics to the function approximation setting. Empirical results show that NeuRD quickly adapts to nonstationarities, outperforming policy gradient significantly in both tabular and function approximation settings, when evaluated on the standard imperfect information benchmarks of Kuhn Poker, Leduc Poker, and Goofspiel.

PDF Abstract