Finding Structural Knowledge in Multimodal-BERT

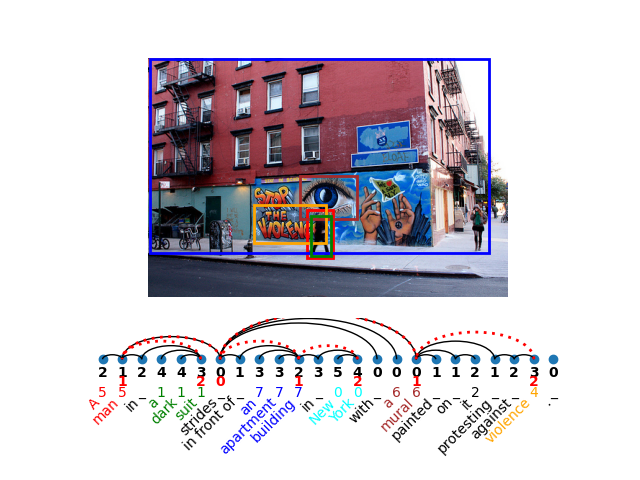

In this work, we investigate the knowledge learned in the embeddings of multimodal-BERT models. More specifically, we probe their ability to store the grammatical structure of linguistic data and the structure learned over objects in visual data. To reach that goal, we first make the inherent structure of language and visuals explicit: for language, by a dependency parse of the sentences that describe the image; for visuals, by the dependencies between the object regions in the image. We call this explicit visual structure the *scene tree*, which is based on the dependency tree of the language description. Extensive probing experiments show that the multimodal-BERT models do not encode these scene trees. Code is available at https://github.com/VSJMilewski/multimodal-probes.
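
The abstract does not say which quantities the probes predict. As an illustration only, the sketch below computes two targets commonly used in structural probing, node depth and pairwise tree distance, from a tree given as a list of head indices. The function names `tree_depths` and `tree_distances` and the head-index encoding are assumptions made for this example, not taken from the paper or its repository. The same representation would apply to a scene tree if each object region were assigned the index of its parent region.

```python
from collections import deque


def tree_depths(heads):
    """Return the depth of every node; heads[i] is the parent of node i, -1 for the root."""
    depths = []
    for i in range(len(heads)):
        depth, node = 0, i
        # Walk up to the root, counting edges.
        while heads[node] != -1:
            node = heads[node]
            depth += 1
        depths.append(depth)
    return depths


def tree_distances(heads):
    """Return the matrix of path lengths (in edges) between all node pairs."""
    n = len(heads)
    # Build an undirected adjacency list from the parent pointers.
    adjacency = [[] for _ in range(n)]
    for child, parent in enumerate(heads):
        if parent != -1:
            adjacency[child].append(parent)
            adjacency[parent].append(child)

    distances = [[0] * n for _ in range(n)]
    for start in range(n):
        # Breadth-first search gives shortest path lengths in a tree.
        seen = {start}
        queue = deque([(start, 0)])
        while queue:
            node, dist = queue.popleft()
            distances[start][node] = dist
            for neighbour in adjacency[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    queue.append((neighbour, dist + 1))
    return distances


# Hypothetical parse of "A dog chases a ball": tokens 0..4, "chases" is the root.
heads = [1, 2, -1, 4, 2]
depths = tree_depths(heads)
distances = tree_distances(heads)
```

Here `depths[2]` is 0 because "chases" is the root, and `distances[0][3]` counts the edges on the path from the first "A" through "dog", "chases", and "ball" to the second "a".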

Published at ACL 2022.

Visual Genome

Flickr30k