ColBERTv2: Effective and Efficient Retrieval via Lightweight Late Interaction

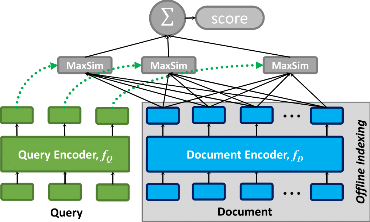

Neural information retrieval (IR) has greatly advanced search and other knowledge-intensive language tasks. While many neural IR methods encode queries and documents into single-vector representations, late interaction models produce multi-vector representations at the granularity of each token and decompose relevance modeling into scalable token-level computations. This decomposition has been shown to make late interaction more effective, but it inflates the space footprint of these models by an order of magnitude. In this work, we introduce ColBERTv2, a retriever that couples an aggressive residual compression mechanism with a denoised supervision strategy to simultaneously improve the quality and space footprint of late interaction. We evaluate ColBERTv2 across a wide range of benchmarks, establishing state-of-the-art quality within and outside the training domain while reducing the space footprint of late interaction models by 6–10×.
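To make the token-level decomposition concrete, here is a minimal sketch of late interaction scoring. It assumes the sum-of-maxima ("MaxSim") form used by the original ColBERT: each query token embedding is matched to its most similar document token embedding, and those maxima are summed. The abstract does not spell out the scoring function, so read this as an illustration, not as the authors' implementation. The function names and the toy 2-dimensional vectors are hypothetical.

```python
import math


def normalize(vec):
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def late_interaction_score(query_vecs, doc_vecs):
    """Sum, over query tokens, of the best cosine match among document tokens.

    Assumed MaxSim formulation; both inputs are lists of per-token vectors.
    """
    q = [normalize(v) for v in query_vecs]
    d = [normalize(v) for v in doc_vecs]
    score = 0.0
    for qv in q:
        # Best-matching document token for this query token.
        score += max(sum(a * b for a, b in zip(qv, dv)) for dv in d)
    return score


# Toy example (hypothetical embeddings, one vector per token).
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]  # has a close match for each query token
doc_b = [[1.0, 1.0]]                          # a single token that partly matches both

score_a = late_interaction_score(query, doc_a)
score_b = late_interaction_score(query, doc_b)
```

In this toy case `doc_a` scores higher than `doc_b` because every query token finds an exact match among its tokens. Storing one vector per document token is what drives the space footprint the abstract refers to, and it is the cost that the residual compression in ColBERTv2 targets.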

NAACL 2022

SQuAD

Natural Questions

MS MARCO

HotpotQA

FEVER

BEIR

GooAQ