Spherical Latent Spaces for Stable Variational Autoencoders

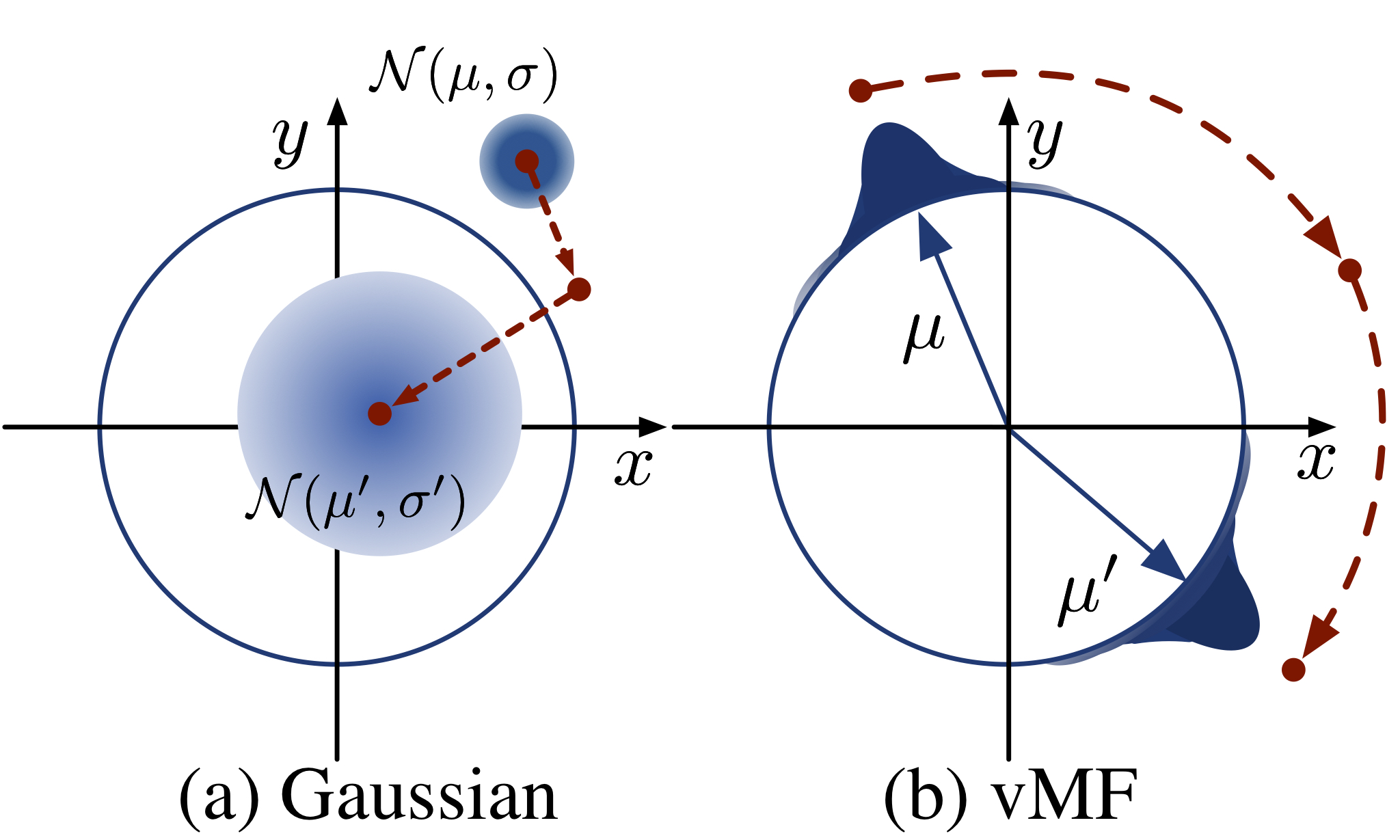

A hallmark of variational autoencoders (VAEs) for text processing is their combination of powerful encoder-decoder models, such as LSTMs, with simple latent distributions, typically multivariate Gaussians. These models pose a difficult optimization problem: there is an especially bad local optimum where the variational posterior always equals the prior and the model does not use the latent variable at all, a kind of "collapse" which is encouraged by the KL divergence term of the objective. In this work, we experiment with another choice of latent distribution, namely the von Mises-Fisher (vMF) distribution, which places mass on the surface of the unit hypersphere. With this choice of prior and posterior, the KL divergence term now only depends on the variance of the vMF distribution, giving us the ability to treat it as a fixed hyperparameter. We show that doing so not only averts the KL collapse, but consistently gives better likelihoods than Gaussians across a range of modeling conditions, including recurrent language modeling and bag-of-words document modeling. An analysis of the properties of our vMF representations shows that they learn richer and more nuanced structures in their latent representations than their Gaussian counterparts.

PDF Abstract EMNLP 2018 PDF EMNLP 2018 Abstract

Penn Treebank

Penn Treebank

AG News

AG News