A Differentiable Perceptual Audio Metric Learned from Just Noticeable Differences

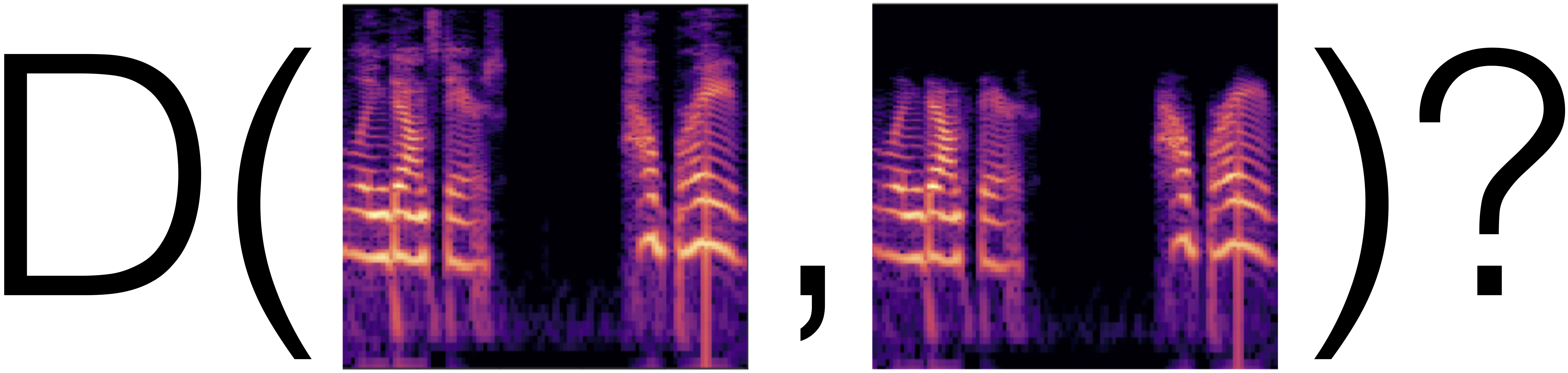

Assessment of many audio processing tasks relies on subjective evaluation, which is time-consuming and expensive. Efforts have been made to create objective metrics, but existing ones correlate poorly with human judgment. In this work, we construct a differentiable metric by fitting a deep neural network to a newly collected dataset of just-noticeable differences (JND), in which humans annotate whether the two audio clips in a pair are identical or not. By varying the type of difference, including noise, reverb, and compression artifacts, we are able to learn a metric that is well-calibrated with human judgments. Furthermore, we evaluate this metric by training a neural network with the metric as its loss function. We find that simply replacing an existing loss with our metric yields a significant improvement in denoising, as measured by subjective pairwise comparison.
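To make the idea of fitting a metric to JND labels concrete, the following is a minimal sketch and not the method from the paper. It assumes the distance between two clips is a weighted sum of per-layer feature differences and that the human "same or different" label is modeled by a sigmoid of that distance. The names `toy_features`, `feature_distance`, `jnd_loss`, and the `scale` and `offset` parameters are hypothetical, and the moving-average "layers" only stand in for the activations of a deep network.

```python
import math

def toy_features(clip):
    """Hypothetical stand-in for deep network activations: the raw signal
    plus two progressively smoothed copies (moving averages)."""
    layers = [list(clip)]
    for width in (2, 4):
        prev = layers[-1]
        smoothed = [
            sum(prev[i:i + width]) / width
            for i in range(len(prev) - width + 1)
        ]
        layers.append(smoothed)
    return layers

def feature_distance(clip_a, clip_b, layer_weights=(1.0, 1.0, 1.0)):
    """Weighted sum of mean absolute differences between per-layer features.
    Assumes both clips have the same length."""
    total = 0.0
    layers_a = toy_features(clip_a)
    layers_b = toy_features(clip_b)
    for w, fa, fb in zip(layer_weights, layers_a, layers_b):
        total += w * sum(abs(x - y) for x, y in zip(fa, fb)) / len(fa)
    return total

def jnd_loss(distance, heard_difference, scale=10.0, offset=-1.0):
    """Binary cross-entropy between sigmoid(scale * distance + offset)
    and the human label (True if the listener heard a difference).
    scale and offset are illustrative values, not fitted ones."""
    p = 1.0 / (1.0 + math.exp(-(scale * distance + offset)))
    p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard against log(0)
    return -math.log(p) if heard_difference else -math.log(1.0 - p)

# Synthetic clips: a sine wave with a small and a large perturbation.
reference = [math.sin(0.1 * n) for n in range(64)]
slightly_noisy = [x + 0.001 * (-1) ** n for n, x in enumerate(reference)]
heavily_noisy = [x + 0.3 * (-1) ** n for n, x in enumerate(reference)]

d_identical = feature_distance(reference, reference)
d_small = feature_distance(reference, slightly_noisy)
d_large = feature_distance(reference, heavily_noisy)
```

In a real setting the feature extractor, layer weights, `scale`, and `offset` would be trained jointly in an automatic-differentiation framework, so that the resulting distance stays differentiable and can replace an existing loss when training a denoising network.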
