A Novel Evolution Strategy with Directional Gaussian Smoothing for Blackbox Optimization

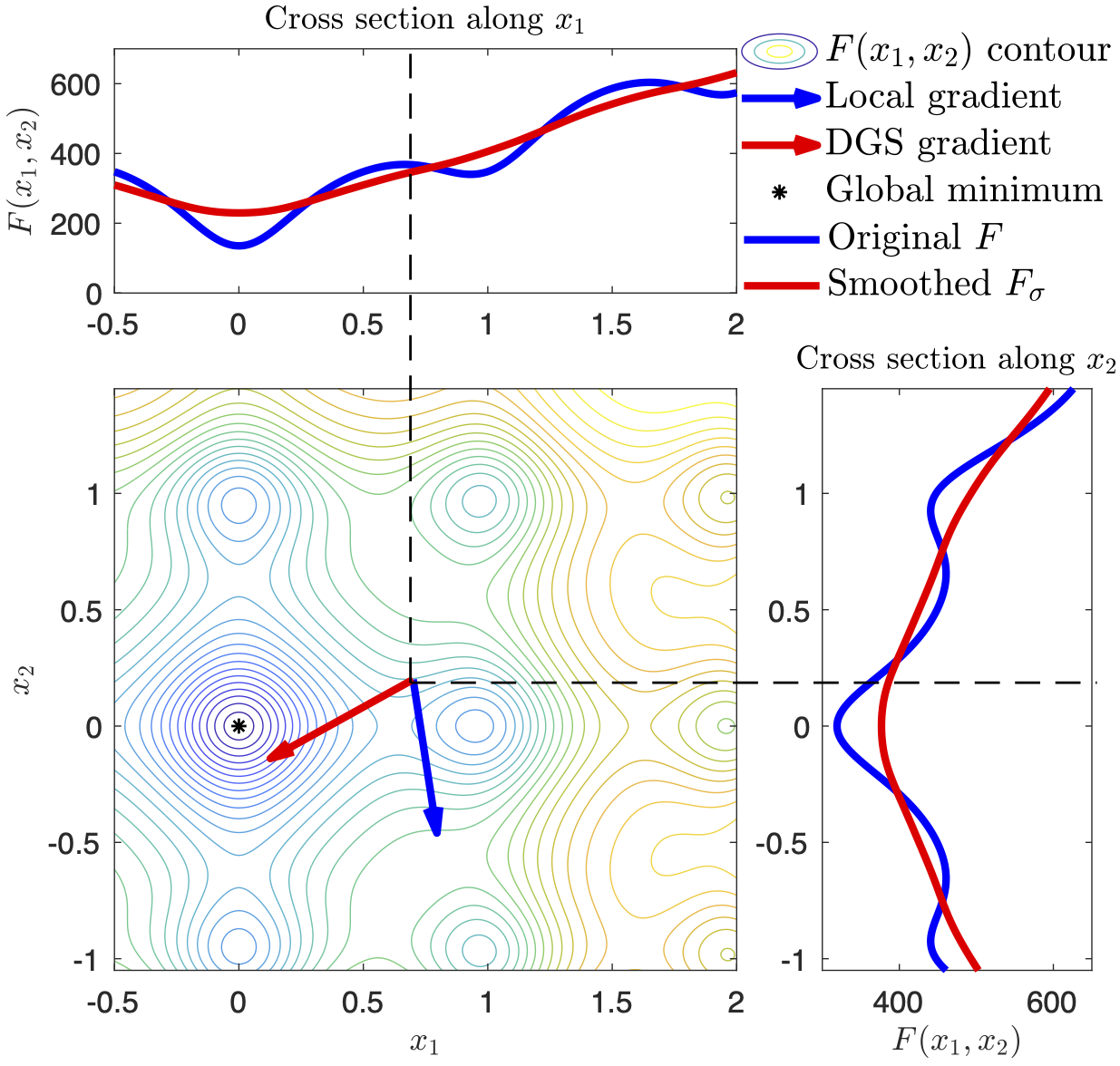

We propose an improved evolution strategy (ES) that uses a novel nonlocal gradient operator for high-dimensional black-box optimization. Standard ES methods with $d$-dimensional Gaussian smoothing suffer from the curse of dimensionality because Monte Carlo (MC) based gradient estimators have high variance. To control this variance, the Gaussian smoothing is usually restricted to a small region, so existing ES methods lack the nonlocal exploration needed to escape from local minima. We address this challenge by developing a nonlocal gradient operator with directional Gaussian smoothing (DGS). DGS conducts 1D nonlocal explorations along $d$ orthogonal directions in $\mathbb{R}^d$, each of which defines a nonlocal directional derivative as a 1D integral. We then estimate these $d$ 1D integrals with Gauss-Hermite quadrature instead of MC sampling, which ensures high accuracy (i.e., small variance). Our method enables effective nonlocal exploration that facilitates global search in high-dimensional optimization. We demonstrate the superior performance of our method on three sets of examples, including benchmark functions for global optimization and real-world science and engineering applications.
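To make the DGS idea concrete, here is a minimal sketch of the gradient estimator only, under stated assumptions. We assume the nonlocal directional derivative along a unit direction $\xi_i$ is the derivative of the 1D Gaussian smoothing $F_{\sigma,i}(y) = \mathbb{E}_{v \sim \mathcal{N}(0,1)}[f(x + (y + \sigma v)\xi_i)]$ at $y = 0$, which equals $\frac{1}{\sigma}\mathbb{E}_v[f(x + \sigma v \xi_i)\, v]$. Substituting $v = \sqrt{2}\,t$ and applying $M$-point Gauss-Hermite quadrature (nodes $t_m$, weights $w_m$ for the weight $e^{-t^2}$) gives $\mathcal{D}_i \approx \frac{1}{\sqrt{\pi}\,\sigma}\sum_{m=1}^{M} w_m\, f(x + \sqrt{2}\,\sigma t_m \xi_i)\,\sqrt{2}\,t_m$, and the gradient estimate is $\sum_i \mathcal{D}_i \xi_i$. The function name `dgs_gradient` and its parameters (`sigma`, `num_points`, `basis`) are hypothetical illustrations, not the authors' code. How the estimate is used inside the full ES (step sizes, choice of directions, schedules for $\sigma$) is not described in this abstract and is not reproduced here.

```python
import numpy as np
from numpy.polynomial.hermite import hermgauss


def dgs_gradient(f, x, sigma=1.0, num_points=5, basis=None):
    """Hypothetical sketch of a DGS nonlocal gradient estimate of f at x.

    f          : callable mapping an array of shape (d,) to a float
    x          : current point, shape (d,)
    sigma      : smoothing radius (larger -> more nonlocal exploration)
    num_points : number M of Gauss-Hermite nodes per direction
    basis      : (d, d) array whose ROWS are orthonormal directions xi_i;
                 defaults to the standard basis
    """
    x = np.asarray(x, dtype=float)
    d = x.size
    Xi = np.eye(d) if basis is None else np.asarray(basis, dtype=float)
    # Nodes t_m and weights w_m for integrals of the form ∫ exp(-t^2) g(t) dt
    t, w = hermgauss(num_points)
    D = np.empty(d)
    for i in range(d):
        # Evaluate f at the M quadrature points on the line x + sqrt(2)*sigma*t_m*xi_i
        vals = np.array([f(x + np.sqrt(2.0) * sigma * tm * Xi[i]) for tm in t])
        # Quadrature approximation of (1/sigma) * E_v[f(x + sigma*v*xi_i) * v]
        D[i] = np.sum(w * vals * np.sqrt(2.0) * t) / (np.sqrt(np.pi) * sigma)
    # Combine the d directional derivatives: sum_i D_i * xi_i
    return Xi.T @ D


# Usage: a random orthonormal basis from a QR factorization
rng = np.random.default_rng(0)
Q, _ = np.linalg.qr(rng.standard_normal((4, 4)))
x0 = np.array([1.0, -2.0, 0.5, 3.0])
g = dgs_gradient(lambda z: float(np.sum(z**2)), x0, sigma=2.0, basis=Q.T)
```

Each direction costs only $M$ function evaluations, so one gradient estimate costs $dM$ evaluations. Quadrature is deterministic, which is the source of the low variance the abstract refers to. For a quadratic such as $\sum_j z_j^2$, the integrand is a polynomial of degree 3 in $t$, so quadrature with $M \ge 2$ is exact and `g` recovers the true gradient $2x_0$ for any $\sigma$. For nonconvex functions, a large $\sigma$ instead averages over a wide neighborhood, which is the nonlocal behavior the method relies on.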
