A Survey of Evaluation Methods and Measures for Interpretable Machine Learning

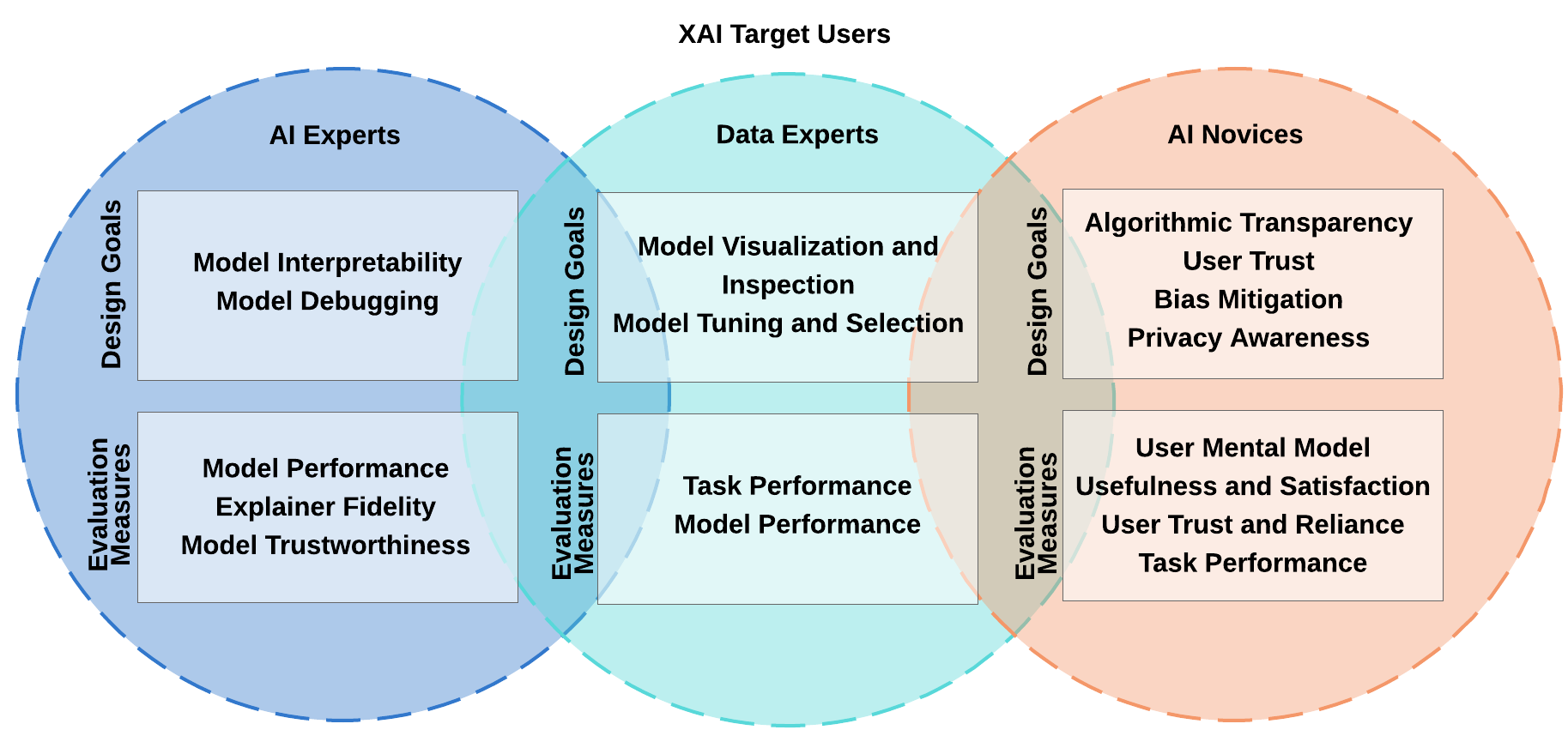

The need for interpretable and accountable intelligent systems becomes more pressing as artificial intelligence plays a larger role in human life. Explainable artificial intelligence systems offer a solution by explaining the reasoning behind their own decisions and predictions. Researchers from different disciplines work together to define, design, and evaluate interpretable intelligent systems for the user. Our work supports the different evaluation goals in interpretable machine learning research through a thorough review of the evaluation methodologies used in machine-explanation research across the fields of human-computer interaction, visual analytics, and machine learning. We present a 2D categorization of interpretable machine learning evaluation methods and show a mapping between user groups and evaluation measures. Further, we address the essential factors and steps of a sound evaluation plan by proposing a nested model for the design and evaluation of explainable artificial intelligence systems.
