Adversarial Momentum-Contrastive Pre-Training

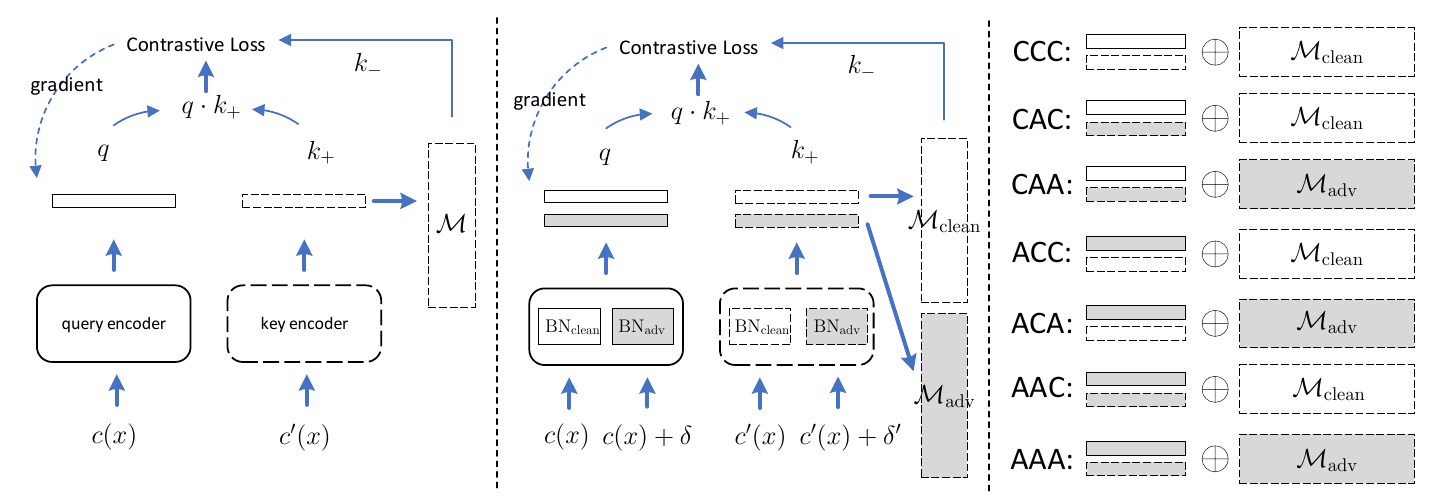

Recently proposed adversarial self-supervised learning methods usually require large batches and many training epochs to extract robust features, which imposes heavy computational overhead on platforms with limited resources. To help the network learn more powerful feature representations with smaller batches and fewer epochs, this paper proposes a novel adversarial momentum-contrastive learning method that introduces two memory banks, one for clean samples and one for adversarial samples. These memory banks are dynamically incorporated into the training process to track invariant features across historical mini-batches. Compared with previous adversarial pre-training models, our method achieves superior performance with a smaller batch size and fewer training epochs. In addition, after fine-tuning on downstream classification tasks, the model outperforms some state-of-the-art supervised defensive methods on multiple benchmark datasets.
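
The abstract does not spell out the mechanics, so the following is only a minimal NumPy sketch of the general idea of momentum-contrastive training with two memory banks, not the authors' implementation. The names `MemoryBank`, `info_nce`, `momentum_update`, and `normalize`, the bank size, the temperature, and the choice to contrast a clean query against keys from the adversarial bank are all assumptions made for illustration. Random vectors stand in for encoder outputs, and a small random perturbation stands in for a real adversarial attack.

```python
import numpy as np


def normalize(x):
    """L2-normalize each row (hypothetical helper)."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


class MemoryBank:
    """Fixed-size FIFO queue of feature vectors (hypothetical helper)."""

    def __init__(self, size, dim, seed=0):
        rng = np.random.default_rng(seed)
        self.feats = normalize(rng.normal(size=(size, dim)))
        self.ptr = 0

    def enqueue(self, keys):
        # Overwrite the oldest entries with the newest keys, wrapping around.
        idx = (self.ptr + np.arange(len(keys))) % len(self.feats)
        self.feats[idx] = keys
        self.ptr = (self.ptr + len(keys)) % len(self.feats)


def info_nce(query, pos_key, negatives, temperature=0.2):
    """InfoNCE loss: each query should match its own key against bank negatives."""
    l_pos = np.sum(query * pos_key, axis=1, keepdims=True)  # shape (B, 1)
    l_neg = query @ negatives.T                             # shape (B, K)
    logits = np.concatenate([l_pos, l_neg], axis=1) / temperature
    logits -= logits.max(axis=1, keepdims=True)             # numerical stability
    log_prob = logits[:, 0] - np.log(np.exp(logits).sum(axis=1))
    return -log_prob.mean()


def momentum_update(key_params, query_params, m=0.999):
    """Exponential moving average of the query encoder's weights."""
    return m * key_params + (1.0 - m) * query_params


# Toy usage: one bank per sample type, as described in the abstract.
rng = np.random.default_rng(1)
clean_bank = MemoryBank(size=64, dim=16, seed=0)
adv_bank = MemoryBank(size=64, dim=16, seed=1)

q_clean = normalize(rng.normal(size=(8, 16)))                  # stand-in for clean features
k_adv = normalize(q_clean + 0.05 * rng.normal(size=(8, 16)))   # stand-in for adversarial keys

# Assumed pairing: clean queries are contrasted against the adversarial bank.
loss = info_nce(q_clean, k_adv, adv_bank.feats)

# After the step, the newest keys replace the oldest entries in each bank.
clean_bank.enqueue(q_clean)
adv_bank.enqueue(k_adv)
```

Because negatives come from the banks rather than from the current mini-batch, the number of negatives is decoupled from the batch size, which is the usual motivation for queue-based contrastive training with small batches.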

CIFAR-10

CIFAR-100