Attribute-guided image generation from layout

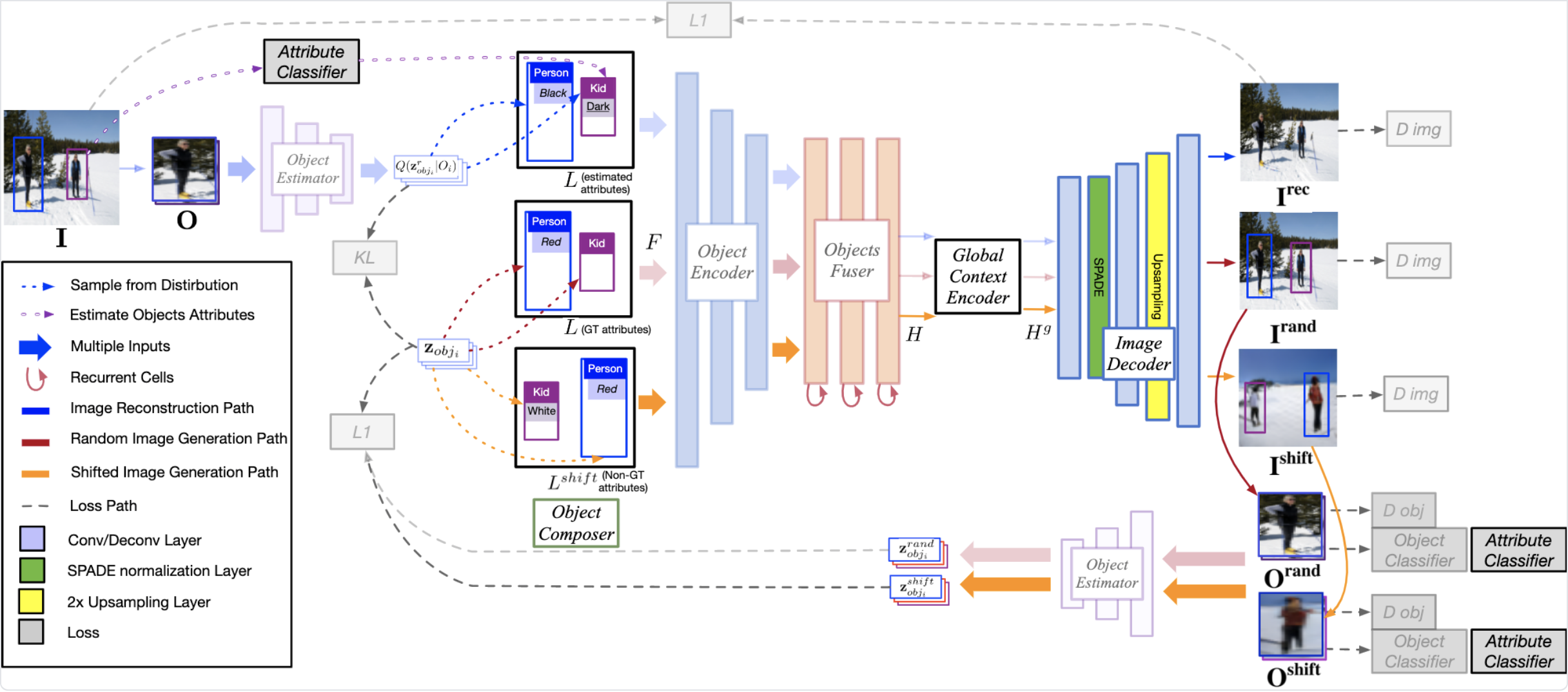

Recent approaches have achieved great success in image generation from structured inputs, e.g., semantic segmentation maps, scene graphs, or layouts. Although these methods allow objects and their locations to be specified at the image level, they lack the fidelity and semantic control needed to specify the visual appearance of those objects at the instance level. To address this limitation, we propose a new image generation method that enables instance-level attribute control. Specifically, the input to our attribute-guided generative model is a tuple that contains: (1) object bounding boxes, (2) object categories, and (3) an optional set of attributes for each object. The output is a generated image in which the requested objects appear at the desired locations and have the prescribed attributes. Several losses work together to encourage accurate, consistent, and diverse image generation. Experiments on the Visual Genome dataset demonstrate our model's capacity to control object-level attributes in generated images and validate the plausibility of a disentangled object-attribute representation in the image-generation-from-layout task. In addition, images generated by our model have higher resolution, object classification accuracy, and consistency than those of the previous state of the art.
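As a rough illustration of the input tuple described above (bounding boxes, categories, and optional per-object attributes), the following Python sketch shows one way such a layout could be represented and its attributes turned into a multi-hot vector. The names `LayoutObject`, `encode_attributes`, and `ATTRIBUTE_VOCAB`, the normalized `(x0, y0, x1, y1)` box convention, and the multi-hot encoding are all assumptions made for this example; the abstract does not specify the model's actual input format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class LayoutObject:
    """One object in the layout (hypothetical structure, not the paper's API)."""
    # Bounding box as (x0, y0, x1, y1), normalized to [0, 1]; an assumed convention.
    box: Tuple[float, float, float, float]
    # Object category name, e.g. "horse".
    category: str
    # Optional attributes; an empty list means no attribute was requested.
    attributes: List[str] = field(default_factory=list)


def encode_attributes(obj: LayoutObject, vocab: List[str]) -> List[int]:
    """Return a multi-hot vector over `vocab` marking the object's attributes."""
    vec = [0] * len(vocab)
    for name in obj.attributes:
        vec[vocab.index(name)] = 1  # raises ValueError for unknown attributes
    return vec


# A toy attribute vocabulary, chosen only for this example.
ATTRIBUTE_VOCAB = ["green", "brown", "standing", "white"]

# A toy layout: two objects with attributes, one without.
layout = [
    LayoutObject((0.0, 0.5, 1.0, 1.0), "grass", ["green"]),
    LayoutObject((0.3, 0.2, 0.7, 0.8), "horse", ["brown", "standing"]),
    LayoutObject((0.0, 0.0, 1.0, 0.5), "sky"),
]

for obj in layout:
    print(obj.category, obj.box, encode_attributes(obj, ATTRIBUTE_VOCAB))
```

Keeping the attribute list separate from the category mirrors the disentangled object-attribute representation mentioned in the abstract: the same "horse" box could be re-encoded with different attributes without touching its location or class.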

Visual Genome
