BABEL: Bodies, Action and Behavior with English Labels

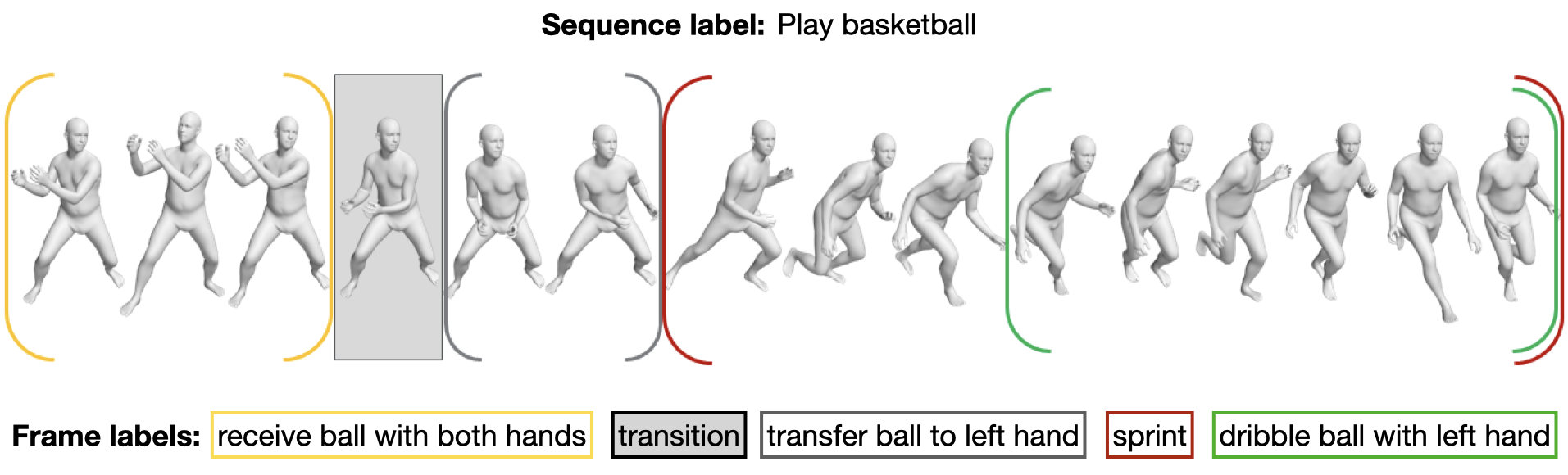

Understanding the semantics of human movement -- the what, how and why of the movement -- is an important problem that requires datasets of human actions with semantic labels. Existing datasets take one of two approaches. Large-scale video datasets contain many action labels but do not contain ground-truth 3D human motion. Alternatively, motion-capture (mocap) datasets have precise body motions but are limited to a small number of actions. To address this, we present BABEL, a large dataset with language labels describing the actions being performed in mocap sequences. BABEL consists of action labels for about 43 hours of mocap sequences from AMASS. Action labels are at two levels of abstraction -- sequence labels describe the overall action in the sequence, and frame labels describe all actions in every frame of the sequence. Each frame label is precisely aligned with the duration of the corresponding action in the mocap sequence, and multiple actions can overlap. There are over 28k sequence labels, and 63k frame labels in BABEL, which belong to over 250 unique action categories. Labels from BABEL can be leveraged for tasks like action recognition, temporal action localization, motion synthesis, etc. To demonstrate the value of BABEL as a benchmark, we evaluate the performance of models on 3D action recognition. We demonstrate that BABEL poses interesting learning challenges that are applicable to real-world scenarios, and can serve as a useful benchmark of progress in 3D action recognition. The dataset, baseline method, and evaluation code is made available, and supported for academic research purposes at https://babel.is.tue.mpg.de/.

PDF Abstract CVPR 2021 PDF CVPR 2021 Abstract

BABEL

BABEL

Human3.6M

Human3.6M

NTU RGB+D

NTU RGB+D

HACS

HACS

HumanAct12

HumanAct12

MoVi

MoVi