CascadePSP: Toward Class-Agnostic and Very High-Resolution Segmentation via Global and Local Refinement

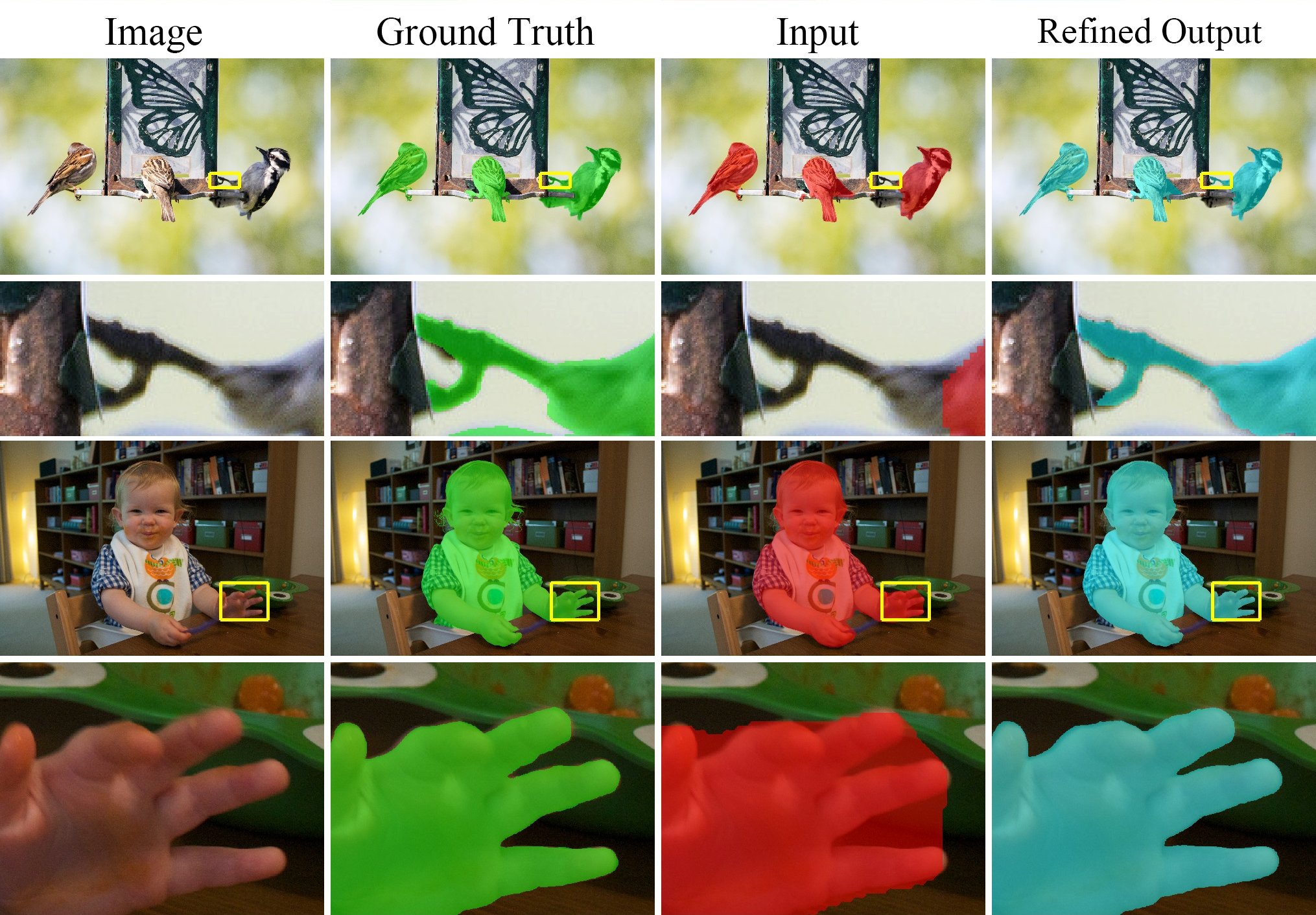

State-of-the-art semantic segmentation methods are trained almost exclusively on images within a fixed resolution range. Their segmentations are inaccurate for very high-resolution images because bicubic upsampling of a low-resolution segmentation does not adequately capture high-resolution details along object boundaries. In this paper, we propose a novel approach to address the high-resolution segmentation problem without using any high-resolution training data. The key insight is our CascadePSP network, which refines and corrects local boundaries whenever possible. Although our network is trained with low-resolution segmentation data, our method is applicable to any resolution, even to very high-resolution images larger than 4K. We present quantitative and qualitative studies on different datasets to show that CascadePSP can reveal pixel-accurate segmentation boundaries using our novel refinement module without any finetuning. Thus, our method can be regarded as class-agnostic. Finally, we demonstrate the application of our model to scene parsing in multi-class segmentation.
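To make the motivating claim concrete, the following is a minimal sketch of why upsampling a low-resolution segmentation loses detail along object boundaries. It is not the paper's method or code: the synthetic disk, the 64-to-1024 resolution pair, and the helper names (`disk_mask`, `upsample_nearest`, `iou`) are all assumptions chosen for illustration, and nearest-neighbour upsampling stands in for bicubic so that the example needs only NumPy.

```python
import numpy as np

def disk_mask(size, radius_frac=0.37):
    """Binary mask of a centered disk on a size x size grid (toy 'object')."""
    ys, xs = np.mgrid[0:size, 0:size]
    c = (size - 1) / 2.0
    return (ys - c) ** 2 + (xs - c) ** 2 <= (radius_frac * size) ** 2

def upsample_nearest(mask, factor):
    """Nearest-neighbour upsampling (assumed stand-in for bicubic)."""
    return np.repeat(np.repeat(mask, factor, axis=0), factor, axis=1)

def iou(a, b):
    """Intersection over union of two binary masks."""
    union = np.logical_or(a, b).sum()
    return np.logical_and(a, b).sum() / union if union else 1.0

HIGH, LOW = 1024, 64            # hypothetical resolutions
factor = HIGH // LOW

high = disk_mask(HIGH)          # "ground truth" at high resolution
low = disk_mask(LOW)            # stand-in for a low-resolution prediction
up = upsample_nearest(low, factor)

# Locate the disagreeing pixels and measure how far they sit from the
# true boundary (a circle of radius 0.37 * HIGH around the image center).
ys, xs = np.nonzero(high != up)
center = (HIGH - 1) / 2.0
radius = 0.37 * HIGH
max_dev = np.abs(np.hypot(ys - center, xs - center) - radius).max()

score = iou(high, up)
n_wrong = ys.size
print(f"IoU: {score:.4f}, mismatched pixels: {n_wrong}, "
      f"max distance from boundary: {max_dev:.1f} px")
```

In this toy setting the overall IoU stays high, yet every mismatched pixel lies in a thin band around the object boundary. That is the region a boundary-refinement step is meant to correct.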

CVPR 2020

Results from the Paper

Ranked #1 on Semantic Segmentation on BIG (using extra training data)

BIG

ADE20K

DeepGlobe