Training a Resilient Q-Network against Observational Interference

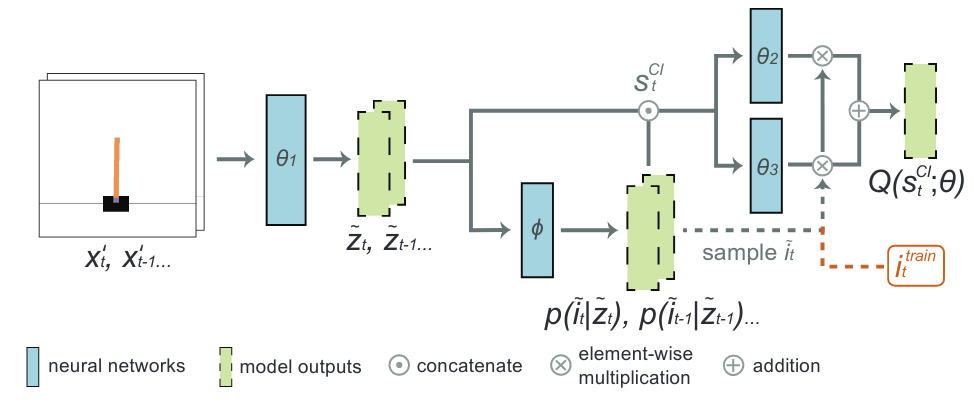

Deep reinforcement learning (DRL) has demonstrated impressive performance in various gaming simulators and real-world applications. In practice, however, a DRL agent may receive faulty observations caused by abrupt interference such as black-outs, frozen screens, and adversarial perturbations. Designing a DRL algorithm that is resilient in these rare but mission-critical and safety-crucial scenarios is an essential yet challenging task. In this paper, we consider a deep Q-network (DQN) framework trained with an auxiliary task on observational interference, such as artificial noise. Inspired by causal inference for observational interference, we propose a causal-inference-based DQN algorithm called the causal inference Q-network (CIQ). We evaluate CIQ in several benchmark DQN environments, using different types of interference as auxiliary labels. Our experimental results show that the proposed CIQ method can achieve higher performance and greater resilience against observational interference.
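To make the interference types named above concrete, the following is a minimal sketch of how a single observation might be corrupted and paired with a binary interference label during training. It is an illustration only, not the paper's implementation: the helper `apply_interference`, the probability `p`, the noise scale `noise_std`, and the binary label encoding are all assumptions introduced here.

```python
import numpy as np


def apply_interference(obs, prev_obs, rng, p=0.2, kind="blackout", noise_std=0.1):
    """Return (observation, label), where label is 1 if interference was applied.

    Hypothetical helper: the names, the probability p, and the noise scale
    are illustrative assumptions, not values taken from the paper.
    """
    # With probability 1 - p the observation passes through unchanged.
    if rng.random() >= p:
        return obs, 0

    if kind == "blackout":
        # Black-out: the agent sees an all-zero observation.
        corrupted = np.zeros_like(obs)
    elif kind == "frozen":
        # Frozen screen: the agent sees the previous observation again.
        corrupted = prev_obs.copy()
    elif kind == "gaussian":
        # Artificial noise: additive zero-mean Gaussian perturbation.
        corrupted = obs + rng.normal(0.0, noise_std, size=obs.shape)
    else:
        raise ValueError(f"unknown interference kind: {kind}")
    return corrupted, 1


rng = np.random.default_rng(0)
prev_obs = np.array([0.1, 0.2, 0.3, 0.4])
obs = np.array([0.5, 0.6, 0.7, 0.8])
noisy_obs, label = apply_interference(obs, prev_obs, rng, p=0.2, kind="frozen")
```

In a setup like this, the returned label could serve as the target of an auxiliary prediction head trained alongside the Q-value output; how CIQ actually uses the labels is defined in the paper itself.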


OpenAI Gym
