Closed-Loop Data Transcription to an LDR via Minimaxing Rate Reduction

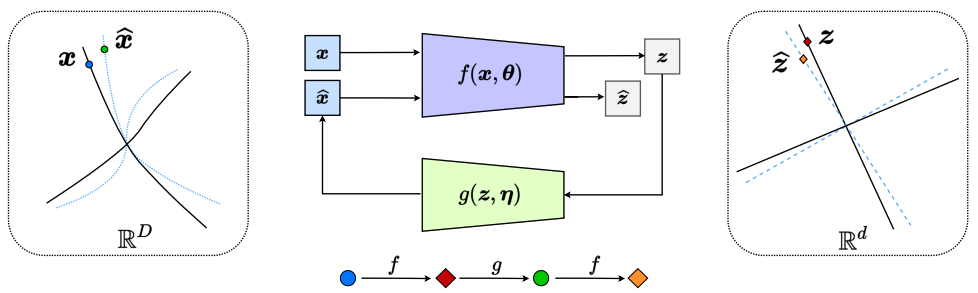

This work proposes a new computational framework for learning a structured generative model for real-world datasets. Specifically, we propose to learn a closed-loop transcription between a multi-class, multi-dimensional data distribution and a linear discriminative representation (LDR) in the feature space that consists of multiple independent multi-dimensional linear subspaces. We argue that the optimal encoding and decoding mappings sought can be formulated as the equilibrium point of a two-player minimax game between the encoder and the decoder. A natural utility function for this game is the so-called rate reduction, a simple information-theoretic measure of distances between mixtures of subspace-like Gaussians in the feature space. Our formulation draws inspiration from closed-loop error feedback in control systems and avoids the expensive evaluation and minimization of approximated distances between arbitrary distributions in either the data space or the feature space. To a large extent, this new formulation unifies the concepts and benefits of Auto-Encoding and GAN and naturally extends them to the setting of learning a representation that is both discriminative and generative for multi-class, multi-dimensional real-world data. Our extensive experiments on many benchmark image datasets demonstrate the tremendous potential of this new closed-loop formulation: under fair comparison, the visual quality of the learned decoder and the classification performance of the encoder are competitive with, and often better than, those of existing methods based on GAN, VAE, or a combination of both. Unlike in existing generative models, the features learned for the multiple classes are structured: different classes are explicitly mapped onto corresponding independent principal subspaces in the feature space. Source code can be found at https://github.com/Delay-Xili/LDR.
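To make the notion of rate reduction as a distance more concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes the coding-rate formula commonly used in the rate reduction literature, R(Z) = 1/2 log det(I + d/(n ε²) Z Zᵀ), which the abstract itself does not state. The function names `coding_rate` and `rate_reduction_distance` and the default `eps` are hypothetical choices made for illustration only.

```python
import numpy as np

def coding_rate(Z, eps=0.5):
    """Coding rate of features Z (d x n, one sample per column).

    Assumed formula: 0.5 * logdet(I + d / (n * eps^2) * Z Z^T).
    """
    d, n = Z.shape
    gram = np.eye(d) + (d / (n * eps**2)) * (Z @ Z.T)
    # slogdet is numerically safer than log(det(...)).
    _, logdet = np.linalg.slogdet(gram)
    return 0.5 * logdet

def rate_reduction_distance(Z1, Z2, eps=0.5):
    """Rate reduction between two feature sets (hypothetical helper).

    Computed as the coding rate of the union minus the
    sample-weighted coding rates of the two sets.
    """
    n1, n2 = Z1.shape[1], Z2.shape[1]
    n = n1 + n2
    Z = np.concatenate([Z1, Z2], axis=1)
    return (coding_rate(Z, eps)
            - (n1 / n) * coding_rate(Z1, eps)
            - (n2 / n) * coding_rate(Z2, eps))

# Two sets of features lying in orthogonal 2-D subspaces of R^4.
rng = np.random.default_rng(0)
Z1 = np.zeros((4, 50)); Z1[:2] = rng.standard_normal((2, 50))
Z2 = np.zeros((4, 50)); Z2[2:] = rng.standard_normal((2, 50))

d_same = rate_reduction_distance(Z1, Z1)  # identical sets
d_diff = rate_reduction_distance(Z1, Z2)  # orthogonal subspaces
```

Under these assumptions, the distance is zero (up to floating-point error) when the two sets coincide and strictly positive when they occupy different subspaces. In the minimax game described above, such a quantity would be compared between the encoded data and the encoding of its decoded reconstruction; how exactly the two players use it is specified in the paper, not in this sketch.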

CIFAR-10

MNIST

CelebA

STL-10

LSUN