Co-occurrence Feature Learning from Skeleton Data for Action Recognition and Detection with Hierarchical Aggregation

Skeleton-based human action recognition has recently drawn increasing attentions with the availability of large-scale skeleton datasets. The most crucial factors for this task lie in two aspects: the intra-frame representation for joint co-occurrences and the inter-frame representation for skeletons' temporal evolutions. In this paper we propose an end-to-end convolutional co-occurrence feature learning framework. The co-occurrence features are learned with a hierarchical methodology, in which different levels of contextual information are aggregated gradually. Firstly point-level information of each joint is encoded independently. Then they are assembled into semantic representation in both spatial and temporal domains. Specifically, we introduce a global spatial aggregation scheme, which is able to learn superior joint co-occurrence features over local aggregation. Besides, raw skeleton coordinates as well as their temporal difference are integrated with a two-stream paradigm. Experiments show that our approach consistently outperforms other state-of-the-arts on action recognition and detection benchmarks like NTU RGB+D, SBU Kinect Interaction and PKU-MMD.

PDF Abstract

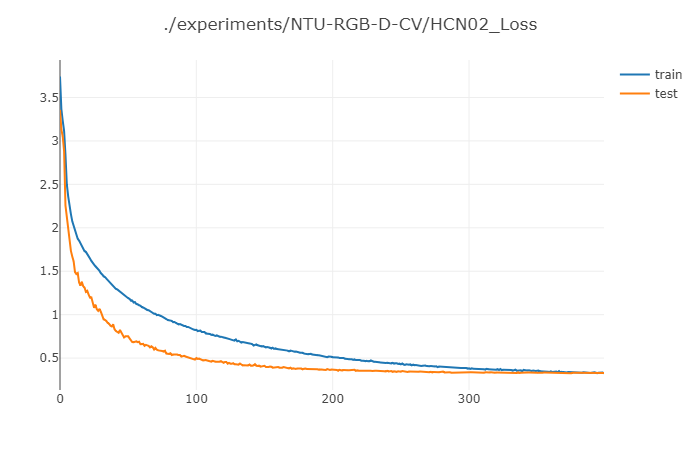

NTU RGB+D

NTU RGB+D

SBU

SBU

PKU-MMD

PKU-MMD