Compressing Features for Learning with Noisy Labels

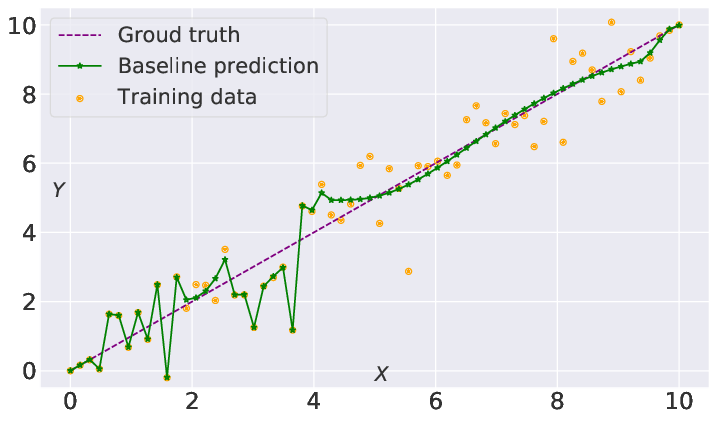

Supervised learning can be viewed as distilling relevant information from input data into feature representations. This process becomes difficult when supervision is noisy, as the distilled information might not be relevant. In fact, recent research shows that networks can easily overfit all labels, including corrupted ones, and hence can hardly generalize to clean datasets. In this paper, we focus on the problem of learning with noisy labels and introduce a compression inductive bias into network architectures to alleviate this over-fitting problem. More precisely, we revisit a classical regularization technique, Dropout, and its variant Nested Dropout. Dropout can serve as a compression constraint through its feature-dropping mechanism, while Nested Dropout further learns feature representations ordered by importance. Moreover, models trained with compression regularization are combined with Co-teaching for a further performance boost. Theoretically, we conduct a bias-variance decomposition of the objective function under compression regularization and analyze it for both a single model and Co-teaching. This decomposition provides three insights: (i) it shows that over-fitting is indeed an issue for learning with noisy labels; (ii) through an information bottleneck formulation, it explains why the proposed feature compression helps combat label noise; (iii) it explains the performance boost brought by incorporating compression regularization into Co-teaching. Experiments show that our simple approach achieves performance comparable to, or even better than, state-of-the-art methods on benchmarks with real-world label noise, including Clothing1M and ANIMAL-10N. Our implementation is available at https://yingyichen-cyy.github.io/CompressFeatNoisyLabels/.
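The abstract relies on three mechanisms: standard Dropout, Nested Dropout, and Co-teaching's exchange of small-loss samples between two networks. The sketch below illustrates each one on plain Python lists standing in for a feature vector and per-sample losses. It is not the authors' implementation (which is linked above). The function names, the truncated geometric prior on the number of kept units (borrowed from the original Nested Dropout formulation), and the fixed `keep_ratio` parameter are all assumptions made for illustration.

```python
import random
from typing import List, Sequence, Tuple


def dropout(features: Sequence[float], p: float, rng: random.Random) -> List[float]:
    """Inverted Dropout: zero each unit independently with probability p and
    rescale survivors by 1 / (1 - p) so the expected activation is unchanged."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    scale = 1.0 / (1.0 - p)
    return [0.0 if rng.random() < p else x * scale for x in features]


def sample_nested_index(num_units: int, p: float, rng: random.Random) -> int:
    """Hypothetical helper: draw b in {1, ..., num_units} from a geometric
    distribution with success probability p, truncated at num_units."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    b = 1
    while b < num_units and rng.random() >= p:
        b += 1
    return b


def nested_dropout(features: Sequence[float], p: float, rng: random.Random) -> List[float]:
    """Nested Dropout: keep units 0..b-1 and zero every unit from b onward.
    Earlier units survive more often, which pushes the most important
    information into the leading dimensions (an ordered representation)."""
    b = sample_nested_index(len(features), p, rng)
    return [x if i < b else 0.0 for i, x in enumerate(features)]


def small_loss_indices(losses: Sequence[float], keep_ratio: float) -> List[int]:
    """Indices of the keep_ratio fraction of samples with the smallest loss,
    which Co-teaching treats as likely to be correctly labeled."""
    n_keep = int(round(keep_ratio * len(losses)))
    order = sorted(range(len(losses)), key=lambda i: losses[i])
    return sorted(order[:n_keep])


def co_teaching_exchange(
    losses_a: Sequence[float], losses_b: Sequence[float], keep_ratio: float
) -> Tuple[List[int], List[int]]:
    """Co-teaching: each network selects its small-loss samples and the *peer*
    network is updated on them. Returns (indices for updating network A,
    indices for updating network B)."""
    picked_by_a = small_loss_indices(losses_a, keep_ratio)
    picked_by_b = small_loss_indices(losses_b, keep_ratio)
    return picked_by_b, picked_by_a


if __name__ == "__main__":
    rng = random.Random(0)
    feats = [0.9, 0.4, 0.7, 0.2, 0.5, 0.1]
    print(dropout(feats, p=0.5, rng=rng))         # independent zeros, survivors doubled
    print(nested_dropout(feats, p=0.3, rng=rng))  # a kept prefix followed by zeros
    # keep_ratio is fixed here; Co-teaching normally schedules it over epochs.
    print(co_teaching_exchange([0.1, 2.3, 0.4, 1.9], [0.2, 0.3, 2.5, 1.7], 0.5))
    # → ([0, 1], [0, 2])
```

In Nested Dropout, unit i survives only when the sampled index b is at least i + 1, so leading units are kept far more often than trailing ones. This is what makes the learned representation ordered: one can truncate it to its first k units as a compressed feature. Standard Dropout, by contrast, drops units independently and imposes no ordering.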


Results from the Paper

Ranked #10 on Image Classification on Clothing1M (using extra training data)


Datasets

CIFAR-10

ImageNet

CIFAR-100

Clothing1M

ANIMAL