Contrastive Learning Improves Model Robustness Under Label Noise

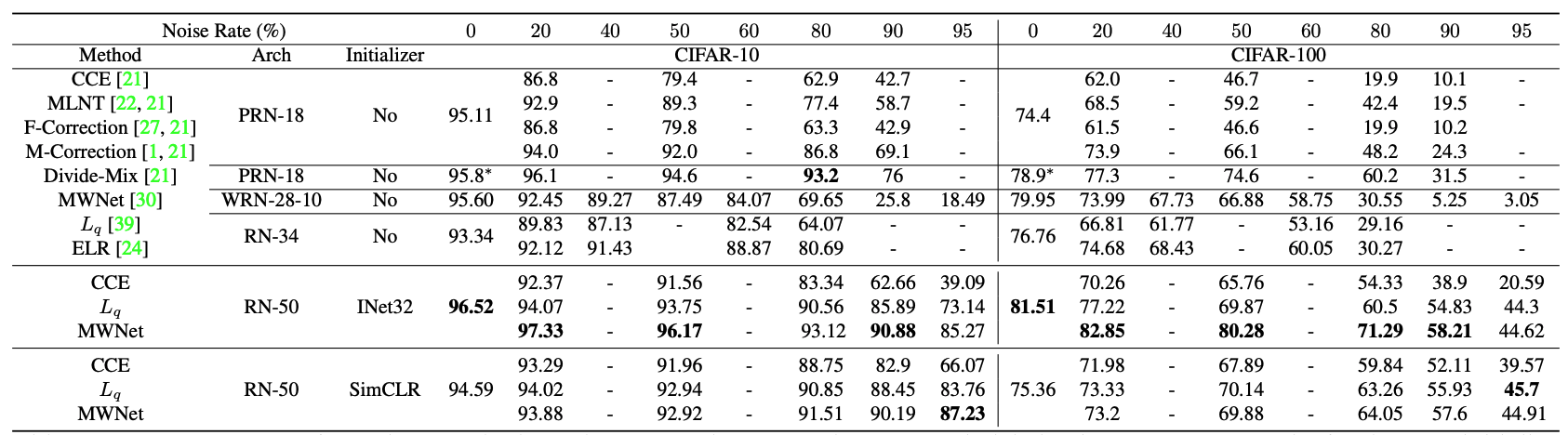

Deep neural network-based classifiers trained with the categorical cross-entropy (CCE) loss are sensitive to label noise in the training data. One common family of methods for mitigating the impact of label noise can be viewed as supervised robust methods: one can simply replace the CCE loss with a loss that is robust to label noise, or re-weight training samples and down-weight those with higher loss values. Recently, another family of methods based on semi-supervised learning (SSL) has been proposed, which augments these supervised robust methods to exploit (possibly) noisy samples more effectively. Although supervised robust methods perform well across different data types, they have been shown to be inferior to SSL methods on image classification tasks under label noise. Therefore, it remains to be seen whether these supervised robust methods can also perform well if they can utilize the unlabeled samples more effectively. In this paper, we show that initializing supervised robust methods using representations learned through contrastive learning leads to significantly improved performance under label noise. Surprisingly, even the simplest method (training a classifier with the CCE loss) can outperform the state-of-the-art SSL method by more than 50% under high label noise when initialized with contrastive learning. Our implementation will be publicly available at https://github.com/arghosh/noisy_label_pretrain.
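The two supervised robust strategies described in the abstract (replacing CCE with a noise-robust loss, and down-weighting high-loss samples) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: generalized cross-entropy (GCE) is used here as one example of a noise-robust loss, and a hard "keep the lowest-loss fraction" rule stands in for sample re-weighting. The function names and the `q` and `keep_ratio` parameters are hypothetical choices for this sketch. In the paper's setting, such losses would be applied to a classifier initialized from contrastive pretraining, a step not shown here.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a single sample's logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cce_loss(logits: Sequence[float], label: int) -> float:
    """Categorical cross-entropy, -log p_y; unbounded as p_y -> 0."""
    return -math.log(softmax(logits)[label])


def gce_loss(logits: Sequence[float], label: int, q: float = 0.7) -> float:
    """Generalized cross-entropy (1 - p_y**q) / q, used here only as one
    example of a noise-robust loss; it is bounded above by 1/q."""
    p_y = softmax(logits)[label]
    return (1.0 - p_y ** q) / q


def small_loss_weights(losses: Sequence[float], keep_ratio: float) -> List[float]:
    """Hypothetical re-weighting rule: weight 1.0 for the keep_ratio fraction
    of samples with the lowest loss, 0.0 for the rest."""
    n_keep = max(1, round(keep_ratio * len(losses)))
    order = sorted(range(len(losses)), key=lambda i: losses[i])
    kept = set(order[:n_keep])
    return [1.0 if i in kept else 0.0 for i in range(len(losses))]


def weighted_mean(losses: Sequence[float], weights: Sequence[float]) -> float:
    """Average loss over the samples that receive non-zero weight."""
    return sum(l * w for l, w in zip(losses, weights)) / sum(weights)


# Toy batch: the third sample is confidently predicted as class 0,
# but its (assumed noisy) label says class 2.
batch_logits = [[4.0, 0.0, 0.0], [0.0, 3.0, 0.0], [5.0, 0.0, 0.0]]
batch_labels = [0, 1, 2]

cce = [cce_loss(z, y) for z, y in zip(batch_logits, batch_labels)]
gce = [gce_loss(z, y) for z, y in zip(batch_logits, batch_labels)]
weights = small_loss_weights(cce, keep_ratio=2 / 3)

print([round(v, 3) for v in cce])
print([round(v, 3) for v in gce])
print(weights, round(weighted_mean(cce, weights), 3))
```

On this toy batch, the mislabeled sample dominates the CCE values, while GCE caps its contribution below 1/q and the small-loss weights exclude it from the averaged loss entirely.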

Clothing1M