Critical Thinking for Language Models

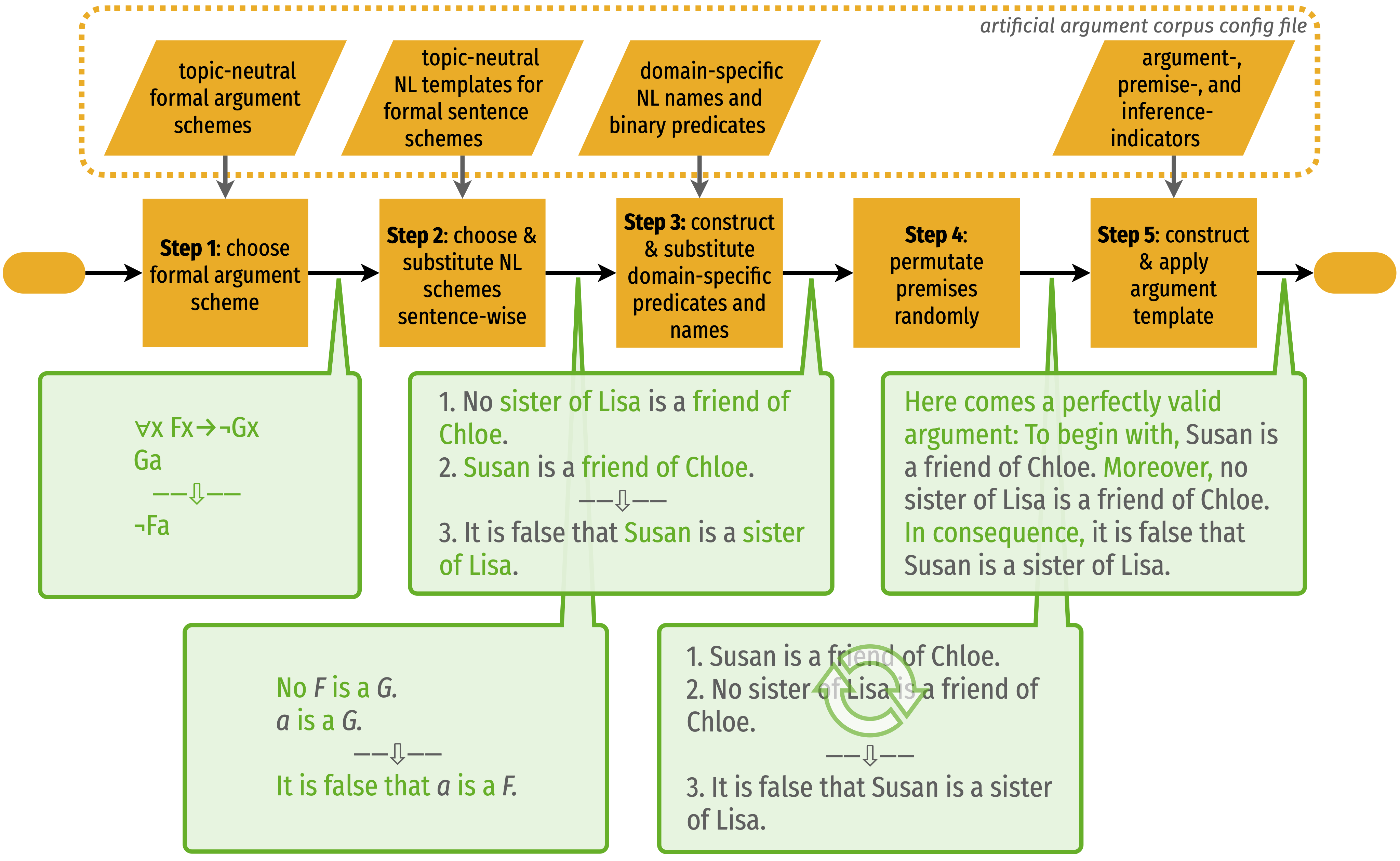

This paper takes a first step towards a critical thinking curriculum for neural auto-regressive language models. We introduce a synthetic corpus of deductively valid arguments, and generate artificial argumentative texts to train and evaluate GPT-2. Significant transfer learning effects can be observed: Training a model on three simple core schemes allows it to accurately complete conclusions of different, and more complex types of arguments, too. The language models generalize the core argument schemes in a correct way. Moreover, we obtain consistent and promising results for NLU benchmarks. In particular, pre-training on the argument schemes raises zero-shot accuracy on the GLUE diagnostics by up to 15 percentage points. The findings suggest that intermediary pre-training on texts that exemplify basic reasoning abilities (such as typically covered in critical thinking textbooks) might help language models to acquire a broad range of reasoning skills. The synthetic argumentative texts presented in this paper are a promising starting point for building such a "critical thinking curriculum for language models."

PDF Abstract IWCS (ACL) 2021 PDF IWCS (ACL) 2021 Abstract

GLUE

GLUE

SNLI

SNLI

LogiQA

LogiQA