Deep Anomaly Detection with Outlier Exposure

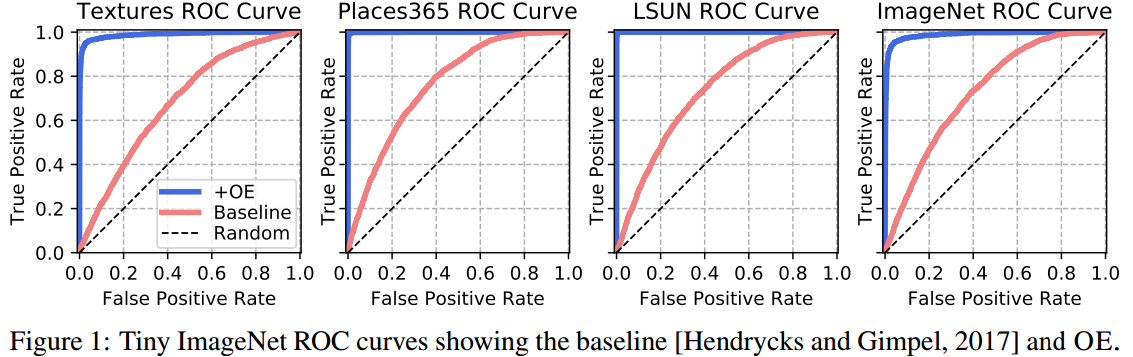

It is important to detect anomalous inputs when deploying machine learning systems. The use of larger and more complex inputs in deep learning magnifies the difficulty of distinguishing between anomalous and in-distribution examples. At the same time, diverse image and text data are available in enormous quantities. We propose leveraging these data to improve deep anomaly detection by training anomaly detectors against an auxiliary dataset of outliers, an approach we call Outlier Exposure (OE). This enables anomaly detectors to generalize and detect unseen anomalies. In extensive experiments on natural language processing and small- and large-scale vision tasks, we find that Outlier Exposure significantly improves detection performance. We also observe that cutting-edge generative models trained on CIFAR-10 may assign higher likelihoods to SVHN images than to CIFAR-10 images; we use OE to mitigate this issue. We also analyze the flexibility and robustness of Outlier Exposure, and identify characteristics of the auxiliary dataset that improve performance.
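The abstract does not spell out the training objective, so the following is only a minimal sketch of one way Outlier Exposure could be realized for a classifier. It assumes the usual cross-entropy on in-distribution examples plus a penalty that pushes predictions on auxiliary outliers toward the uniform distribution. The function names, the weight `lam=0.5`, and the maximum-softmax anomaly score are illustrative assumptions, not details taken from the text above.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax over one list of logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]

def cross_entropy(logits, label):
    """Standard cross-entropy for one in-distribution example."""
    return -log_softmax(logits)[label]

def uniform_cross_entropy(logits):
    """Cross-entropy from the uniform distribution to the softmax prediction.

    It is minimized (value log(num_classes)) when the prediction is uniform.
    """
    logp = log_softmax(logits)
    return -sum(logp) / len(logp)

def oe_loss(in_logits, in_labels, out_logits, lam=0.5):
    """Hypothetical combined objective: in-distribution loss + lam * outlier term."""
    in_term = sum(cross_entropy(z, y) for z, y in zip(in_logits, in_labels))
    in_term /= len(in_logits)
    out_term = sum(uniform_cross_entropy(z) for z in out_logits) / len(out_logits)
    return in_term + lam * out_term

def anomaly_score(logits):
    """Negative maximum softmax probability; larger means more anomalous."""
    return -math.exp(max(log_softmax(logits)))

# Toy batch: two in-distribution examples and two auxiliary outliers.
in_logits = [[4.0, 0.0, 0.0], [0.0, 3.0, 0.0]]
in_labels = [0, 1]
out_logits = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
loss = oe_loss(in_logits, in_labels, out_logits)
```

Under these assumptions, a model trained this way stays confident on in-distribution inputs but is discouraged from being confident on the auxiliary outliers, so a confidence-based score such as `anomaly_score` can separate the two more easily at test time.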

ICLR 2019

Results from the Paper

Ranked #3 on Out-of-Distribution Detection on CIFAR-100 (using extra training data)

Datasets

CIFAR-10

CIFAR-100

SVHN

SST

Places

Tiny ImageNet

Tiny Images