Improved Universal Sentence Embeddings with Prompt-based Contrastive Learning and Energy-based Learning

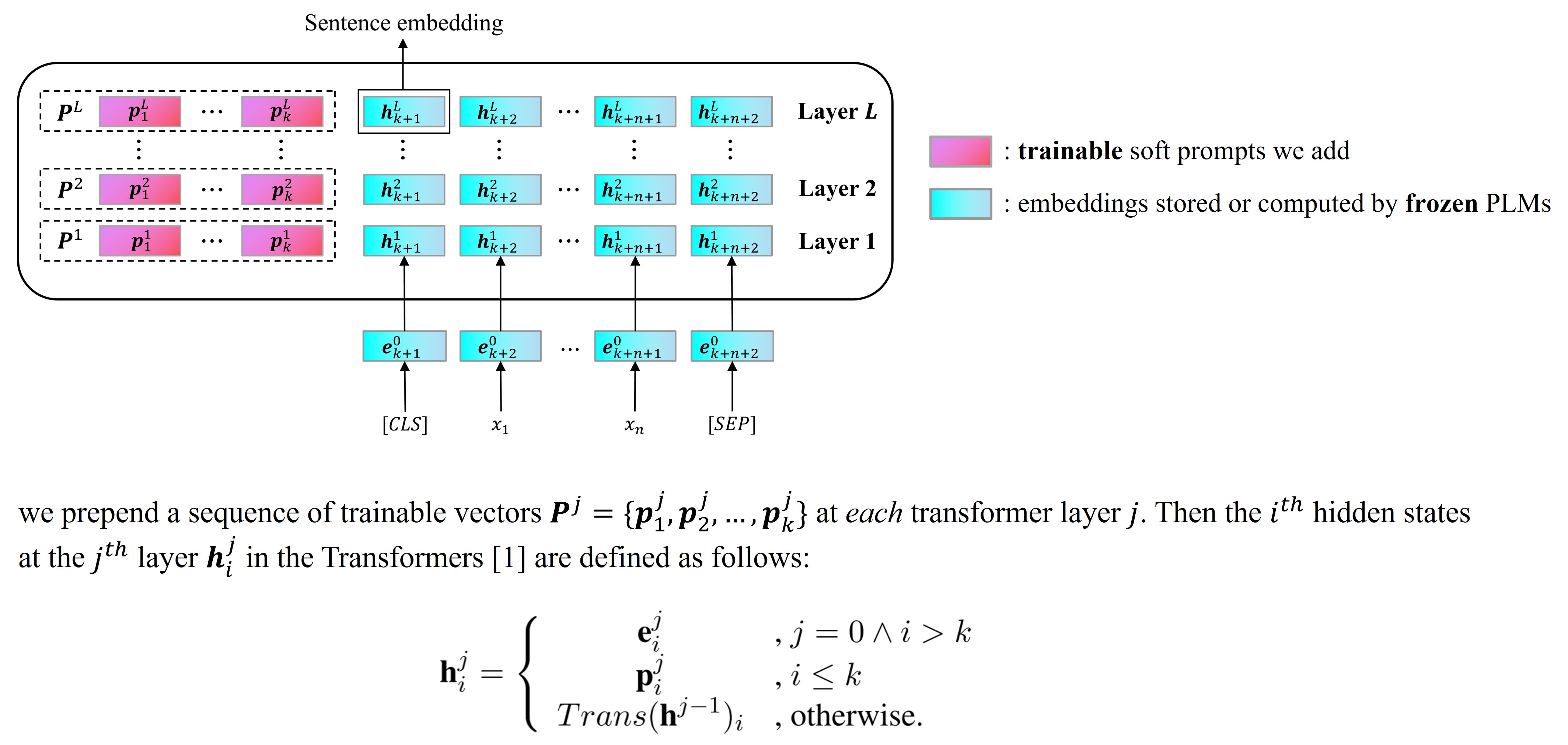

Contrastive learning has been demonstrated to be effective in enhancing pre-trained language models (PLMs) to derive superior universal sentence embeddings. However, existing contrastive methods still have two limitations. Firstly, previous works may acquire poor performance under domain shift settings, thus hindering the application of sentence representations in practice. We attribute this low performance to the over-parameterization of PLMs with millions of parameters. To alleviate it, we propose PromCSE (Prompt-based Contrastive Learning for Sentence Embeddings), which only trains small-scale \emph{Soft Prompt} (i.e., a set of trainable vectors) while keeping PLMs fixed. Secondly, the commonly used NT-Xent loss function of contrastive learning does not fully exploit hard negatives in supervised learning settings. To this end, we propose to integrate an Energy-based Hinge loss to enhance the pairwise discriminative power, inspired by the connection between the NT-Xent loss and the Energy-based Learning paradigm. Empirical results on seven standard semantic textual similarity (STS) tasks and a domain-shifted STS task both show the effectiveness of our method compared with the current state-of-the-art sentence embedding models. Our code is publicly avaliable at https://github.com/YJiangcm/PromCSE

PDF Abstract

SICK

SICK

CxC

CxC