Deep Semi-supervised Knowledge Distillation for Overlapping Cervical Cell Instance Segmentation

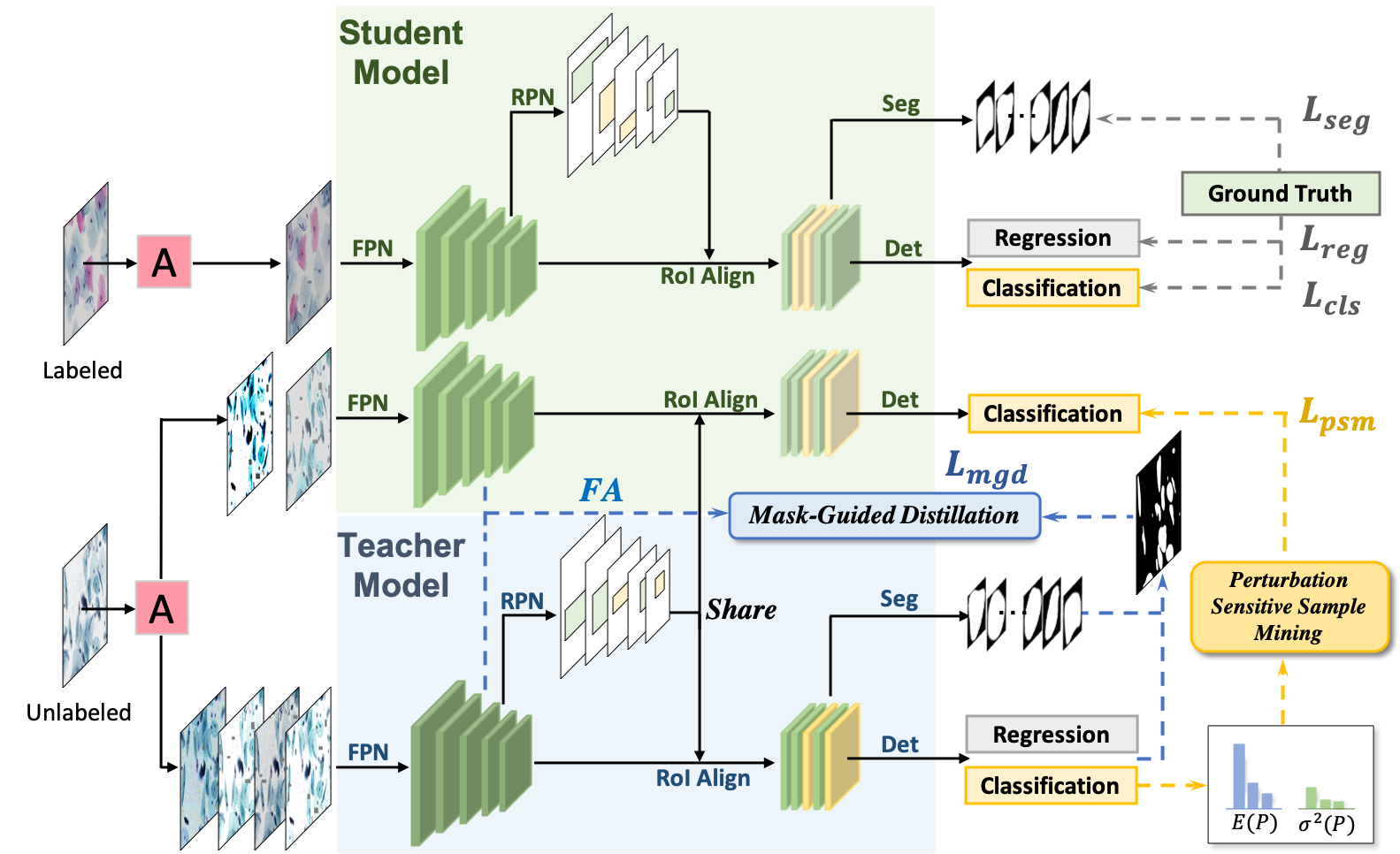

Deep learning methods show promising results for overlapping cervical cell instance segmentation. However, in order to train a model with good generalization ability, voluminous pixel-level annotations are demanded which is quite expensive and time-consuming for acquisition. In this paper, we propose to leverage both labeled and unlabeled data for instance segmentation with improved accuracy by knowledge distillation. We propose a novel Mask-guided Mean Teacher framework with Perturbation-sensitive Sample Mining (MMT-PSM), which consists of a teacher and a student network during training. Two networks are encouraged to be consistent both in feature and semantic level under small perturbations. The teacher's self-ensemble predictions from $K$-time augmented samples are used to construct the reliable pseudo-labels for optimizing the student. We design a novel strategy to estimate the sensitivity to perturbations for each proposal and select informative samples from massive cases to facilitate fast and effective semantic distillation. In addition, to eliminate the unavoidable noise from the background region, we propose to use the predicted segmentation mask as guidance to enforce the feature distillation in the foreground region. Experiments show that the proposed method improves the performance significantly compared with the supervised method learned from labeled data only, and outperforms state-of-the-art semi-supervised methods.

PDF Abstract