Deep Sketch-guided Cartoon Video Inbetweening

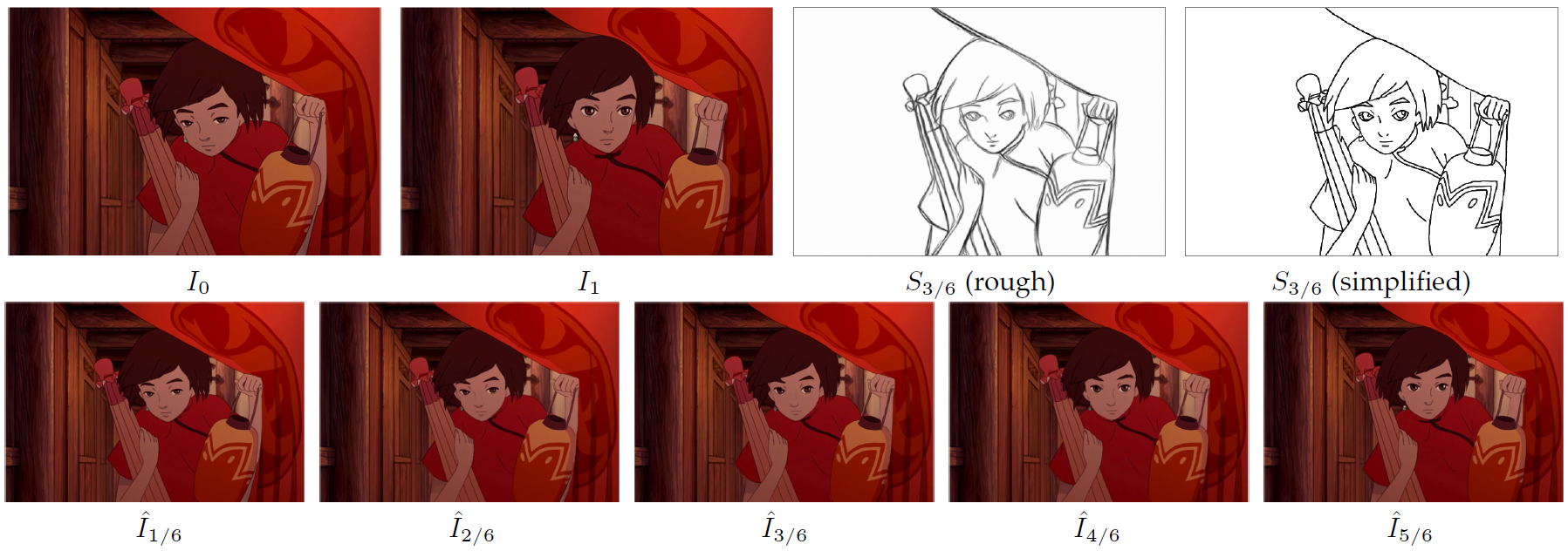

We propose a novel framework to produce cartoon videos by fetching the color information from two input keyframes while following the animated motion guided by a user sketch. The key idea of the proposed approach is to estimate the dense cross-domain correspondence between the sketch and cartoon video frames, and employ a blending module with occlusion estimation to synthesize the middle frame guided by the sketch. After that, the input frames and the synthetic frame equipped with established correspondence are fed into an arbitrary-time frame interpolation pipeline to generate and refine additional inbetween frames. Finally, a module to preserve temporal consistency is employed. Compared to common frame interpolation methods, our approach can address frames with relatively large motion and also has the flexibility to enable users to control the generated video sequences by editing the sketch guidance. By explicitly considering the correspondence between frames and the sketch, we can achieve higher quality results than other image synthesis methods. Our results show that our system generalizes well to different movie frames, achieving better results than existing solutions.

PDF Abstract

Sketch

Sketch