DeepV2D: Video to Depth with Differentiable Structure from Motion

We propose DeepV2D, an end-to-end deep learning architecture for predicting depth from video. DeepV2D combines the representation ability of neural networks with the geometric principles governing image formation. We compose a collection of classical geometric algorithms, which are converted into trainable modules and combined into an end-to-end differentiable architecture. DeepV2D interleaves two stages: motion estimation and depth estimation. During inference, motion and depth estimation are alternated and converge to accurate depth. Code is available at https://github.com/princeton-vl/DeepV2D.
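The alternation between motion and depth estimation described in the abstract can be illustrated with a minimal sketch. This is not the DeepV2D implementation or its API: the function `alternate_motion_and_depth`, its parameters, and the two toy estimators are hypothetical names chosen for illustration, and the toy update rule is an assumption picked only so that the loop visibly converges.

```python
from typing import Any, Callable, Tuple


def alternate_motion_and_depth(
    frames: Any,
    init_depth: Any,
    estimate_motion: Callable[[Any, Any], Any],
    estimate_depth: Callable[[Any, Any], Any],
    num_iters: int = 3,
) -> Tuple[Any, Any]:
    """Alternate two estimators, feeding each one the other's latest output.

    Hypothetical helper: `estimate_motion` and `estimate_depth` stand in for
    the trainable motion and depth stages; they are not DeepV2D functions.
    """
    depth = init_depth
    poses = None
    for _ in range(num_iters):
        # Motion stage: update camera motion given the current depth estimate.
        poses = estimate_motion(frames, depth)
        # Depth stage: update depth given the newly estimated motion.
        depth = estimate_depth(frames, poses)
    return depth, poses


# Toy stand-ins (assumptions, scalar-valued) whose composition is the
# contraction d -> d / 2 + 1, so the iterates approach the fixed point 2.
def toy_motion(frames: Any, depth: float) -> float:
    return depth / 2.0 + 1.0


def toy_depth(frames: Any, poses: float) -> float:
    return poses


final_depth, final_poses = alternate_motion_and_depth(
    frames=None,
    init_depth=10.0,
    estimate_motion=toy_motion,
    estimate_depth=toy_depth,
    num_iters=3,
)
```

In the toy run the depth iterates are 6.0, 4.0, and 3.0, each one closer to the fixed point. In a real system the two callables would be learned modules operating on images, depth maps, and camera poses.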

ICLR 2020

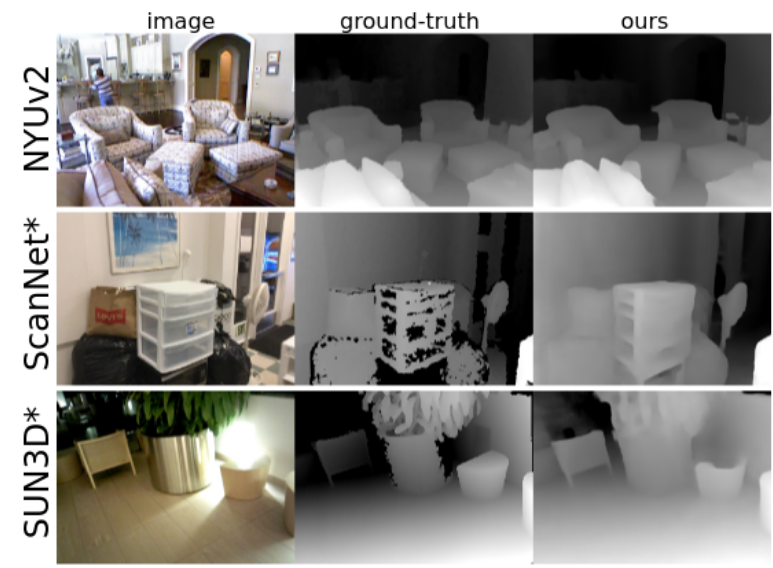

ScanNet

SUN3D
