Dense Relational Image Captioning via Multi-task Triple-Stream Networks

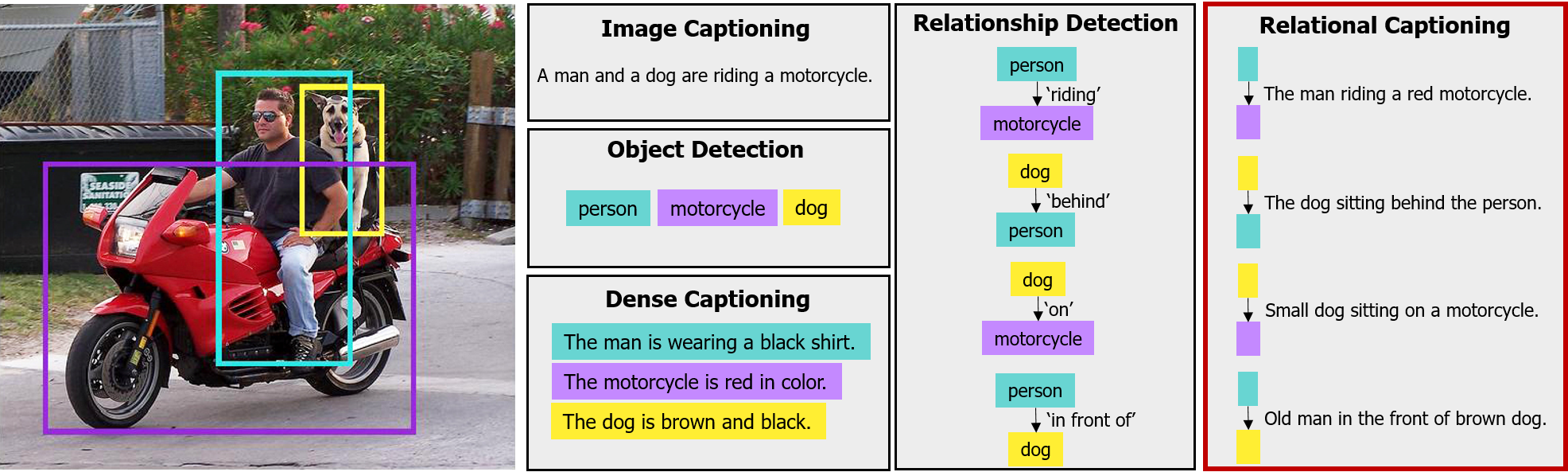

We introduce dense relational captioning, a novel image captioning task which aims to generate multiple captions with respect to relational information between objects in a visual scene. Relational captioning provides explicit descriptions for each relationship between object combinations. This framework is advantageous in both diversity and amount of information, leading to a comprehensive image understanding based on relationships, e.g., relational proposal generation. For relational understanding between objects, the part-of-speech (POS; i.e., subject-object-predicate categories) can be a valuable prior information to guide the causal sequence of words in a caption. We enforce our framework to learn not only to generate captions but also to understand the POS of each word. To this end, we propose the multi-task triple-stream network (MTTSNet) which consists of three recurrent units responsible for each POS which is trained by jointly predicting the correct captions and POS for each word. In addition, we found that the performance of MTTSNet can be improved by modulating the object embeddings with an explicit relational module. We demonstrate that our proposed model can generate more diverse and richer captions, via extensive experimental analysis on large scale datasets and several metrics. Then, we present applications of our framework to holistic image captioning, scene graph generation, and retrieval tasks.

PDF Abstract

Visual Genome

Visual Genome

VRD

VRD