Deriving pairwise transfer entropy from network structure and motifs

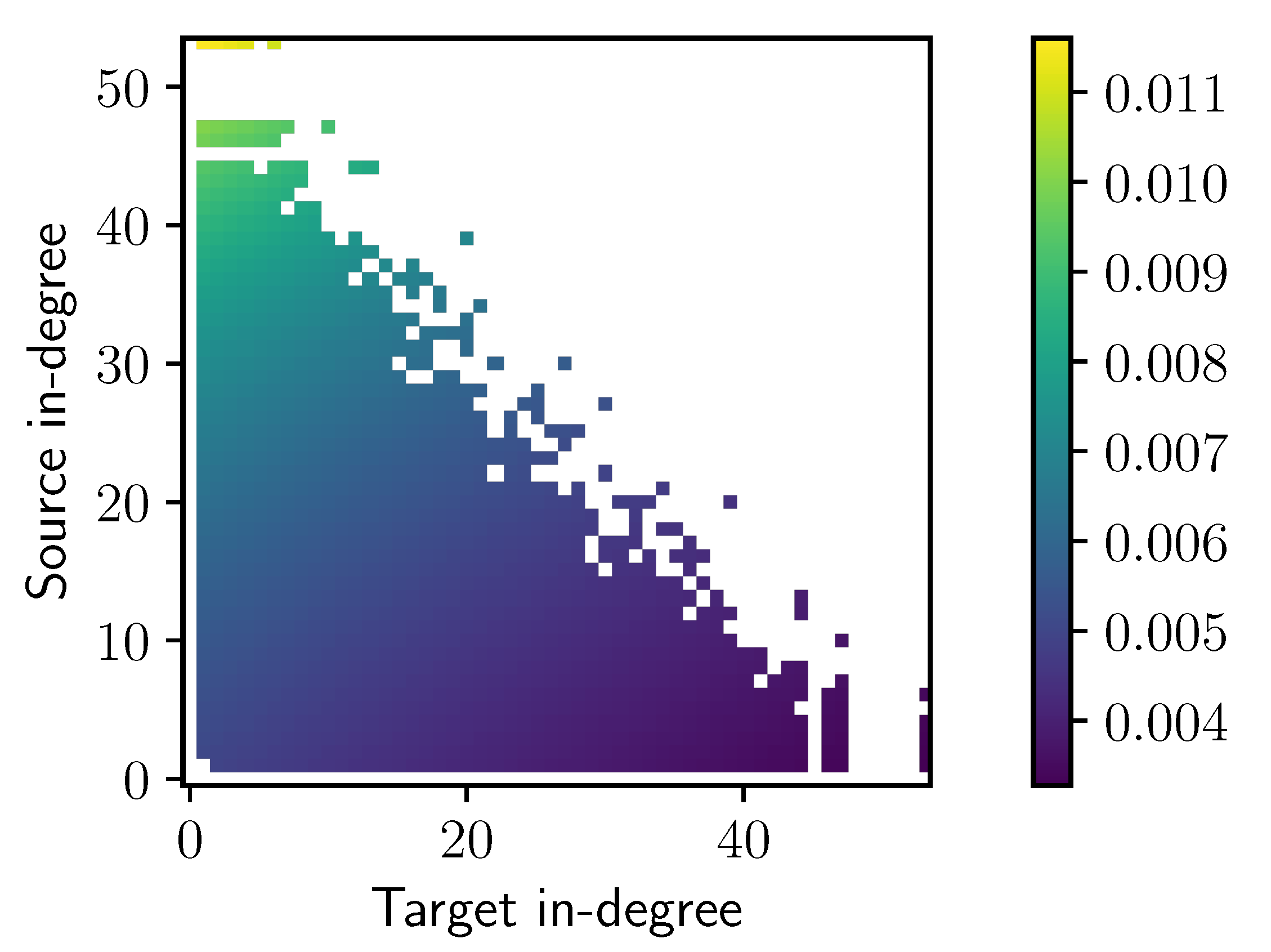

Transfer entropy is an established method for quantifying directed statistical dependencies in neuroimaging and complex systems datasets. The pairwise (or bivariate) transfer entropy from a source to a target node in a network does not depend solely on the local source-target link weight, but on the wider network structure that the link is embedded in. This relationship is studied using a discrete-time linearly-coupled Gaussian model, which allows us to derive the transfer entropy for each link from the network topology. It is shown analytically that the dependence on the directed link weight is only a first approximation, valid for weak coupling. More generally, the transfer entropy increases with the in-degree of the source and decreases with the in-degree of the target, indicating an asymmetry of information transfer between hubs and low-degree nodes. In addition, the transfer entropy is directly proportional to weighted motif counts involving common parents or multiple walks from the source to the target, which are more abundant in networks with a high clustering coefficient than in random networks. Our findings also apply to Granger causality, which is equivalent to transfer entropy for Gaussian variables. Moreover, similar empirical results on random Boolean networks suggest that the dependence of the transfer entropy on the in-degree extends to nonlinear dynamics.
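As an illustration of how the transfer entropy can be obtained from the network topology in a model of this kind, the following minimal sketch computes the pairwise transfer entropy for a first-order linear Gaussian process. It rests on several assumptions that are not taken from the text above: the update rule is `x[t+1] = A @ x[t] + noise`, where `A[i, j]` is the weight of the link from node `j` to node `i`; the noise is independent with unit variance; the spectral radius of `A` is below 1 so that a stationary covariance exists; and only one time step of history is used for source and target. The function names are hypothetical.

```python
import numpy as np


def stationary_covariance(A):
    """Solve Sigma = A Sigma A^T + I (unit-variance noise is an assumption)."""
    n = A.shape[0]
    # Vectorised form of the fixed-point equation: (I - A kron A) vec(Sigma) = vec(I).
    vec_sigma = np.linalg.solve(np.eye(n * n) - np.kron(A, A), np.eye(n).ravel())
    return vec_sigma.reshape(n, n)


def conditional_variance(sigma, lagged, target, cond):
    """Variance of x[t+1][target] given the time-t values of the nodes in cond."""
    cond = list(cond)
    c = lagged[target, cond]          # Cov(x[t+1][target], x[t][cond])
    s = sigma[np.ix_(cond, cond)]     # Cov(x[t][cond], x[t][cond])
    return sigma[target, target] - c @ np.linalg.solve(s, c)


def pairwise_transfer_entropy(A, source, target):
    """Pairwise transfer entropy (in nats) from source to target, history length 1."""
    sigma = stationary_covariance(A)
    lagged = A @ sigma                # Cov(x[t+1], x[t]) for the assumed update rule
    var_given_past = conditional_variance(sigma, lagged, target, [target])
    var_given_both = conditional_variance(sigma, lagged, target, [target, source])
    return 0.5 * np.log(var_given_past / var_given_both)


if __name__ == "__main__":
    c = 0.1
    # Two nodes, a single link of weight c from node 1 (source) to node 0 (target).
    A = np.array([[0.0, c],
                  [0.0, 0.0]])
    print(pairwise_transfer_entropy(A, source=1, target=0))
    print(pairwise_transfer_entropy(A, source=0, target=1))
```

Under these assumptions, an isolated link of weight `c` gives a transfer entropy of `0.5 * ln(1 + c**2)`, which is roughly `c**2 / 2` for small `c`; this matches the weak-coupling regime in which the link weight alone is a good approximation. Adding further rows and columns to `A` (for example a common parent of both nodes) lets one explore how the surrounding structure changes the value for the same link weight.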
