DiffCSE: Difference-based Contrastive Learning for Sentence Embeddings

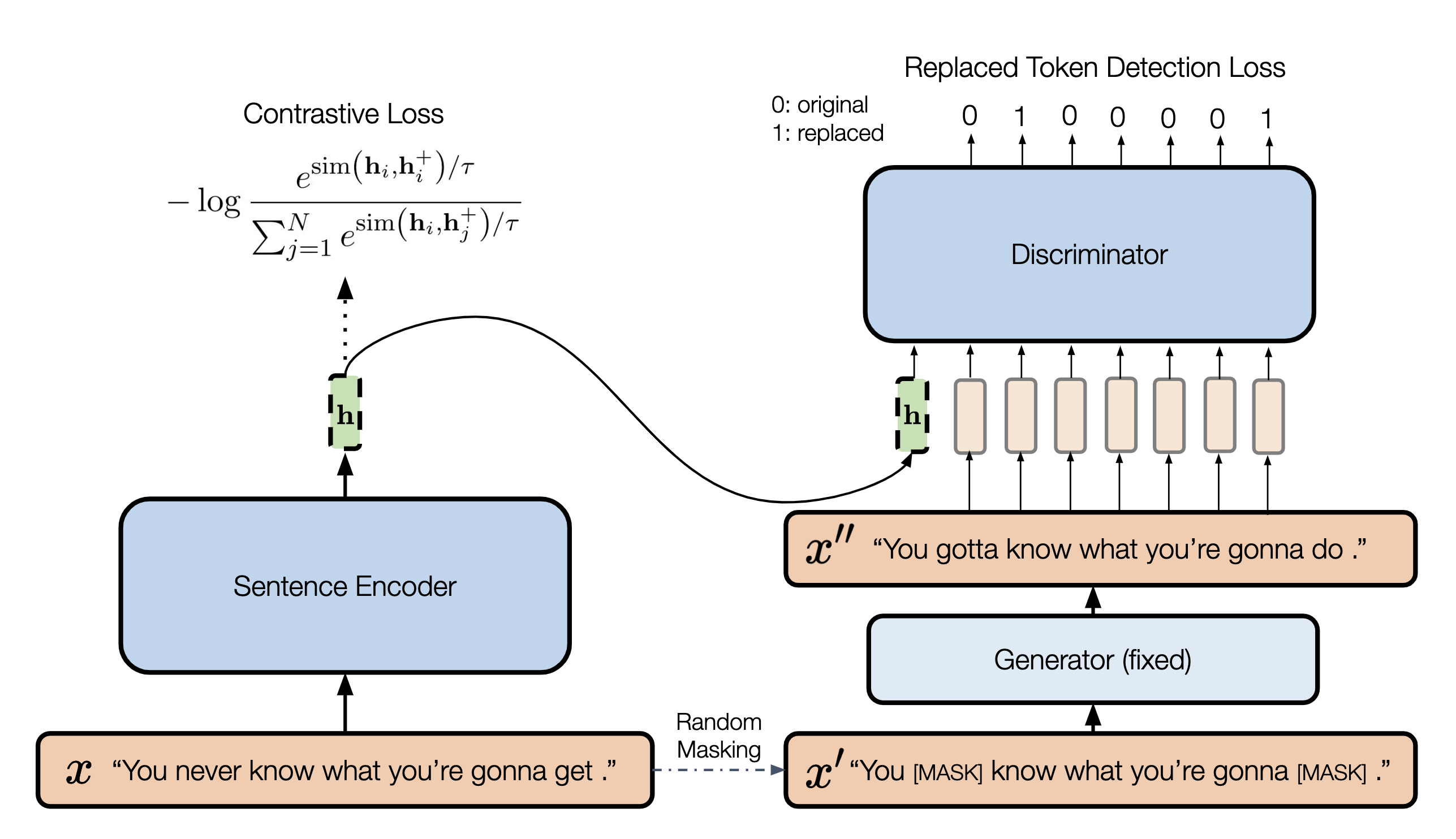

We propose DiffCSE, an unsupervised contrastive learning framework for learning sentence embeddings. DiffCSE learns sentence embeddings that are sensitive to the difference between the original sentence and an edited sentence, where the edited sentence is obtained by stochastically masking out tokens of the original sentence and then sampling replacements from a masked language model. We show that DiffCSE is an instance of equivariant contrastive learning (Dangovski et al., 2021), which generalizes contrastive learning and learns representations that are insensitive to certain types of augmentations and sensitive to other "harmful" types of augmentations. Our experiments show that DiffCSE achieves state-of-the-art results among unsupervised sentence representation learning methods, outperforming unsupervised SimCSE by 2.3 absolute points on semantic textual similarity tasks.
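The editing step described in the abstract (mask tokens at random, then fill the masks by sampling) can be illustrated with a minimal sketch. This is not the authors' implementation: `toy_mlm_fill` is a hypothetical stand-in that samples uniformly from a small vocabulary instead of querying a real masked language model, and the function names, the `mask_rate` default, and the per-position `diff` labels are assumptions made for illustration only.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_rate, rng):
    """Replace each token with MASK independently with probability mask_rate."""
    return [MASK if rng.random() < mask_rate else tok for tok in tokens]


def toy_mlm_fill(masked, vocab, rng):
    """Hypothetical stand-in for a masked language model.

    A real model would sample each replacement from its predicted
    distribution; here every MASK is filled uniformly at random from vocab.
    """
    return [rng.choice(vocab) if tok == MASK else tok for tok in masked]


def make_edited_sentence(tokens, vocab, mask_rate=0.3, seed=0):
    """Return (masked, edited, diff) for one sentence.

    diff[i] is 1 where the edited token differs from the original, else 0.
    mask_rate=0.3 is an illustrative value, not one taken from the paper.
    """
    rng = random.Random(seed)
    masked = mask_tokens(tokens, mask_rate, rng)
    edited = toy_mlm_fill(masked, vocab, rng)
    diff = [int(orig != new) for orig, new in zip(tokens, edited)]
    return masked, edited, diff


if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    vocab = ["the", "a", "cat", "dog", "sat", "stood", "on", "under", "mat", "rug"]
    masked, edited, diff = make_edited_sentence(sentence, vocab)
    print(masked)
    print(edited)
    print(diff)
```

The `diff` labels mark where the edited sentence departs from the original, which is the kind of difference signal the abstract says the embeddings should be sensitive to. Note that a sampled replacement can coincide with the original token, in which case that position is labeled 0 even though it was masked.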

NAACL 2022

SST

MRPC

SICK

MPQA Opinion Corpus

SentEval
