Discovering and Explaining the Representation Bottleneck of Graph Neural Networks from Multi-order Interactions

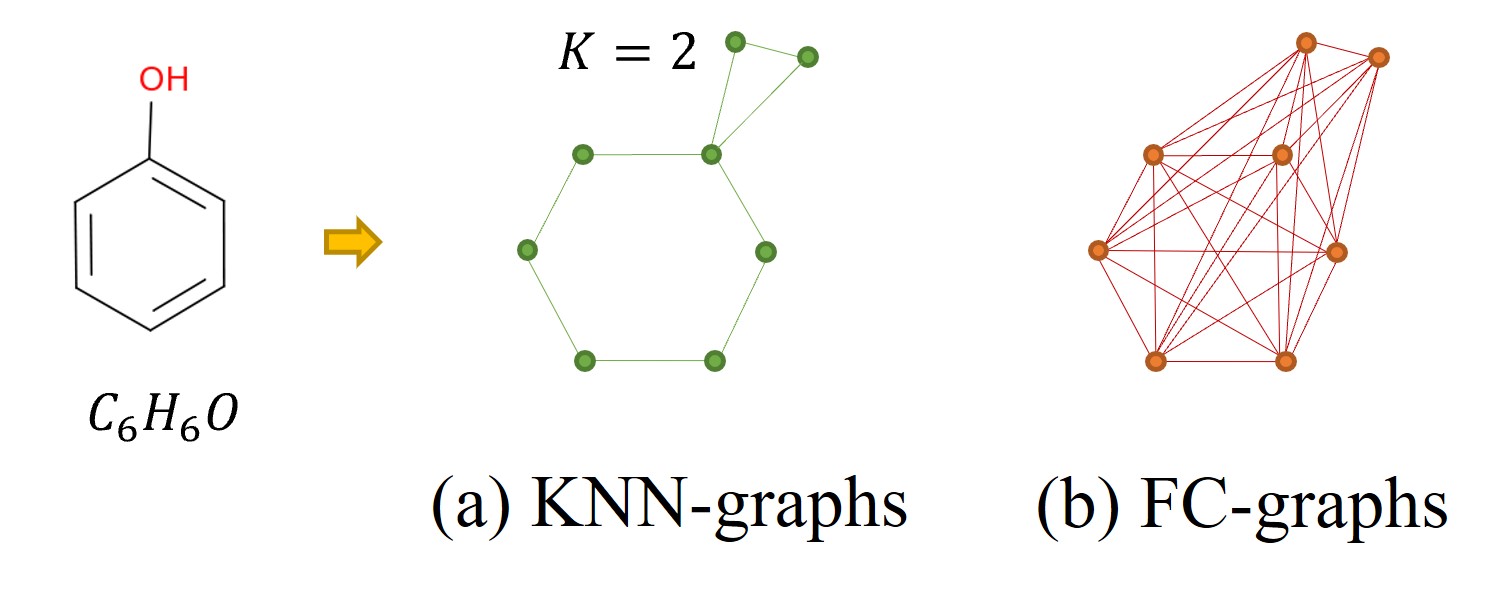

Graph neural networks (GNNs) mainly rely on the message-passing paradigm to propagate node features and build interactions, and different graph learning tasks require different ranges of node interactions. In this work, we explore the capacity of GNNs to capture interactions between nodes under contexts of different complexities. We discover that GNNs are usually unable to capture the most informative kinds of interactions for diverse graph learning tasks, and we name this phenomenon the representation bottleneck of GNNs. To explain it, we demonstrate that the inductive bias introduced by existing graph construction mechanisms can prevent GNNs from learning interactions of the most appropriate complexity, which results in the representation bottleneck. To address this limitation, we propose a novel graph rewiring approach that uses the interaction patterns learned by GNNs to dynamically adjust the receptive field of each node. Extensive experiments on both real-world and synthetic datasets demonstrate that our algorithm alleviates the representation bottleneck and improves the performance of GNNs more than state-of-the-art graph rewiring baselines.

CIFAR-10
