EDGE: Editable Dance Generation From Music

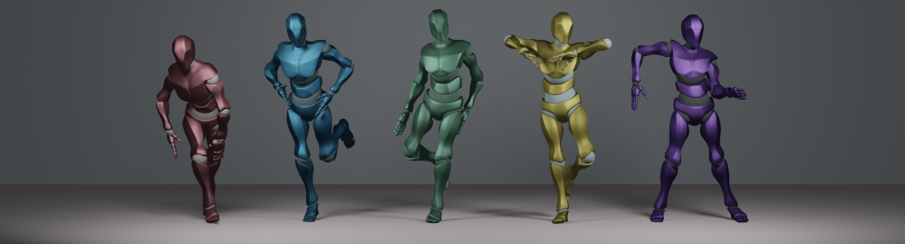

Dance is an important human art form, but creating new dances can be difficult and time-consuming. In this work, we introduce Editable Dance GEneration (EDGE), a state-of-the-art method for editable dance generation that is capable of creating realistic, physically plausible dances while remaining faithful to the input music. EDGE uses a transformer-based diffusion model paired with Jukebox, a strong music feature extractor, and confers powerful editing capabilities well-suited to dance, including joint-wise conditioning and in-betweening. We introduce a new metric for physical plausibility and extensively evaluate the quality of dances generated by our method through (1) multiple quantitative metrics on physical plausibility, beat alignment, and diversity benchmarks, and, more importantly, (2) a large-scale user study, demonstrating a significant improvement over previous state-of-the-art methods. Qualitative samples from our model can be found at our website.
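The abstract names joint-wise conditioning and in-betweening as editing capabilities but does not say how they are implemented. One common way to impose such constraints on a generated motion sequence is to overwrite the constrained entries with known values through a binary mask. The sketch below illustrates that idea only; it is an assumption, not the authors' implementation, and the function names (`blend_constraints`, `inbetweening_mask`, `joint_mask`) and the `(frames, joints, 3)` layout are hypothetical.

```python
import numpy as np


def blend_constraints(x_generated, x_known, mask):
    """Keep known values where mask is True, generated values elsewhere.

    x_generated, x_known: arrays of shape (frames, joints, 3).
    mask: boolean array broadcastable to that shape.
    """
    return np.where(mask, x_known, x_generated)


def inbetweening_mask(frames, joints, n_keep):
    """Constrain the first and last n_keep frames; the middle stays free."""
    mask = np.zeros((frames, joints, 1), dtype=bool)
    mask[:n_keep] = True
    mask[frames - n_keep:] = True
    return mask


def joint_mask(frames, joints, joint_ids):
    """Constrain the listed joints in every frame (joint-wise conditioning)."""
    mask = np.zeros((frames, joints, 1), dtype=bool)
    mask[:, list(joint_ids)] = True
    return mask


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    generated = rng.normal(size=(10, 4, 3))  # stand-in for a model sample
    known = np.zeros((10, 4, 3))             # stand-in for user-provided motion

    # In-betweening: fix two frames at each end, let the rest be generated.
    edited = blend_constraints(generated, known, inbetweening_mask(10, 4, 2))
    print(edited.shape)
```

In a diffusion sampler, a blend like this would typically be applied at every denoising step, with the known values first noised to the current step; that detail is omitted here and is likewise an assumption.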

CVPR 2023

Results from the Paper

Ranked #1 on Motion Synthesis on AIST++ (Beat alignment score metric)

AIST++
