Experiments on Parallel Training of Deep Neural Network using Model Averaging

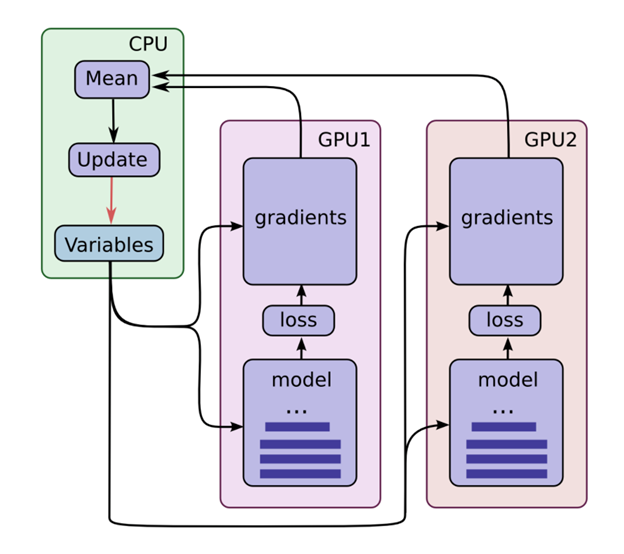

In this work we apply model averaging to parallel training of deep neural network (DNN). Parallelization is done in a model averaging manner. Data is partitioned and distributed to different nodes for local model updates, and model averaging across nodes is done every few minibatches. We use multiple GPUs for data parallelization, and Message Passing Interface (MPI) for communication between nodes, which allows us to perform model averaging frequently without losing much time on communication. We investigate the effectiveness of Natural Gradient Stochastic Gradient Descent (NG-SGD) and Restricted Boltzmann Machine (RBM) pretraining for parallel training in model-averaging framework, and explore the best setups in term of different learning rate schedules, averaging frequencies and minibatch sizes. It is shown that NG-SGD and RBM pretraining benefits parameter-averaging based model training. On the 300h Switchboard dataset, a 9.3 times speedup is achieved using 16 GPUs and 17 times speedup using 32 GPUs with limited decoding accuracy loss.

PDF Abstract