Exploring Versatile Prior for Human Motion via Motion Frequency Guidance

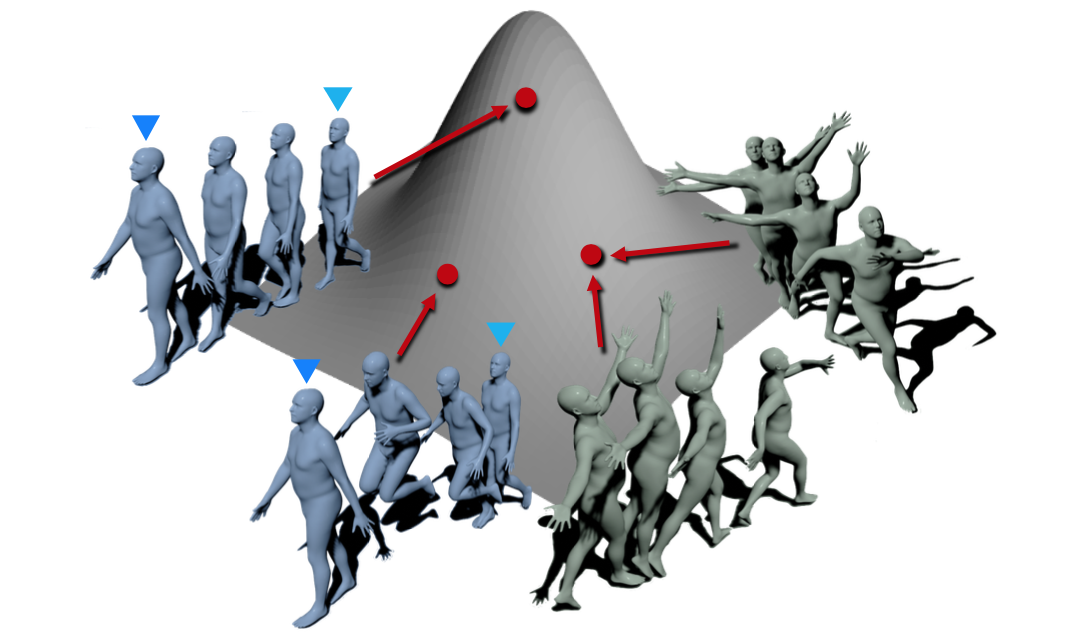

Priors play an important role in providing plausible constraints on human motion. Previous works design motion priors following a variety of paradigms under different circumstances, which leads to a lack of versatility. In this paper, we first summarize the indispensable properties of a motion prior and, accordingly, design a framework to learn a versatile motion prior that models the inherent probability distribution of human motions. Specifically, for efficient prior representation learning, we propose a global orientation normalization to remove redundant environment information from the original motion data space. In addition, a two-level frequency guidance, sequence-based and segment-based, is introduced into the encoding stage. We then adopt a denoising training scheme to disentangle the environment information from the input motion data in a learnable way, so as to generate consistent and distinguishable representations. Embedding our motion prior into prevailing backbones on three different tasks, we conduct extensive experiments; both quantitative and qualitative results demonstrate the versatility and effectiveness of our motion prior. Our model and code are available at https://github.com/JchenXu/human-motion-prior.

3DPW

AMASS

BABEL
