Extending Maps with Semantic and Contextual Object Information for Robot Navigation: a Learning-Based Framework using Visual and Depth Cues

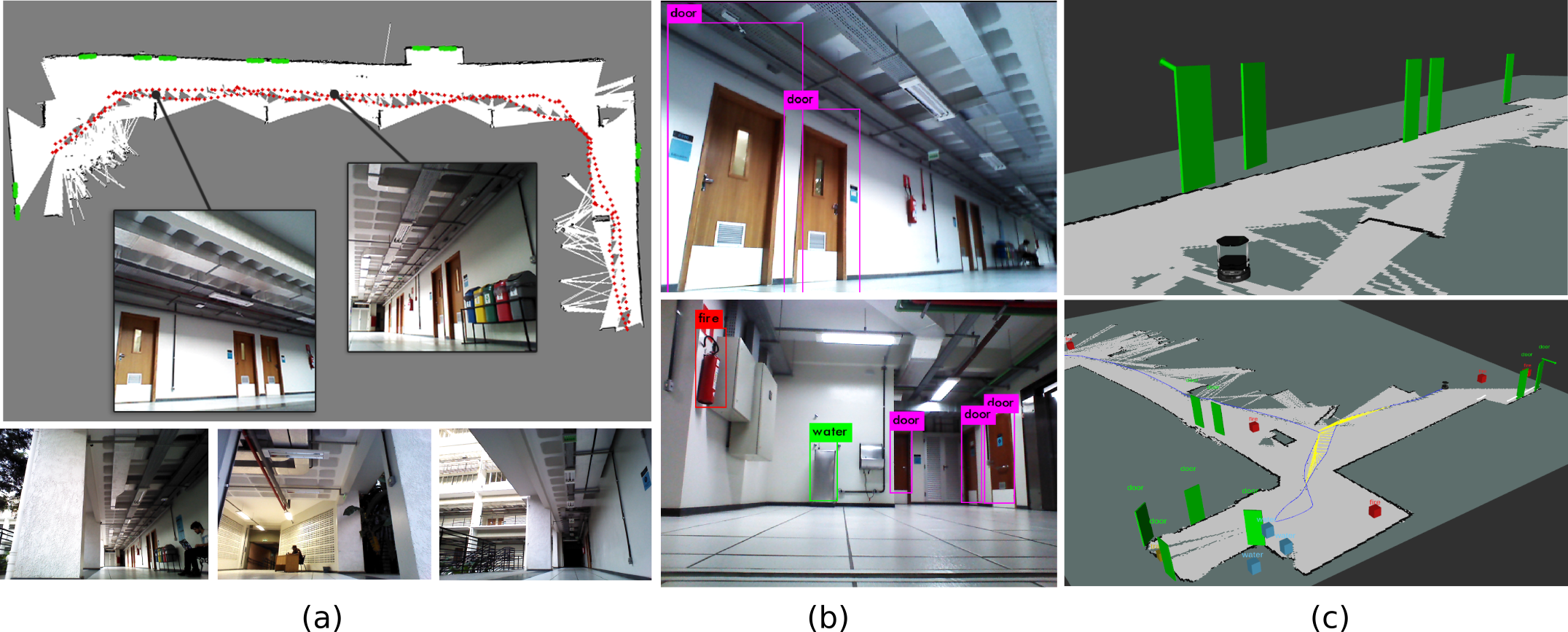

This paper addresses the problem of building augmented metric representations of scenes with semantic information from RGB-D images. We propose a complete framework to create an enhanced map representation of the environment with object-level information for use in applications such as human-robot interaction, assistive robotics, visual navigation, and manipulation tasks. Our formulation combines a CNN-based object detector (YOLO) with a 3D model-based segmentation technique to perform instance semantic segmentation and to localize, identify, and track different classes of objects in the scene. Semantic classes are tracked and positioned with a dictionary of Kalman filters, which combines sensor measurements over time and thus yields more accurate maps. The formulation is designed to identify and disregard dynamic objects in order to obtain a medium-term invariant map representation. The proposed method was evaluated on both collected and publicly available RGB-D data sequences acquired in different indoor scenes. Experimental results show the potential of the technique to produce augmented semantic maps containing several objects (notably doors). We also provide the community with a dataset of annotated object classes (doors, fire extinguishers, benches, water fountains) and their positions, as well as the source code as ROS packages.
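
To make the idea of a dictionary of Kalman filters concrete, the following is a minimal sketch and not the released ROS implementation. It assumes a constant-position model with an isotropic (scalar) covariance, a nearest-neighbour association with a fixed distance gate, and detections already expressed as 3D points in the map frame. The names `StaticObjectFilter` and `integrate`, the dictionary keys, and all noise and gate values are hypothetical choices made for illustration.

```python
import math


class StaticObjectFilter:
    """Constant-position Kalman filter with an isotropic (scalar) covariance."""

    def __init__(self, position, meas_var=0.05, process_var=1e-4):
        self.x = list(position)   # estimated 3D position in the map frame
        self.p = meas_var         # estimate variance, shared by all axes
        self.r = meas_var         # measurement noise variance (assumed value)
        self.q = process_var      # process noise variance (assumed value)
        self.hits = 1             # number of measurements fused so far

    def update(self, z):
        self.p += self.q                   # predict: the object is assumed static
        k = self.p / (self.p + self.r)     # Kalman gain
        self.x = [xi + k * (zi - xi) for xi, zi in zip(self.x, z)]
        self.p *= 1.0 - k                  # variance shrinks after each fusion
        self.hits += 1


def integrate(filters, label, z, gate=0.5):
    """Fuse a detection into the nearest same-class filter, or start a new one."""
    best_key, best_dist = None, gate
    for key, f in filters.items():
        if key[0] != label:
            continue
        d = math.dist(f.x, z)
        if d < best_dist:
            best_key, best_dist = key, d
    if best_key is None:
        # No existing instance within the gate: register a new object.
        index = sum(1 for key in filters if key[0] == label)
        best_key = (label, index)
        filters[best_key] = StaticObjectFilter(z)
    else:
        filters[best_key].update(z)
    return best_key


# Dictionary keyed by (class label, instance index).
filters = {}
for z in [(2.0, 1.0, 0.0), (2.1, 0.9, 0.0), (1.9, 1.1, 0.0)]:
    integrate(filters, "door", z)          # three noisy views of one door
integrate(filters, "door", (6.0, 1.0, 0.0))  # a second, distant door
```

In this sketch, repeated observations of the same door are merged into a single entry whose variance decreases with each update, while a detection outside the gate creates a new entry. A fuller version would also need a rule for discarding dynamic objects, which is omitted here.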
