FAT-DeepFFM: Field Attentive Deep Field-aware Factorization Machine

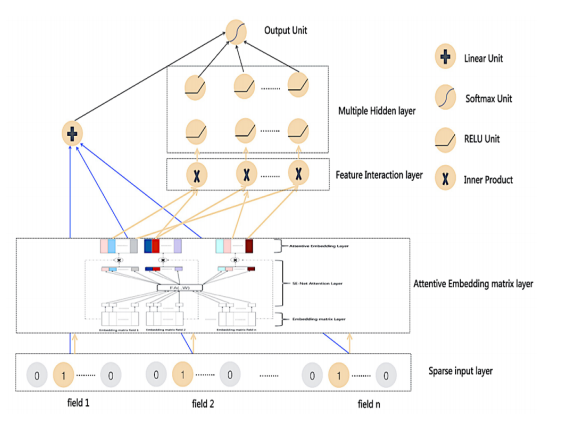

Click-through rate (CTR) estimation is a fundamental task in personalized advertising and recommender systems. Recent years have witnessed the success of both deep learning based models and attention mechanisms in various computer vision (CV) and natural language processing (NLP) tasks. Combining the attention mechanism with a deep CTR model is a promising direction because it may bring together the advantages of both. Although some CTR models, such as the Attentional Factorization Machine (AFM), have been proposed to model the weights of second-order interaction features, we posit that evaluating feature importance before the explicit feature interaction step is also important for CTR prediction: when a task has many input features, the model can learn to selectively highlight the informative ones and suppress the less useful ones. In this paper, we propose a new neural CTR model named Field Attentive Deep Field-aware Factorization Machine (FAT-DeepFFM), which combines the Deep Field-aware Factorization Machine (DeepFFM) with a Compose-Excitation network (CENet) field attention mechanism. We propose CENet as an enhanced version of the Squeeze-Excitation network (SENet) to highlight feature importance. We conduct extensive experiments on two real-world datasets, and the results show that FAT-DeepFFM achieves the best performance, with varying margins of improvement over state-of-the-art methods. We also compare two kinds of attention mechanisms (attention before explicit feature interaction vs. attention after explicit feature interaction) and demonstrate that the former significantly outperforms the latter.
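To make the idea of "attention before explicit feature interaction" concrete, the following is a minimal sketch of SENet-style field reweighting followed by pairwise interactions. It is not the CENet mechanism proposed in the paper, whose details are not given in the abstract. It also simplifies the field-aware setting to one embedding per field, uses mean pooling for the squeeze step, and uses random untrained weights. All function and variable names are hypothetical.

```python
import math
import random


def senet_field_attention(embeddings, w1, w2):
    """SENet-style field reweighting (illustrative, not the paper's CENet).

    embeddings: list of f field embeddings, each a list of k floats.
    w1: r x f reduction matrix; w2: f x r expansion matrix.
    Returns (reweighted_embeddings, attention_weights).
    """
    # Squeeze: summarize each field embedding by its mean (assumed pooling).
    z = [sum(e) / len(e) for e in embeddings]
    # Excitation: two small fully connected layers (ReLU, then sigmoid).
    hidden = [max(0.0, sum(w * zi for w, zi in zip(row, z))) for row in w1]
    attn = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w2
    ]
    # Reweight: scale every field embedding by its attention weight.
    scaled = [[a * x for x in e] for a, e in zip(attn, embeddings)]
    return scaled, attn


def pairwise_inner_products(embeddings):
    """Explicit second-order interactions: inner product of every field pair."""
    f = len(embeddings)
    return [
        sum(x * y for x, y in zip(embeddings[i], embeddings[j]))
        for i in range(f)
        for j in range(i + 1, f)
    ]


# Toy setup: 4 fields, embedding size 3, reduction to 2 hidden units.
rng = random.Random(0)
num_fields, dim, reduced = 4, 3, 2
emb = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_fields)]
w1 = [[rng.uniform(-1, 1) for _ in range(num_fields)] for _ in range(reduced)]
w2 = [[rng.uniform(-1, 1) for _ in range(reduced)] for _ in range(num_fields)]

# Attention is applied first; interactions are computed on the reweighted fields.
scaled_emb, attn = senet_field_attention(emb, w1, w2)
interactions = pairwise_inner_products(scaled_emb)
```

In this sketch, each interaction term is scaled by the product of the two fields' attention weights, so a field judged uninformative is damped in every interaction it takes part in. An AFM-style model would instead weight each interaction term after it has been computed.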

Criteo
