Focal Self-attention for Local-Global Interactions in Vision Transformers

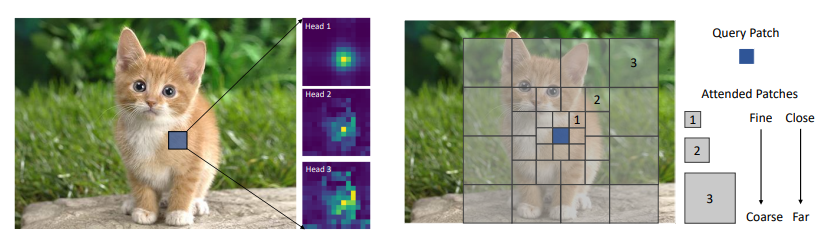

Recently, Vision Transformer and its variants have shown great promise on various computer vision tasks. The ability to capture short- and long-range visual dependencies through self-attention is arguably the main source of their success. But it also brings challenges due to its quadratic computational overhead, especially for high-resolution vision tasks (e.g., object detection). In this paper, we present focal self-attention, a new mechanism that incorporates both fine-grained local and coarse-grained global interactions. With this mechanism, each token attends to its closest surrounding tokens at fine granularity and to tokens far away at coarse granularity, and can thus capture both short- and long-range visual dependencies efficiently and effectively. With focal self-attention, we propose a new variant of Vision Transformer models, called Focal Transformer, which achieves superior performance over the state-of-the-art vision Transformers on a range of public image classification and object detection benchmarks. In particular, our Focal Transformer models with a moderate size of 51.1M parameters and a larger size of 89.8M parameters achieve 83.5% and 83.8% Top-1 accuracy, respectively, on ImageNet classification at 224×224 resolution. Using Focal Transformers as the backbones, we obtain consistent and substantial improvements over the current state-of-the-art Swin Transformers for 6 different object detection methods trained with standard 1x and 3x schedules. Our largest Focal Transformer yields 58.7/58.9 box mAPs and 50.9/51.3 mask mAPs on COCO mini-val/test-dev, and 55.4 mIoU on ADE20K for semantic segmentation, setting a new state of the art on three of the most challenging computer vision tasks.
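To make the fine-local versus coarse-global idea concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes a single query token, one fine level and one coarse level, average pooling for the coarse summary, and no learned query/key/value projections (keys double as values). The names `focal_keys`, `attend`, `local_radius`, and `pool` are hypothetical and chosen only for illustration.

```python
import numpy as np

def focal_keys(feat, row, col, local_radius=1, pool=4):
    """Collect keys for the query at (row, col).

    Assumptions (illustrative only): feat has shape (H, W, C) with H and W
    divisible by `pool`; the coarse level is a plain average pool.
    """
    H, W, C = feat.shape
    # Fine level: raw tokens in a (2r+1) x (2r+1) window, clipped at the borders.
    r0, r1 = max(0, row - local_radius), min(H, row + local_radius + 1)
    c0, c1 = max(0, col - local_radius), min(W, col + local_radius + 1)
    fine = feat[r0:r1, c0:c1].reshape(-1, C)
    # Coarse level: summarize the whole map as (H/pool) x (W/pool) pooled tokens.
    coarse = feat.reshape(H // pool, pool, W // pool, pool, C).mean(axis=(1, 3))
    coarse = coarse.reshape(-1, C)
    return np.concatenate([fine, coarse], axis=0)

def attend(query, keys):
    """Scaled dot-product attention of one query over the keys (keys act as values)."""
    scores = keys @ query / np.sqrt(query.shape[0])
    weights = np.exp(scores - scores.max())  # numerically stable softmax
    weights /= weights.sum()
    return weights @ keys

rng = np.random.default_rng(0)
feat = rng.standard_normal((16, 16, 8))   # 256 tokens of dimension 8
keys = focal_keys(feat, row=8, col=8)     # 9 fine tokens + 16 pooled tokens
out = attend(feat[8, 8], keys)
print(keys.shape[0], "keys instead of", feat.shape[0] * feat.shape[1])
```

Under these assumptions the query sees 25 keys rather than all 256 tokens, while the pooled tokens still give it a summary of the entire feature map. How the actual model partitions windows and how many granularity levels it uses is not specified in this abstract.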

ImageNet

MS COCO

ADE20K