Generalized Zero-Shot Learning via VAE-Conditioned Generative Flow

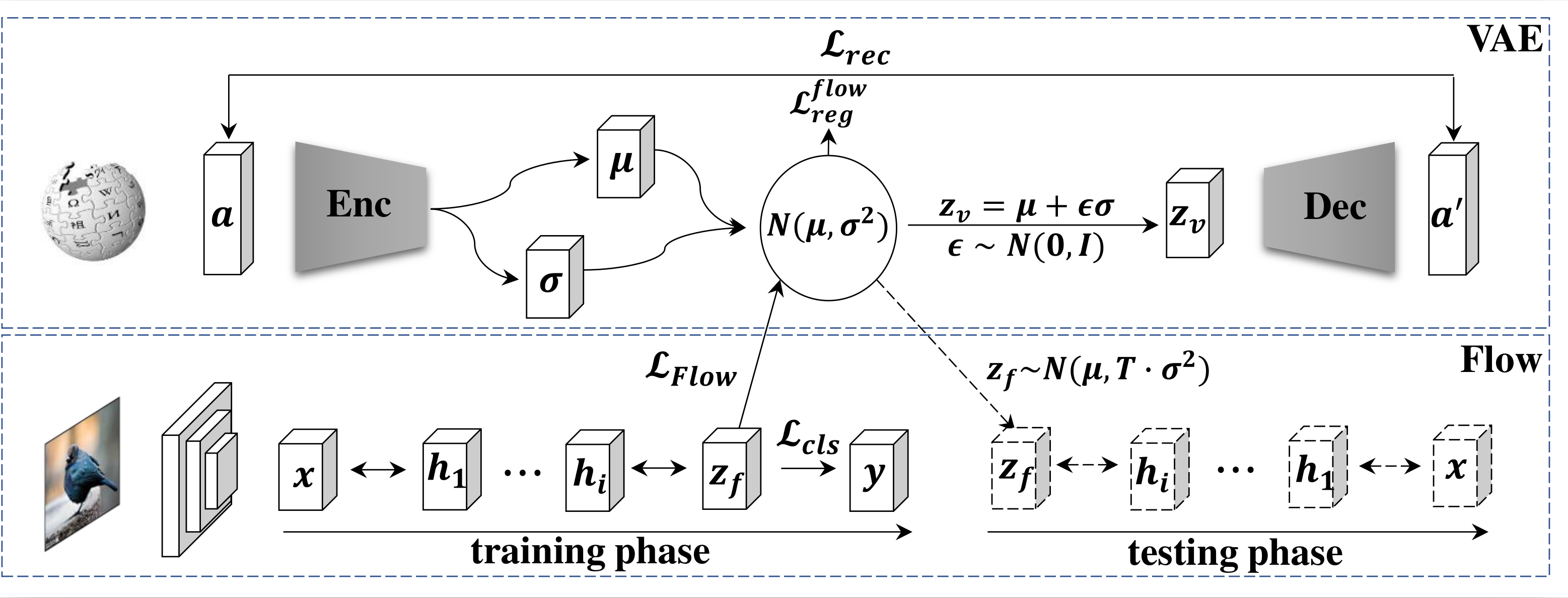

Generalized zero-shot learning (GZSL) aims to recognize both seen and unseen classes by transferring knowledge from semantic descriptions to visual representations. Recent generative methods formulate GZSL as a missing-data problem and mainly adopt GANs or VAEs to generate visual features for unseen classes. However, GANs often suffer from training instability, and VAEs can only optimize a lower bound on the log-likelihood of the observed data. To overcome these limitations, we resort to generative flows, a family of generative models that offer exact likelihood estimation. More specifically, we propose a conditional version of generative flows for GZSL, namely the VAE-Conditioned Generative Flow (VAE-cFlow). A VAE first encodes the semantic descriptions into tractable latent distributions; conditioned on these distributions, the generative flow optimizes the exact log-likelihood of the observed visual features. We ensure that the conditional latent distribution is both semantically meaningful and inter-class discriminative by i) adopting the VAE reconstruction objective, ii) relaxing the zero-mean constraint in the VAE posterior regularization, and iii) adding a classification regularization on the latent variables. Our method achieves state-of-the-art GZSL results on five well-known benchmark datasets, with especially significant improvements in the large-scale setting. Code is released at https://github.com/guyuchao/VAE-cFlow-ZSL.
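
To illustrate what "exact log-likelihood under a conditional latent distribution" means, the following is a minimal NumPy sketch and not the released implementation. It assumes a single elementwise affine map as a stand-in for the flow, and a diagonal Gaussian prior whose mean and log-variance are taken as given; in a VAE-conditioned setting these would come from an encoder applied to the class's semantic description. All function and parameter names are hypothetical.

```python
import numpy as np


def affine_flow_forward(x, log_scale, shift):
    """Toy invertible map z = x * exp(log_scale) + shift (assumed stand-in for a flow).

    Returns z and log|det(dz/dx)|, which for an elementwise map
    is simply the sum of the log-scales.
    """
    z = x * np.exp(log_scale) + shift
    log_det = np.sum(log_scale)
    return z, log_det


def gaussian_log_prob(z, mean, log_var):
    """Log-density of a diagonal Gaussian with a (possibly non-zero) mean."""
    return -0.5 * np.sum(
        np.log(2.0 * np.pi) + log_var + (z - mean) ** 2 / np.exp(log_var),
        axis=-1,
    )


def conditional_log_likelihood(x, log_scale, shift, prior_mean, prior_log_var):
    """Exact log p(x | class) via the change-of-variables formula:

        log p(x | c) = log N(f(x); mu_c, sigma_c^2) + log|det(df/dx)|

    prior_mean and prior_log_var play the role of the class-conditional
    latent distribution (assumed here to be supplied by a semantic encoder).
    """
    z, log_det = affine_flow_forward(x, log_scale, shift)
    return gaussian_log_prob(z, prior_mean, prior_log_var) + log_det


# Usage: a 2-D feature evaluated under a class prior centred at (1, 2).
x = np.array([1.0, 2.0])
ll = conditional_log_likelihood(
    x,
    log_scale=np.zeros(2),
    shift=np.zeros(2),
    prior_mean=np.array([1.0, 2.0]),
    prior_log_var=np.zeros(2),
)
```

Because the map is invertible and its Jacobian determinant is available in closed form, the value returned is the exact log-density rather than a lower bound. Allowing a non-zero `prior_mean` per class is what lets the latent distributions of different classes separate.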

ImageNet

CUB-200-2011
