HalluciNet-ing Spatiotemporal Representations Using a 2D-CNN

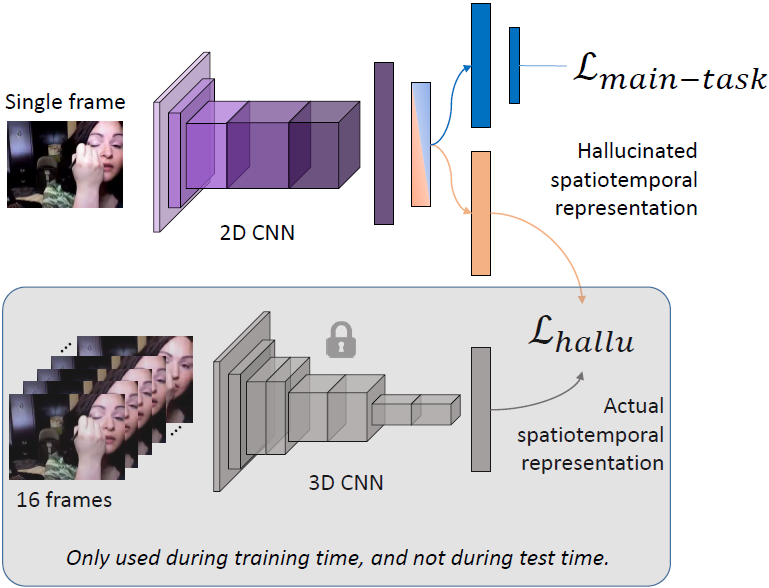

Spatiotemporal representations learned using 3D convolutional neural networks (CNN) are currently used in state-of-the-art approaches for action related tasks. However, 3D-CNN are notorious for being memory and compute resource intensive as compared with more simple 2D-CNN architectures. We propose to hallucinate spatiotemporal representations from a 3D-CNN teacher with a 2D-CNN student. By requiring the 2D-CNN to predict the future and intuit upcoming activity, it is encouraged to gain a deeper understanding of actions and how they evolve. The hallucination task is treated as an auxiliary task, which can be used with any other action related task in a multitask learning setting. Thorough experimental evaluation shows that the hallucination task indeed helps improve performance on action recognition, action quality assessment, and dynamic scene recognition tasks. From a practical standpoint, being able to hallucinate spatiotemporal representations without an actual 3D-CNN can enable deployment in resource-constrained scenarios, such as with limited computing power and/or lower bandwidth. Codebase is available here: https://github.com/ParitoshParmar/HalluciNet.

PDF Abstract

UCF101

UCF101

HMDB51

HMDB51

MTL-AQA

MTL-AQA