Improving Video Instance Segmentation via Temporal Pyramid Routing

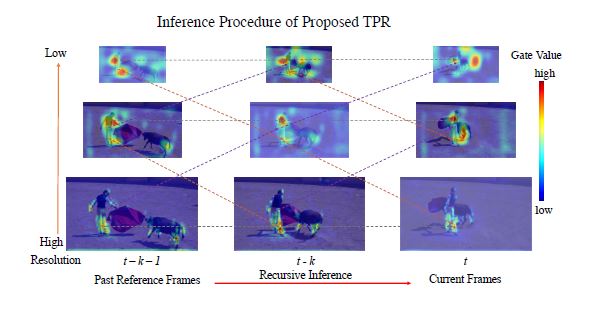

Video Instance Segmentation (VIS) is a new and inherently multi-task problem, which aims to detect, segment, and track each instance in a video sequence. Existing approaches are mainly based on single-frame features or single-scale features of multiple frames, where either temporal information or multi-scale information is ignored. To incorporate both temporal and scale information, we propose a Temporal Pyramid Routing (TPR) strategy to conditionally align and conduct pixel-level aggregation from a feature pyramid pair of two adjacent frames. Specifically, TPR contains two novel components, including Dynamic Aligned Cell Routing (DACR) and Cross Pyramid Routing (CPR), where DACR is designed for aligning and gating pyramid features across temporal dimension, while CPR transfers temporally aggregated features across scale dimension. Moreover, our approach is a light-weight and plug-and-play module and can be easily applied to existing instance segmentation methods. Extensive experiments on three datasets including YouTube-VIS (2019, 2021) and Cityscapes-VPS demonstrate the effectiveness and efficiency of the proposed approach on several state-of-the-art video instance and panoptic segmentation methods. Codes will be publicly available at \url{https://github.com/lxtGH/TemporalPyramidRouting}.

PDF Abstract

MS COCO

MS COCO

YouTube-VIS 2019

YouTube-VIS 2019

Cityscapes-VPS

Cityscapes-VPS