Inferring the Reader: Guiding Automated Story Generation with Commonsense Reasoning

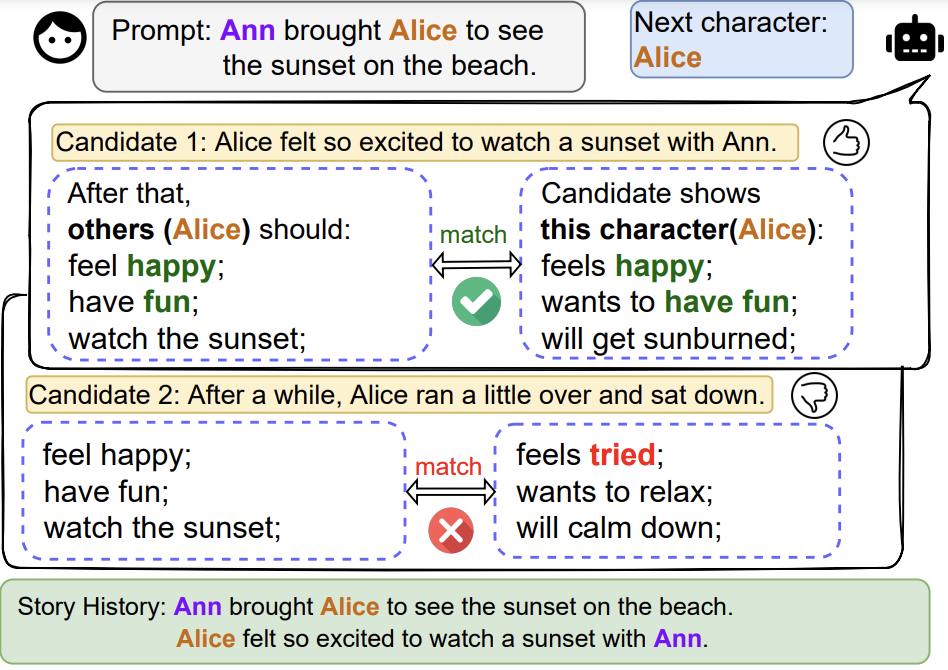

Transformer-based language model approaches to automated story generation currently provide state-of-the-art results. However, they still suffer from plot incoherence as generated narratives grow longer, and they critically lack basic commonsense reasoning. Furthermore, existing methods generally focus only on single-character stories or fail to track characters at all. To improve the coherence of generated narratives and to expand the scope of character-centric narrative generation, we introduce Commonsense-inference Augmented neural StoryTelling (CAST), a framework for introducing commonsense reasoning into the generation process with the option to model the interaction between multiple characters. We find that CAST produces significantly more coherent, on-topic, enjoyable, and fluent stories than existing models in both the single-character and two-character settings across three storytelling domains.

ATOMIC

ROCStories