Interactively Picking Real-World Objects with Unconstrained Spoken Language Instructions

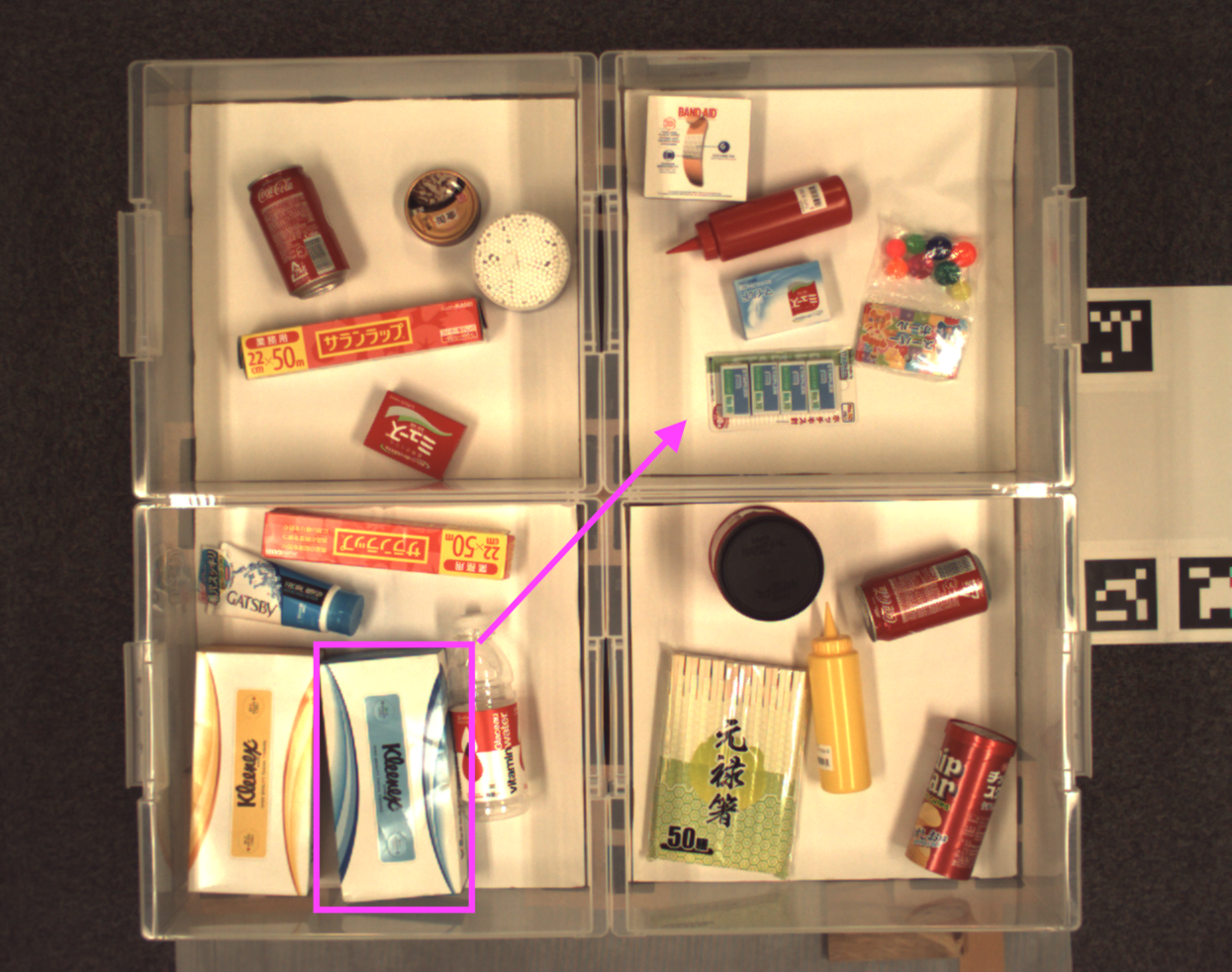

Comprehension of spoken natural language is an essential component for robots to communicate with human effectively. However, handling unconstrained spoken instructions is challenging due to (1) complex structures including a wide variety of expressions used in spoken language and (2) inherent ambiguity in interpretation of human instructions. In this paper, we propose the first comprehensive system that can handle unconstrained spoken language and is able to effectively resolve ambiguity in spoken instructions. Specifically, we integrate deep-learning-based object detection together with natural language processing technologies to handle unconstrained spoken instructions, and propose a method for robots to resolve instruction ambiguity through dialogue. Through our experiments on both a simulated environment as well as a physical industrial robot arm, we demonstrate the ability of our system to understand natural instructions from human operators effectively, and how higher success rates of the object picking task can be achieved through an interactive clarification process.

PDF Abstract

PFN-PIC

PFN-PIC

MS COCO

MS COCO

ssd

ssd