Is Synthetic Data From Diffusion Models Ready for Knowledge Distillation?

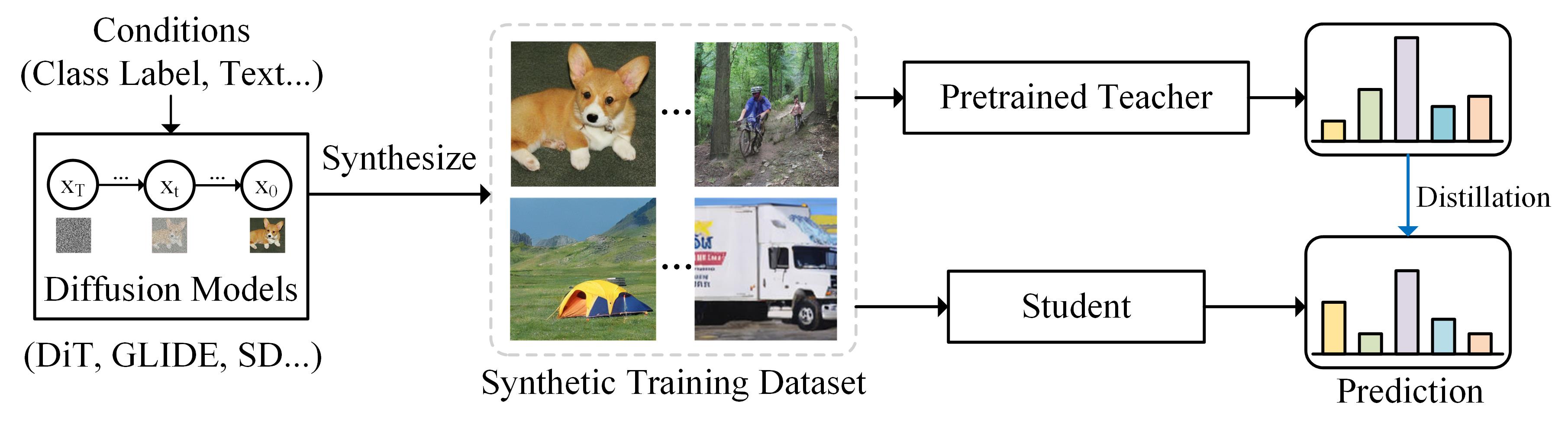

Diffusion models have recently achieved astonishing performance in generating high-fidelity, photo-realistic images. Despite this success, it remains unclear whether their synthetic images can substitute for real images in knowledge distillation when real images are unavailable. In this paper, we extensively study whether and how synthetic images produced by state-of-the-art diffusion models can be used for knowledge distillation without access to real images, and draw three key conclusions: (1) synthetic data from diffusion models can easily achieve state-of-the-art performance among existing synthesis-based distillation methods, (2) low-fidelity synthetic images are better teaching materials, and (3) relatively weak classifiers are better teachers. Code is available at https://github.com/zhengli97/DM-KD.
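The distillation step can be made concrete with a minimal sketch, assuming a standard Hinton-style knowledge distillation objective: the teacher's temperature-softened predictions on each synthetic image act as soft targets for the student, so neither ground-truth labels nor real images are needed. The function names, the plain-Python logit lists, and the default temperature of 4.0 are illustrative assumptions; the exact loss and hyperparameters used in the DM-KD code may differ.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax (subtracts the max for numerical stability)."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits: Sequence[float],
            teacher_logits: Sequence[float],
            temperature: float = 4.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2.

    Assumed Hinton-style objective; only the teacher's outputs on the
    (synthetic) input are used as targets, no ground-truth label.
    """
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(pt * (math.log(pt) - math.log(ps))
             for pt, ps in zip(p_t, p_s) if pt > 0)
    return kl * temperature ** 2


def batch_kd_loss(student_batch: Sequence[Sequence[float]],
                  teacher_batch: Sequence[Sequence[float]],
                  temperature: float = 4.0) -> float:
    """Mean KD loss over a batch of synthetic images (hypothetical helper)."""
    losses = [kd_loss(s, t, temperature)
              for s, t in zip(student_batch, teacher_batch)]
    return sum(losses) / len(losses)
```

Scaling by T² keeps the gradient magnitude of the soft-target term roughly independent of the temperature, which is the usual reason for including it in this form of the loss.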


Datasets: ImageNet, CIFAR-100