Joint Feature Learning and Relation Modeling for Tracking: A One-Stream Framework

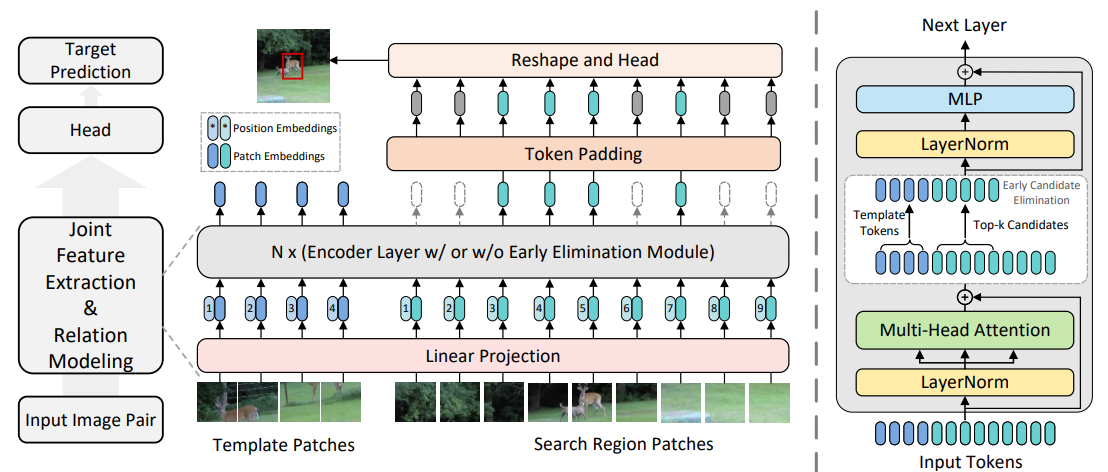

The current popular two-stream, two-stage tracking framework extracts the template and search region features separately and then performs relation modeling, so the extracted features lack awareness of the target and have limited target-background discriminability. To tackle this issue, we propose a novel one-stream tracking (OSTrack) framework that unifies feature learning and relation modeling by bridging the template-search image pairs with bidirectional information flows. In this way, discriminative target-oriented features can be dynamically extracted through mutual guidance. Since no extra heavy relation modeling module is needed and the implementation is highly parallelized, the proposed tracker runs at a fast speed. To further improve inference efficiency, an in-network candidate early elimination module is proposed based on the strong similarity prior calculated in the one-stream framework. As a unified framework, OSTrack achieves state-of-the-art performance on multiple benchmarks. In particular, it shows impressive results on the one-shot tracking benchmark GOT-10k, achieving 73.7% AO and improving on the existing best result (SwinTrack) by 4.3%. In addition, our method maintains a good performance-speed trade-off and shows faster convergence. The code and models are available at https://github.com/botaoye/OSTrack.
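
The one-stream idea and the early elimination step described in the abstract can be illustrated with a minimal NumPy sketch. This is not the released OSTrack implementation. It assumes a single attention head with random projection weights, omits residual connections and MLP layers, and scores each search token by the mean attention it receives from all template tokens; the abstract does not specify which similarity is used, so that choice is an assumption. The names `joint_attention`, `eliminate_candidates`, and `keep_ratio` are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def joint_attention(template, search, w_q, w_k, w_v):
    """Self-attention over concatenated template and search tokens.

    Concatenating both token sets lets information flow in both
    directions (template <-> search) inside one attention operation.
    """
    tokens = np.concatenate([template, search], axis=0)  # (Nz + Nx, D)
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)
    return attn @ v, attn


def eliminate_candidates(out, attn, n_template, keep_ratio):
    """Keep only the search tokens most similar to the template.

    Assumption: similarity is the mean attention weight from the
    template rows to each search column.
    """
    n_search = out.shape[0] - n_template
    sim = attn[:n_template, n_template:].mean(axis=0)  # (Nx,)
    n_keep = max(1, int(np.ceil(keep_ratio * n_search)))
    # Indices of the top-scoring search tokens, restored to spatial order.
    keep = np.sort(np.argsort(-sim)[:n_keep])
    kept = np.concatenate([out[:n_template], out[n_template:][keep]], axis=0)
    return kept, keep


# Toy sizes, chosen only for illustration.
rng = np.random.default_rng(0)
D, NZ, NX = 16, 4, 12
template = rng.normal(size=(NZ, D))
search = rng.normal(size=(NX, D))
w_q, w_k, w_v = (rng.normal(size=(D, D)) / np.sqrt(D) for _ in range(3))

out, attn = joint_attention(template, search, w_q, w_k, w_v)
kept, keep_idx = eliminate_candidates(out, attn, NZ, keep_ratio=0.5)
print(out.shape, kept.shape)
```

Because the similarity scores are read directly from the attention matrix that the joint layer already computes, dropping low-scoring search tokens adds almost no extra computation while shrinking the sequence that later layers have to process.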

ImageNet

LaSOT

GOT-10k

TrackingNet

TNL2K

COESOT
